A.I. PRIME - Article

Adaptive Automation for Self-Healing Workflows in Financial Services

Discover how adaptive automation enables self-healing workflows that reduce manual exceptions by 40-60% in financial services operations.

Financial services organizations operate in an environment where even milliseconds matter and compliance failures carry existential consequences. Yet many institutions still rely on rigid, brittle automation that breaks under pressure - a single exception, an unexpected data format, or a downstream system outage can cascade into manual workarounds, missed deadlines, and regulatory exposure. Adaptive automation addresses exactly this brittleness. Rather than treating automation as a fixed set of rules, adaptive automation systems learn from anomalies, self-correct in real time, and escalate only the exceptions that genuinely require human judgment. For financial services leaders grappling with legacy workflows, compliance complexity, and the pressure to do more with less, adaptive automation offers a path to resilience without sacrificing control.

Understanding Adaptive Automation in High-Compliance Environments

Adaptive automation represents a paradigm shift from traditional rule-based workflow engines. Rather than executing a predetermined sequence of steps and failing when conditions deviate, adaptive systems continuously monitor their own performance, detect anomalies, and adjust their behavior in real time. In financial services, this capability is not merely a convenience - it is a strategic necessity. Learn more in our post on Continuous Optimization: Implement Closed‑Loop Feedback for Adaptive Workflows.

The core principle is simple: automation should behave like an experienced colleague who understands the intent behind a task, not just its mechanics. When a market order execution system encounters a liquidity constraint it has never seen before, it should not simply halt and create a manual queue. Instead, it should recognize the anomaly, consult its learned patterns, attempt alternative routing, and escalate to a human trader only if all of its fallbacks have been exhausted. This is adaptive automation in practice.

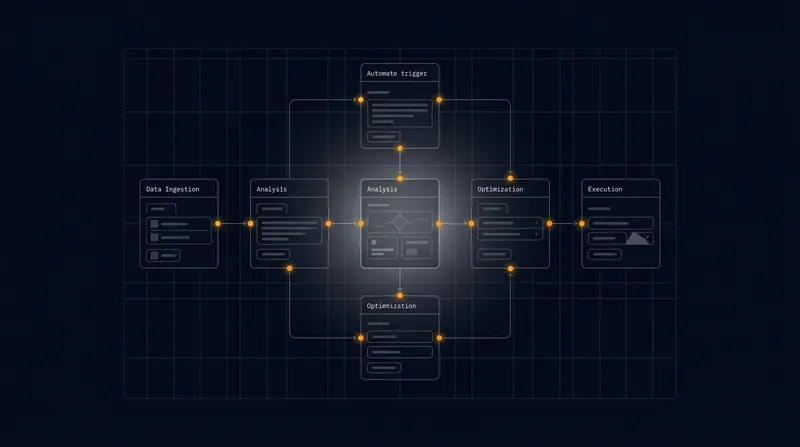

Traditional automation in financial services has operated under what researchers call the "supervisory control" model - humans design the rules, systems execute them, and exceptions bubble up for manual resolution. Adaptive automation shifts this balance: systems are designed with built-in learning loops, anomaly detection, and escalation thresholds that respect human expertise while minimizing unnecessary interruptions. This approach fits naturally with a playbook methodology, in which workflows are treated as composable templates whose steps can be adjusted based on real-world conditions and feedback.

Adaptive automation systems that incorporate anomaly detection and self-healing mechanisms can reduce manual intervention by 40-60% while simultaneously improving compliance posture by ensuring consistent, auditable responses to exceptions.

In regulated environments like financial services, the stakes are uniquely high. Every workflow must maintain an audit trail, adhere to regulatory requirements, and operate within defined risk parameters. Adaptive automation achieves this by embedding governance directly into the system's decision-making logic. Rather than bypassing controls, adaptive systems strengthen them by making control mechanisms more intelligent and responsive.

Core Components of Self-Healing Workflows

Self-healing workflows represent the operational expression of adaptive automation. These are systems designed to detect, diagnose, and remediate failures without human intervention whenever possible - and to escalate intelligently when they cannot. In financial services, self-healing workflows typically comprise four interdependent components: anomaly detection, automated rollback, fallback routing, and human-in-the-loop escalation. Learn more in our post on Troubleshooting Self-Healing Workflows: Common Failure Modes and Fixes.

Anomaly Detection and Real-Time Monitoring

The foundation of any self-healing workflow is the ability to recognize when something has gone wrong. Unlike traditional exception handling, which relies on explicit error codes and predefined failure conditions, anomaly detection uses statistical models and behavioral baselines to identify deviations from normal operation.

In a payment processing workflow, for example, anomaly detection might monitor transaction volumes, processing times, success rates, and message formats simultaneously. If a sudden spike in transaction failures occurs - even if the individual transactions are technically "valid" - the system recognizes the pattern as anomalous and triggers diagnostic protocols. Similarly, if a counterparty's settlement messages begin arriving in an unexpected format, the system detects the deviation and initiates investigation before attempting to process them.

The key innovation is that anomaly detection operates continuously and proactively, rather than reactively. Systems are trained on historical data to establish behavioral baselines for normal operation. When live data deviates significantly from these baselines, alerts are triggered at graduated severity levels. This allows the workflow to take corrective action before a minor issue becomes a critical failure.

Automated Rollback and State Recovery

When a workflow detects that it has entered an anomalous or failed state, the most reliable recovery mechanism is often to revert to a known-good state and retry the operation with modified parameters. Automated rollback is the mechanism that enables this without human intervention.

Consider a trade confirmation workflow that processes a batch of derivatives trades. Partway through the batch, the system detects that a downstream pricing service has become unreliable - responses are intermittently delayed or returning stale data. Rather than continuing to process trades with potentially incorrect pricing, an adaptive system would automatically roll back the batch to its pre-processing state, pause the workflow, and trigger fallback routing logic. Once the pricing service is confirmed to be healthy again, the batch is replayed from the rollback point.

Automated rollback requires careful state management. Every workflow must maintain transaction-like semantics where operations can be undone cleanly. In financial services, this is particularly critical because many workflows involve state changes in multiple systems - trade booking systems, position databases, compliance monitoring systems, and settlement systems. A true self-healing workflow must be able to coordinate rollback across all these systems atomically, ensuring that partial states never persist.

Fallback Routing and Graceful Degradation

Not every anomaly requires rollback. Many can be resolved by routing the workflow through an alternative path. Fallback routing is the mechanism that enables workflows to degrade gracefully when primary paths become unavailable.

A practical example: a loan origination workflow typically routes credit decisions through a primary underwriting system. If that system becomes unavailable, a well-designed adaptive workflow would automatically route requests to a secondary underwriting system, or to a simplified underwriting model with tighter risk parameters, or to a queue for manual underwriting - depending on the criticality of the request and the duration of the outage. The workflow adapts its behavior based on real-time conditions, rather than failing entirely.

Fallback routing requires maintaining a topology of alternative paths and the decision logic to select among them. This is where the playbook approach proves invaluable. Rather than hard-coding fallback sequences, workflows are designed as composable templates where different steps can be substituted based on conditions. A payment processing workflow might have a primary routing step, a secondary routing step, and a tertiary manual intervention step - all defined as interchangeable plays in the workflow playbook.

Human-in-the-Loop Escalation Patterns

Despite sophisticated automation, some situations require human judgment. The art of designing self-healing workflows lies in escalating exactly the right exceptions to humans at exactly the right time, with exactly the right context.

Escalation patterns define the rules for when, how, and to whom exceptions are escalated. A well-designed escalation pattern might specify: if an anomaly is detected but automated recovery succeeds within 30 seconds, do not escalate - just log it. If recovery takes longer than 30 seconds but completes within 5 minutes, escalate to the operations team with a summary. If recovery fails entirely, escalate immediately to the senior trader with full diagnostic context and recommended actions.
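The escalation pattern described above can be sketched as a small decision function. This is a minimal illustration, not a reference implementation: the names `Escalation` and `decide_escalation` are hypothetical, and the handling of a recovery that succeeds only after more than 5 minutes is an assumption, since the pattern above does not cover that case.

```python
from enum import Enum


class Escalation(Enum):
    LOG_ONLY = "log_only"
    OPERATIONS_SUMMARY = "operations_summary"
    SENIOR_TRADER_IMMEDIATE = "senior_trader_immediate"


def decide_escalation(recovered: bool, recovery_seconds: float) -> Escalation:
    """Map the outcome of automated recovery to an escalation action."""
    if not recovered:
        # Recovery failed entirely: escalate with full diagnostic context.
        return Escalation.SENIOR_TRADER_IMMEDIATE
    if recovery_seconds <= 30:
        # Fast automated recovery: log for later analysis, no interruption.
        return Escalation.LOG_ONLY
    if recovery_seconds <= 300:
        # Slow but successful recovery: send a summary to operations.
        return Escalation.OPERATIONS_SUMMARY
    # Assumption: a recovery slower than 5 minutes is treated like a failure.
    return Escalation.SENIOR_TRADER_IMMEDIATE
```

Keeping the thresholds in one function makes them easy to review and adjust under change management.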

The key principle is escalation with context. When an exception reaches a human, it should arrive with a clear explanation of what went wrong, what the system has already tried, what the current state is, and what the recommended next action is. This allows humans to make informed decisions quickly, rather than starting from scratch.

Effective escalation patterns reduce mean time to resolution by 50-70% because humans receive exceptions with full diagnostic context rather than raw error messages, enabling faster decision-making and more precise interventions.

Anomaly Detection Strategies for Financial Workflows

Anomaly detection is the sensory system of adaptive automation. Without reliable anomaly detection, workflows cannot recognize when they need to self-heal. In financial services, several anomaly detection strategies have proven particularly effective.

Statistical Baseline Modeling

The simplest and often most effective anomaly detection approach is statistical baseline modeling. For each key metric in a workflow - transaction volume, processing latency, success rate, message size, counterparty concentration - establish a statistical model of normal behavior based on historical data. Then, monitor live data against these models and flag deviations that exceed defined thresholds.

For example, a settlement workflow might establish that hourly settlement success rates have a mean of 99.7% and a standard deviation of 0.2%. Any hour where the success rate drops below 98.5% (1.2 percentage points, or 6 standard deviations, below the mean) would be flagged as anomalous and trigger investigation. This approach is computationally efficient and interpretable. A threshold this wide mainly catches sharp drops; catching gradual degradation earlier calls for tighter warning thresholds at lower severity levels.
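A minimal sketch of this check, using only the Python standard library. The function names are hypothetical, and the sketch assumes the baseline is summarized by a mean and a sample standard deviation; real success-rate data near 100% is often skewed, so the sigma count is a rough yardstick rather than a precise probability.

```python
from statistics import mean, stdev


def build_baseline(history: list[float]) -> tuple[float, float]:
    """Return (mean, sample standard deviation) of historical values."""
    return mean(history), stdev(history)


def sigmas_below(value: float, baseline_mean: float, baseline_std: float) -> float:
    """How many standard deviations `value` sits below the baseline mean."""
    return (baseline_mean - value) / baseline_std


def is_anomalous(value: float, baseline_mean: float, baseline_std: float,
                 max_sigmas: float = 6.0) -> bool:
    """Flag values that fall more than `max_sigmas` below the mean."""
    return sigmas_below(value, baseline_mean, baseline_std) > max_sigmas


# Figures from the settlement example: mean 99.7%, standard deviation 0.2%.
hour_with_drop = is_anomalous(98.4, 99.7, 0.2)   # 6.5 sigmas below the mean
ordinary_hour = is_anomalous(99.5, 99.7, 0.2)    # 1 sigma below the mean
```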

Behavioral Fingerprinting

Beyond aggregate statistics, behavioral fingerprinting tracks the detailed patterns of individual entities - counterparties, traders, accounts, message sources. Each entity develops a behavioral signature based on historical interactions. Deviations from an entity's signature are flagged as anomalous.

A counterparty that normally settles trades within 2 hours but suddenly begins settling within 30 minutes might be flagged as anomalous, even though fast settlement is technically positive. The anomaly indicates that something has changed - perhaps a new settlement system, perhaps a change in operational procedures - and warrants investigation before assuming everything is fine.
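One way to picture a behavioral signature is a per-entity baseline with a two-sided check, so that unusually fast settlement is flagged as readily as unusually slow settlement. The sketch below is illustrative only: the class name, the 3-sigma threshold, and the minimum sample count are all assumptions.

```python
from statistics import mean, stdev


class EntityFingerprints:
    """Keeps a history of one metric per entity and flags deviations."""

    def __init__(self, max_sigmas: float = 3.0, min_samples: int = 5):
        self._history: dict[str, list[float]] = {}
        self.max_sigmas = max_sigmas
        self.min_samples = min_samples

    def observe(self, entity_id: str, value: float) -> None:
        self._history.setdefault(entity_id, []).append(value)

    def is_anomalous(self, entity_id: str, value: float) -> bool:
        history = self._history.get(entity_id, [])
        if len(history) < self.min_samples:
            return False  # not enough data to form a signature yet
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            return value != mu
        # Two-sided: deviations in either direction count.
        return abs(value - mu) / sigma > self.max_sigmas


# A counterparty that usually settles in about 120 minutes.
fingerprints = EntityFingerprints()
for minutes in [118, 121, 120, 119, 122, 120]:
    fingerprints.observe("counterparty-a", minutes)
```

With this history, a 30-minute settlement for `counterparty-a` is flagged, while a 121-minute settlement is not.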

Unsupervised Learning Clustering

For more complex anomaly detection, unsupervised learning techniques like isolation forests or autoencoders can identify transactions or events that are statistically unusual relative to the broader population, even if they don't violate any explicit rules.

A wire transfer that is technically valid - correct format, sufficient funds, legitimate counterparty - but has characteristics that are rare in the historical data (e.g., an unusual combination of amount, destination, and time of day) would be flagged by an unsupervised anomaly detector of this kind. This catches potential fraud or operational errors that rule-based systems would miss.

Cross-System Correlation Analysis

Many anomalies only become apparent when data from multiple systems are correlated. A transaction might look normal in the trading system and normal in the settlement system, but the combination of characteristics across both systems might be anomalous.

For instance, if a trader books a trade for an unusually large notional amount at an unusual spread, and simultaneously the same trader attempts to hedge that position through an unusual counterparty, the correlation between these events might flag potential operational risk or compliance issues that wouldn't be caught by analyzing either system in isolation.

Automated Rollback and Recovery Mechanisms

Once an anomaly has been detected, the workflow must decide how to respond. Automated rollback is one of the most powerful response mechanisms available, but it requires careful design to be effective and safe.

Transaction-Based State Management

The foundation of reliable automated rollback is transaction-based state management. Each workflow should be designed as a series of atomic transactions, where either all changes within a transaction are committed or all are rolled back. This ensures that partial states never persist.

In practice, this often means wrapping workflow steps in distributed transaction frameworks that coordinate commits and rollbacks across multiple systems. For financial workflows, this might involve using two-phase commit protocols, or eventually consistent designs built on compensating transactions (commonly known as the saga pattern), where rollback is achieved by executing compensating transactions rather than undoing changes directly.
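The compensating-transaction approach can be sketched in a few lines. This is a simplified, in-process illustration with hypothetical step names; a production system would also need durable logging of each step and handling for compensations that themselves fail.

```python
from typing import Callable

# (name, action, compensation)
Step = tuple[str, Callable[[], None], Callable[[], None]]


def run_with_compensation(steps: list[Step]) -> list[str]:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    completed: list[Step] = []
    log: list[str] = []
    try:
        for step in steps:
            name, action, _ = step
            action()
            completed.append(step)
            log.append(f"done:{name}")
    except Exception as exc:
        log.append(f"failed:{name}:{exc}")
        for done_name, _, compensate in reversed(completed):
            compensate()
            log.append(f"compensated:{done_name}")
    return log


# Example: booking and position update succeed, settlement fails.
state = {"booked": False, "position": 0}


def book(): state["booked"] = True
def unbook(): state["booked"] = False
def add_position(): state["position"] += 100
def remove_position(): state["position"] -= 100
def settle(): raise RuntimeError("settlement unavailable")


log = run_with_compensation([
    ("book", book, unbook),
    ("position", add_position, remove_position),
    ("settle", settle, lambda: None),
])
```

After the run, `state` is back to its starting values and `log` records each action and compensation, which doubles as an audit trail.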

Checkpoint and Recovery Points

Rather than rolling back an entire workflow to the beginning, efficient recovery often involves rolling back only to the most recent checkpoint where the workflow was in a known-good state. This minimizes the amount of work that must be redone and reduces recovery time.

A batch processing workflow might establish checkpoints at the beginning of each transaction group. If a failure is detected partway through processing group 47, the workflow rolls back to the beginning of group 47 and retries, rather than rolling back to the beginning of the entire batch.
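A rough sketch of group-level checkpoints follows. It assumes each group's work can be staged in memory and discarded on failure; `process_batch` and the flaky processor are hypothetical names used only for illustration.

```python
def process_batch(groups, process_item, max_retries_per_group=2):
    """Process groups in order, retrying a failed group from its own start."""
    committed = []  # results of completed groups; never redone
    for index, group in enumerate(groups):
        for attempt in range(max_retries_per_group + 1):
            staged = []  # work for this group only; discarded on failure
            try:
                for item in group:
                    staged.append(process_item(item))
            except Exception as exc:
                if attempt == max_retries_per_group:
                    raise RuntimeError(f"group {index} failed after retries") from exc
                continue  # roll back to this group's checkpoint and retry
            committed.append(staged)
            break
    return committed


# Example: item 4 fails once (e.g. a pricing timeout), then succeeds.
calls = []
remaining_failures = {"count": 1}


def flaky_double(item):
    calls.append(item)
    if item == 4 and remaining_failures["count"] > 0:
        remaining_failures["count"] -= 1
        raise TimeoutError("pricing service timeout")
    return item * 2


result = process_batch([[1, 2], [3, 4], [5]], flaky_double)
```

Only the second group is reprocessed after the failure; the first group's results are left untouched.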

Conditional Retry Logic

Not every failure warrants immediate retry. Conditional retry logic determines whether a failure should be retried immediately, retried after a delay, or escalated without retry.

A workflow might retry immediately if the failure is classified as transient (e.g., temporary network timeout), wait and retry with exponential backoff if the failure suggests congestion (e.g., downstream system overloaded), and escalate without retry if the failure suggests a permanent problem (e.g., invalid account number). This prevents thrashing - repeatedly attempting operations that cannot possibly succeed - while maximizing the probability of successful recovery.
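The three retry behaviors can be sketched as follows. The exception-to-category mapping is an assumption made for illustration (`DownstreamOverloaded` and `EscalationRequired` are hypothetical classes), and unknown failures are conservatively treated as permanent.

```python
import time
from enum import Enum


class FailureKind(Enum):
    TRANSIENT = "transient"
    CONGESTION = "congestion"
    PERMANENT = "permanent"


class DownstreamOverloaded(Exception):
    """Hypothetical error raised when a downstream system is overloaded."""


class EscalationRequired(Exception):
    """Raised when retrying is pointless or attempts are exhausted."""


def classify(exc: Exception) -> FailureKind:
    if isinstance(exc, TimeoutError):
        return FailureKind.TRANSIENT
    if isinstance(exc, DownstreamOverloaded):
        return FailureKind.CONGESTION
    return FailureKind.PERMANENT  # assumption: unknown failures are not retried


def run_with_retry(operation, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception as exc:
            kind = classify(exc)
            if kind is FailureKind.PERMANENT or attempt == max_attempts:
                raise EscalationRequired(f"{kind.value}: {exc}") from exc
            if kind is FailureKind.CONGESTION:
                sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
            # TRANSIENT: fall through and retry immediately


# Example: the downstream system is overloaded twice, then accepts the call.
delays = []
calls = {"n": 0}


def overloaded_twice():
    calls["n"] += 1
    if calls["n"] <= 2:
        raise DownstreamOverloaded("busy")
    return "settled"


result = run_with_retry(overloaded_twice, sleep=delays.append)
```

Passing `sleep` as a parameter keeps the sketch testable without real waiting; here the recorded delays are 0.5 and 1.0 seconds.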

Recovery Playbooks

For common failure scenarios, pre-defined recovery playbooks accelerate the recovery process. These are sequences of actions known to resolve specific types of failures, documented in advance and executed automatically when those failures are detected.

A recovery playbook for "downstream system timeout" might specify: wait 5 seconds and retry once; if the retry fails, route to the fallback system; if the fallback system also times out, escalate to the operations team. By pre-defining these sequences, the system can execute them immediately upon detecting the failure condition, rather than having to figure out the recovery strategy in real time.
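As an illustration, the "downstream system timeout" playbook could be encoded as a function and registered under the failure type it handles. The registry name `PLAYBOOKS` and the callable parameters are hypothetical; this is a sketch of the idea rather than a prescribed design.

```python
import time


def downstream_timeout_playbook(primary, fallback, escalate,
                                wait_seconds=5.0, sleep=time.sleep):
    """Wait, retry the primary once, then try the fallback, then escalate."""
    sleep(wait_seconds)
    try:
        return primary()
    except TimeoutError:
        pass  # retry failed; move on to the fallback system
    try:
        return fallback()
    except TimeoutError as exc:
        escalate(f"primary retry and fallback both timed out: {exc}")
        return None


# Playbooks are looked up by the failure condition that triggers them.
PLAYBOOKS = {"downstream_timeout": downstream_timeout_playbook}


def always_times_out():
    raise TimeoutError("no response")


waits, escalations = [], []
outcome = PLAYBOOKS["downstream_timeout"](
    always_times_out,
    lambda: "routed-via-fallback",
    escalations.append,
    sleep=waits.append,
)
```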

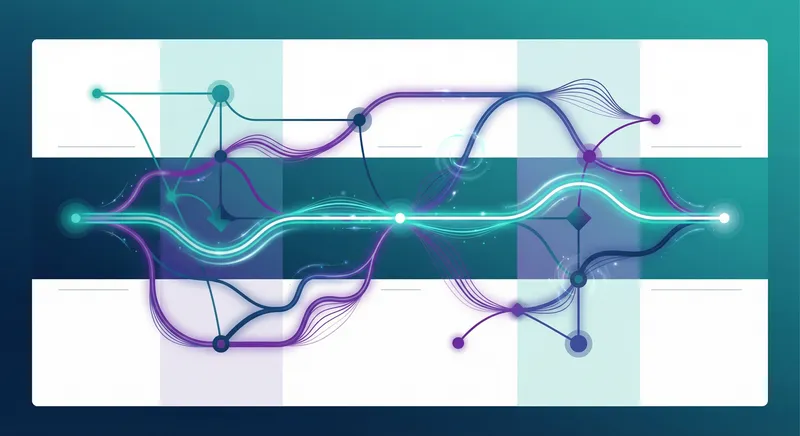

Fallback Routing Patterns and Design

Fallback routing allows workflows to continue operating even when preferred paths are unavailable. Effective fallback routing requires careful design to balance resilience with risk management.

Primary-Secondary-Tertiary Routing

The simplest fallback routing pattern involves defining multiple paths for each workflow step, prioritized by preference. The workflow attempts the primary path first. If it fails, it automatically attempts the secondary path. If that fails, it attempts the tertiary path. Only if all paths are exhausted does the workflow escalate to manual intervention.

For a payment processing workflow, the primary path might be the preferred settlement network, the secondary path might be an alternative settlement network with slightly higher fees, and the tertiary path might be a batch settlement process with longer settlement windows. Each path involves trade-offs - cost, speed, risk - but all are viable alternatives to manual intervention.
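A minimal sketch of prioritized routing, assuming each path is a callable that either returns a result or raises. The handler names are hypothetical stand-ins for the three paths in the payment example.

```python
class AllPathsExhausted(Exception):
    """Every path failed; the caller should escalate to manual intervention."""


def route_with_fallback(payment, paths):
    """Try (name, handler) pairs in priority order; return the first success."""
    errors = []
    for name, send in paths:
        try:
            return name, send(payment)
        except Exception as exc:
            errors.append(f"{name}: {exc}")
    raise AllPathsExhausted("; ".join(errors))


def preferred_network(payment):
    raise ConnectionError("network unavailable")


def alternative_network(payment):
    return f"settled {payment['id']}"


def batch_settlement(payment):
    return f"queued {payment['id']} for batch"


used, receipt = route_with_fallback(
    {"id": "P-1"},
    [
        ("primary", preferred_network),
        ("secondary", alternative_network),
        ("tertiary", batch_settlement),
    ],
)
```

The collected error messages travel with `AllPathsExhausted`, so a human who receives the escalation can see what was already tried.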

Capability-Based Routing

Rather than pre-defining static fallback paths, capability-based routing dynamically selects paths based on current system capabilities and conditions. The workflow specifies what capabilities it needs (e.g., "settle a payment within 2 hours for less than $100,000") and the routing engine selects from available systems that meet those criteria.

This approach is more flexible than static routing because it adapts automatically as systems come online or go offline. If the primary settlement system goes offline, the routing engine automatically selects an alternative system that meets the capability requirements, without requiring manual reconfiguration.
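The selection step might look like the sketch below. It reads the example requirement as "payment amount under $100,000, settled within 2 hours", and it breaks ties by lowest fee; both the interpretation and the tie-breaker are assumptions, as are all field and system names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SettlementSystem:
    name: str
    online: bool
    max_settlement_hours: float
    max_amount_usd: float
    fee_usd: float


def select_system(systems, amount_usd: float,
                  within_hours: float) -> Optional[SettlementSystem]:
    """Pick the cheapest online system that meets the stated capabilities."""
    candidates = [
        s for s in systems
        if s.online
        and s.max_settlement_hours <= within_hours
        and s.max_amount_usd >= amount_usd
    ]
    return min(candidates, key=lambda s: s.fee_usd, default=None)


systems = [
    SettlementSystem("primary", False, 1, 500_000, 5.0),   # currently offline
    SettlementSystem("alt-a", True, 2, 250_000, 12.0),
    SettlementSystem("alt-b", True, 1, 100_000, 9.0),
    SettlementSystem("batch", True, 24, 1_000_000, 1.0),   # too slow for 2 hours
]
choice = select_system(systems, amount_usd=75_000, within_hours=2)
```

Because the engine filters on current state, taking `primary` offline needs no reconfiguration: the next eligible system is simply selected.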

Risk-Adjusted Routing

Different fallback paths carry different risk profiles. A fallback path might be faster but less reliable, or more reliable but more expensive. Risk-adjusted routing selects paths based on the risk tolerance of the current operation.

A workflow processing a high-value trade might route through the most reliable settlement path even if it is more expensive, while the same workflow processing a low-value trade might route through a faster but slightly less reliable path. The routing decision is adjusted based on the risk profile of the operation.
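One simple way to express this rule in code, with illustrative thresholds: high-value operations take the most reliable path, and lower-value operations take the fastest path that clears a minimum reliability bar. The $1,000,000 cutoff and the 0.99 floor are assumptions, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SettlementPath:
    name: str
    reliability: float       # historical success rate, 0.0 to 1.0
    latency_seconds: float


def choose_path(paths, notional_usd: float,
                high_value_usd: float = 1_000_000,
                min_reliability: float = 0.99) -> SettlementPath:
    if notional_usd >= high_value_usd:
        # High value: reliability outweighs speed and cost.
        return max(paths, key=lambda p: p.reliability)
    # Lower value: fastest path that is still acceptably reliable.
    acceptable = [p for p in paths if p.reliability >= min_reliability] or list(paths)
    return min(acceptable, key=lambda p: p.latency_seconds)


paths = [
    SettlementPath("reliable", reliability=0.9999, latency_seconds=120),
    SettlementPath("fast", reliability=0.995, latency_seconds=5),
]
```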

Degraded-Mode Operations

When all primary paths are unavailable, workflows can shift into degraded mode - operating with reduced functionality but maintaining core capabilities. This is particularly valuable in financial services where complete system unavailability is often not an option.

A trading platform in degraded mode might accept new trade orders but queue them for processing rather than executing them immediately. A settlement system in degraded mode might accept settlement instructions but process them in batch mode rather than real-time. Degraded mode keeps the system operational while acknowledging that full functionality is temporarily unavailable.
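The trading-platform example can be sketched as a gateway that queues orders while degraded and drains the queue in arrival order once full service returns. `OrderGateway` is a hypothetical name, and the in-memory queue is an assumption; a real system would persist queued orders.

```python
from collections import deque


class OrderGateway:
    """Executes orders normally; queues them while in degraded mode."""

    def __init__(self, execute):
        self._execute = execute
        self._pending = deque()
        self.degraded = False

    def submit(self, order) -> str:
        if self.degraded:
            self._pending.append(order)
            return "queued"
        self._execute(order)
        return "executed"

    def restore(self) -> int:
        """Leave degraded mode and process queued orders in arrival order."""
        self.degraded = False
        drained = 0
        while self._pending:
            self._execute(self._pending.popleft())
            drained += 1
        return drained


executed = []
gateway = OrderGateway(executed.append)
first = gateway.submit("order-1")
gateway.degraded = True
second = gateway.submit("order-2")
third = gateway.submit("order-3")
drained = gateway.restore()
```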

Human-in-the-Loop Escalation Design

The most sophisticated adaptive automation still requires human judgment for certain decisions. Designing effective human-in-the-loop escalation patterns is critical to ensuring that humans are engaged at the right time with the right information.

Escalation Tiers and Routing

Escalation patterns typically define multiple tiers, each suited to different types of exceptions. Tier 1 might be the operations team, who handle routine exceptions and can resolve them quickly. Tier 2 might be senior operations staff or business analysts, who handle more complex exceptions. Tier 3 might be management or specialized domain experts, who handle exceptions with significant business or compliance implications.

Routing logic determines which tier an exception is escalated to based on the type of exception, its severity, and its business impact. An exception that is easy to diagnose but requires business judgment might be routed directly to Tier 2, skipping Tier 1. An exception that is routine might be routed to Tier 1 even if it is technically complex, because Tier 1 has pre-defined procedures for handling it.

Context-Rich Escalation Messages

When an exception is escalated to a human, it should arrive with comprehensive context: what operation was being performed, what went wrong, what the system has already tried, what the current state is, and what the recommended next action is. This allows humans to make informed decisions quickly.

Rather than escalating a raw error message like "Connection timeout," escalate with context: "Payment processing workflow for account XYZ encountered a timeout when attempting to route to Settlement Network A at 14:32:15 UTC. The system automatically retried once after a short delay, but the timeout persisted. Settlement Network B is currently available and has processed similar payments successfully in the past 24 hours. Recommended action: approve rerouting to Settlement Network B. Options: Approve / Escalate to Senior Trader / Rollback and Retry."
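A structured record makes it harder to send an escalation with missing context. The sketch below is one possible shape; the `EscalationContext` class and its field names are hypothetical, chosen to mirror the elements listed above.

```python
from dataclasses import dataclass


@dataclass
class EscalationContext:
    operation: str
    failure: str
    attempted: list[str]
    current_state: str
    recommendation: str
    options: list[str]

    def render(self) -> str:
        """Format the context as a short, human-readable message."""
        return "\n".join([
            f"Operation: {self.operation}",
            f"What went wrong: {self.failure}",
            "Already tried: " + "; ".join(self.attempted),
            f"Current state: {self.current_state}",
            f"Recommended action: {self.recommendation}",
            "Options: " + " / ".join(self.options),
        ])


message = EscalationContext(
    operation="Payment processing for account XYZ",
    failure="Timeout routing to Settlement Network A at 14:32:15 UTC",
    attempted=["one automatic retry, timeout persisted"],
    current_state="Settlement Network B is available",
    recommendation="Approve rerouting to Settlement Network B",
    options=["Approve", "Escalate to Senior Trader", "Rollback and Retry"],
).render()
```

Because every field is required, an escalation cannot be constructed without stating what was tried and what is recommended.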

Escalation Thresholds and Severity Levels

Not every exception requires immediate escalation. Escalation thresholds determine the conditions under which exceptions should be escalated to humans. These thresholds are typically based on severity (how bad is the exception), business impact (how much does it matter), and time sensitivity (how urgently does it need to be resolved).

An exception might be classified as low severity if the system has successfully recovered automatically and no manual action is needed. It would be logged for analysis but not escalated. An exception might be classified as medium severity if the system has recovered but the recovery involved fallback paths with higher cost or risk - these are escalated to the operations team for awareness. An exception might be classified as high severity if the system has not been able to recover and manual intervention is required - these are escalated immediately to senior staff.

Escalation Fatigue Prevention

One of the greatest risks in designing human-in-the-loop systems is escalation fatigue - overwhelming humans with so many escalations that they become desensitized and start ignoring them. This is particularly dangerous in financial services where each escalation might represent a genuine problem requiring attention.

Escalation fatigue is prevented by being disciplined about what gets escalated. Exceptions that can be resolved automatically should be resolved automatically and logged, not escalated. Exceptions that represent expected, normal variation should not be escalated even if they are technically anomalous. Only exceptions that genuinely require human judgment or represent real problems should reach a person.

Organizations that implement rigorous escalation discipline see escalation volumes drop by 70-80% compared to organizations that escalate every anomaly, while simultaneously improving resolution quality because humans are focused on exceptions that truly require their expertise.

Compliance and Governance in Adaptive Workflows

Financial services operate in heavily regulated environments where compliance is not optional. Adaptive automation must strengthen compliance posture, not weaken it. This requires embedding governance directly into the adaptive system's decision-making logic.

Audit Trail and Explainability

Every decision made by an adaptive workflow - whether to retry, which fallback path to select, whether to escalate - must be logged and explainable. Regulators need to understand why the system made the decisions it did, particularly when those decisions involve exceptions or deviations from normal procedures.

This requires designing workflows so that the decision logic is transparent and auditable. Rather than using black-box machine learning models to make routing decisions, use interpretable models or explicit decision logic where the reasoning can be explained. When the system selects a fallback path, log the criteria that were considered, the alternatives that were evaluated, and why the selected path was chosen.
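A decision log entry might be captured as a structured record like the one sketched below. The field names and example values are hypothetical; the point is that the criteria, the rejected alternatives, and the reason are recorded together at the moment of the decision.

```python
import json
from datetime import datetime, timezone


def audit_record(workflow_id, decision, criteria, alternatives, reason, now=None):
    """Serialize one routing decision as a JSON audit entry."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return json.dumps(
        {
            "timestamp": timestamp,
            "workflow_id": workflow_id,
            "decision": decision,
            "criteria": criteria,
            "alternatives": alternatives,
            "reason": reason,
        },
        sort_keys=True,
    )


entry = audit_record(
    workflow_id="payment-123",
    decision="route:settlement_network_b",
    criteria={"amount_usd": 75000, "deadline_hours": 2},
    alternatives=[{"path": "settlement_network_a", "rejected_because": "timeout"}],
    reason="only available network meeting the deadline",
    now=datetime(2024, 1, 1, 14, 32, 15, tzinfo=timezone.utc),  # illustrative time
)
```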

Risk Guardrails and Control Limits

Adaptive systems should not adapt in ways that violate risk limits or compliance requirements. Guardrails define the boundaries within which adaptation is permitted. The system can adapt its behavior, retry operations, and select fallback paths - but only within the guardrails defined by risk management and compliance.

For example, a workflow might be permitted to adapt its settlement routing to use alternative settlement networks, but only if those networks meet minimum regulatory requirements. A workflow might be permitted to retry a failed operation, but only if the retry does not violate transaction limits or compliance thresholds. Guardrails ensure that adaptation never leads to compliance violations.
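Guardrails of this kind can be checked before any adaptive action is taken. In the sketch below, the `Guardrails` fields and limits are hypothetical examples; an empty list of violations means the proposed adaptation is allowed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    approved_networks: frozenset
    max_retries: int
    max_transaction_usd: float


def guardrail_violations(guardrails: Guardrails, network: str,
                         retry_count: int, amount_usd: float) -> list[str]:
    """Return the reasons a proposed adaptation is not permitted, if any."""
    violations = []
    if network not in guardrails.approved_networks:
        violations.append(f"network {network!r} is not approved")
    if retry_count > guardrails.max_retries:
        violations.append(f"retry {retry_count} exceeds limit {guardrails.max_retries}")
    if amount_usd > guardrails.max_transaction_usd:
        violations.append(f"amount {amount_usd} exceeds transaction limit")
    return violations


limits = Guardrails(
    approved_networks=frozenset({"network-a", "network-b"}),
    max_retries=2,
    max_transaction_usd=100_000,
)
allowed = guardrail_violations(limits, "network-b", retry_count=1, amount_usd=50_000)
blocked = guardrail_violations(limits, "network-c", retry_count=3, amount_usd=50_000)
```

Returning the reasons, rather than a bare yes or no, lets the same check feed the audit trail.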

Regulatory Reporting and Monitoring

Adaptive workflows generate rich data about system behavior, exceptions, and recovery actions. This data should feed directly into regulatory reporting and compliance monitoring systems. Rather than treating adaptive automation as a black box, use the transparency of adaptive systems to enhance regulatory reporting.

For instance, detailed logs of settlement exceptions and recovery actions can feed into regulatory reports on settlement efficiency and operational risk. Anomaly detection results can feed into fraud detection and anti-money laundering monitoring. The adaptive system becomes not just more efficient, but also a better source of regulatory and compliance insights.

Change Management and Governance

Adaptive systems that learn and adjust their behavior over time require careful change management. Changes to the system's models, decision logic, or thresholds must be reviewed, approved, and tested before being deployed to production. This is particularly critical in financial services where changes can have significant compliance and risk implications.

Implement a formal change management process for adaptive systems that includes: definition of the proposed change, analysis of potential impacts, testing in a controlled environment, approval by risk and compliance, gradual rollout with monitoring, and documentation for audit purposes. This ensures that adaptation remains under control and subject to appropriate governance.

Implementation Roadmap and Best Practices

Deploying adaptive automation in financial services is a significant undertaking. A phased approach that starts with simpler use cases and builds toward more complex ones minimizes risk and maximizes learning.

Phase 1: Foundation - Anomaly Detection and Monitoring

The first phase should focus on establishing robust anomaly detection and monitoring capabilities. This involves selecting key workflows, establishing statistical baselines, deploying monitoring infrastructure, and defining alerting thresholds. The goal is to gain visibility into workflow behavior and anomalies, without yet attempting to automate recovery.

During this phase, all anomalies are escalated to humans for resolution. The focus is on understanding the types of anomalies that occur, how frequently they occur, and how long they take humans to resolve. This data informs the design of automated recovery mechanisms in later phases.

Phase 2: Resilience - Automated Rollback and Fallback Routing

Once anomaly detection is established, the second phase adds automated recovery mechanisms. Start with simple automated rollback for transient failures - if an operation fails, roll back and retry. Add fallback routing for scenarios where primary paths are unavailable. Define escalation patterns for exceptions that cannot be automatically resolved.

During this phase, monitor the effectiveness of automated recovery. Track how many exceptions are resolved automatically versus escalated to humans. Measure the time savings and cost reduction achieved through automation. Use this data to refine recovery logic and escalation patterns.

Phase 3: Intelligence - Adaptive Decision-Making

Once automated recovery mechanisms are working reliably, the third phase adds adaptive intelligence. Implement capability-based routing that dynamically selects optimal paths based on current conditions. Add risk-adjusted routing that considers the risk profile of each operation. Implement learning loops that allow the system to improve its decision-making over time based on outcomes.

During this phase, focus on safety and governance. Ensure that adaptive decision-making remains within defined guardrails and that all decisions are auditable and explainable. Implement formal change management for model updates and threshold adjustments.

Phase 4: Optimization - Continuous Improvement

The final phase focuses on continuous optimization. Use historical data to identify patterns in exceptions and recovery actions. Optimize escalation thresholds to minimize both false positives (unnecessary escalations) and false negatives (missed escalations). Refine anomaly detection models as the system learns more about normal behavior. Implement feedback loops that allow humans to improve system performance by providing corrections and insights.

Best Practices Throughout Implementation

Several best practices should be followed throughout the implementation journey. First, start with high-impact, lower-risk use cases. Choose workflows that have clear, measurable benefits from automation but are not critical to core business operations. This allows you to build expertise and confidence before automating more critical workflows.

Second, maintain strong governance and oversight throughout. Establish a governance committee that reviews changes to adaptive systems, approves escalation thresholds, and monitors compliance. Ensure that business stakeholders, risk management, and compliance are represented on this committee.

Third, invest in monitoring and observability. Adaptive systems are more complex than traditional systems and require more sophisticated monitoring. Implement comprehensive logging, metrics collection, and dashboarding to provide visibility into system behavior. This visibility is essential for both operational management and regulatory compliance.

Fourth, prioritize explainability and auditability. Design systems so that decisions can be explained and justified. Avoid black-box approaches that cannot be audited or explained to regulators. The goal is not just to automate, but to automate in a way that is transparent and defensible.

Finally, build a culture of continuous improvement. Treat the implementation of adaptive automation as an ongoing journey, not a one-time project. Regularly review performance metrics, gather feedback from operations teams, and identify opportunities for improvement. Use this feedback to refine models, adjust thresholds, and enhance system capabilities.

Measuring Success: KPIs and ROI Tracking

Adaptive automation initiatives must be measured rigorously to demonstrate value and guide continuous improvement. Key performance indicators should span operational efficiency, compliance, and cost reduction.

Operational Efficiency Metrics

Mean Time to Resolution (MTTR) measures how long it takes to resolve exceptions. Adaptive automation should reduce MTTR by enabling faster automated recovery and more efficient human escalation. Track MTTR for different exception types to identify where automation is most effective.

Automation Rate measures the percentage of exceptions that are resolved automatically without human intervention. Higher automation rates indicate more effective self-healing capabilities. However, this should not be maximized at the expense of accuracy - a 95% automation rate with 98% accuracy is better than a 99% automation rate with 90% accuracy.
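The comparison can be made concrete with a little arithmetic, assuming "accuracy" means the share of automatically resolved exceptions that were resolved correctly. Under that assumption, 95% automation at 98% accuracy resolves 93.1% of all exceptions correctly and 1.9% incorrectly, while 99% automation at 90% accuracy resolves 89.1% correctly and 9.9% incorrectly. A small sketch of the calculation:

```python
def outcome_shares(automation_rate: float, accuracy: float) -> tuple[float, float, float]:
    """Split all exceptions into (auto-correct, auto-wrong, manual) shares."""
    auto_correct = automation_rate * accuracy
    auto_wrong = automation_rate * (1 - accuracy)
    manual = 1 - automation_rate
    return auto_correct, auto_wrong, manual


careful = outcome_shares(0.95, 0.98)     # roughly (0.931, 0.019, 0.05)
aggressive = outcome_shares(0.99, 0.90)  # roughly (0.891, 0.099, 0.01)
```

The more aggressive configuration saves four percentage points of manual handling but produces about five times as many incorrect automated resolutions.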

Workflow Throughput measures the number of operations processed per unit time. Adaptive automation that reduces exceptions and manual interventions should increase throughput. Measure throughput for each workflow and track changes over time as adaptive capabilities are enhanced.

Compliance and Risk Metrics

Compliance Exception Rate measures the proportion of operations that violated compliance requirements. Adaptive automation should reduce this rate by catching and preventing compliance violations through built-in guardrails and anomaly detection. Track this metric carefully and investigate any increases.

Audit Finding Resolution Time measures how quickly audit findings related to workflow exceptions are resolved. Adaptive automation that generates comprehensive audit trails and explainable decisions should reduce the time needed to address audit findings.

Regulatory Escalation Incidents measures the number of incidents that require escalation to regulators. Adaptive automation should reduce this metric by preventing issues from occurring in the first place and enabling rapid resolution of issues that do occur.

Cost and Financial Metrics

Cost per Transaction measures the total cost of processing each operation, including labor, infrastructure, and fallback costs. Adaptive automation should reduce this metric by reducing manual labor and optimizing routing decisions. Track this metric by workflow type to identify where automation is most cost-effective.

Labor Cost Reduction measures the reduction in labor hours required to operate workflows. Calculate the number of full-time equivalents (FTEs) that can be redeployed or eliminated through adaptive automation. This is often one of the largest sources of ROI.

Avoided Cost from Prevented Failures measures the cost of failures that would have occurred without adaptive automation. This includes failed transactions, regulatory fines, customer compensation, and reputational damage. Quantifying avoided costs requires careful analysis but can represent significant value.
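
As a rough illustration of the cost metrics above, the Python sketch below computes cost per transaction from the three cost components named in the text and estimates avoided cost from a change in failure rate. The avoided-cost formula (prevented failures multiplied by an average cost per failure) is a simplifying assumption for illustration, not a method prescribed here, and all names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class WorkflowCosts:
    """Cost inputs for one workflow over a reporting period (hypothetical schema)."""
    workflow: str
    transactions: int
    labor_cost: float
    infrastructure_cost: float
    fallback_cost: float


def cost_per_transaction(costs):
    """Total cost (labor + infrastructure + fallback) divided by transaction volume."""
    if costs.transactions <= 0:
        raise ValueError("transactions must be positive")
    total = costs.labor_cost + costs.infrastructure_cost + costs.fallback_cost
    return total / costs.transactions


def avoided_failure_cost(transactions, baseline_failure_rate,
                         current_failure_rate, avg_cost_per_failure):
    """Assumed estimate: prevented failures times average cost per failure.

    Returns 0.0 if the failure rate did not improve.
    """
    prevented = transactions * (baseline_failure_rate - current_failure_rate)
    return max(prevented, 0.0) * avg_cost_per_failure


# Made-up figures for illustration only.
settlement = WorkflowCosts("settlement", 10_000, 6_000.0, 3_000.0, 1_000.0)
print("cost per transaction:", cost_per_transaction(settlement))
print("avoided cost:", avoided_failure_cost(10_000, 0.02, 0.01, 250.0))
```

In practice the average cost per failure is the hardest input to defend, since it blends fines, customer compensation, and reputational damage, so it is worth documenting how that figure was derived.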

Establishing Baseline and Tracking Progress

Before implementing adaptive automation, establish baseline metrics for current operations. Measure MTTR, automation rate, throughput, compliance exception rate, and cost per transaction for existing workflows. These baselines provide the reference point for measuring improvement.

After implementation, track these metrics monthly or quarterly. Establish targets for improvement and monitor progress toward those targets. Share results with stakeholders to demonstrate value and build support for continued investment. Use results to identify areas where additional investment might yield the highest returns.
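
One way to track progress against the baseline is to express each metric as a percentage improvement and compare it with its target. The sketch below assumes hypothetical metric names, baseline values, and targets; the only logic it encodes is that some metrics improve when they fall (MTTR, compliance exception rate, cost per transaction) and others when they rise (automation rate, throughput).

```python
# Metrics for which a lower value is an improvement (hypothetical names).
LOWER_IS_BETTER = {"mttr_minutes", "compliance_exception_rate", "cost_per_transaction"}


def improvement_pct(metric, baseline, current):
    """Percentage improvement over baseline; positive always means 'better'."""
    if baseline == 0:
        raise ValueError(f"baseline for {metric} must be non-zero")
    change = (current - baseline) / baseline * 100
    return -change if metric in LOWER_IS_BETTER else change


def progress_report(baseline, current, targets):
    """Compare current metrics with the baseline and with improvement targets (in %)."""
    report = {}
    for metric, base in baseline.items():
        gain = improvement_pct(metric, base, current[metric])
        report[metric] = {
            "improvement_pct": round(gain, 1),
            "target_met": gain >= targets[metric],
        }
    return report


# Made-up values for illustration only, not measured results.
baseline = {"mttr_minutes": 40.0, "automation_rate": 0.50, "throughput_per_hour": 1000.0,
            "compliance_exception_rate": 0.020, "cost_per_transaction": 2.00}
current = {"mttr_minutes": 30.0, "automation_rate": 0.60, "throughput_per_hour": 1100.0,
           "compliance_exception_rate": 0.015, "cost_per_transaction": 1.80}
targets = {"mttr_minutes": 20.0, "automation_rate": 15.0, "throughput_per_hour": 15.0,
           "compliance_exception_rate": 20.0, "cost_per_transaction": 5.0}

for metric, result in progress_report(baseline, current, targets).items():
    print(metric, result)
```

Running the same report each month or quarter against a fixed baseline makes it straightforward to show stakeholders which workflows are meeting their targets and which need further investment.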

Conclusion: Transforming Financial Operations Through Adaptive Automation

The financial services industry stands at an inflection point. Legacy workflows built for stability are increasingly inadequate for an environment characterized by complexity, speed, and continuous change. Regulatory requirements grow more stringent. Competitive pressure intensifies. Customer expectations rise. In this context, traditional automation - rigid, rule-based, brittle - is not enough.

Adaptive automation offers a fundamentally different approach. Rather than treating workflows as fixed sequences of steps, adaptive automation treats them as intelligent systems that learn from experience, detect anomalies, self-correct when possible, and escalate intelligently when human judgment is required. This approach is not just more efficient - it is more resilient, more compliant, and more aligned with how sophisticated organizations actually need to operate.

The path to adaptive automation requires investment in monitoring and anomaly detection, in designing self-healing mechanisms, in establishing governance and guardrails, and in building a culture of continuous improvement. But the returns are substantial: reduced operational costs, faster exception resolution, improved compliance posture, and the ability to scale operations without proportional increases in headcount.

At A.I. PRIME, we specialize in helping financial services organizations design and deploy adaptive automation systems that deliver these benefits while maintaining the control and compliance rigor that regulated environments demand. Our approach combines deep domain expertise in financial services with cutting-edge capabilities in autonomous agents, workflow orchestration, and governed AI. We work with your team to understand your specific workflows, design adaptive systems tailored to your needs, and implement them with the governance and oversight that financial services requires.

Whether you are struggling with manual exceptions in your settlement workflows, facing challenges with compliance monitoring, or looking to scale operations more efficiently, adaptive automation offers a path forward. Our workflow design and automation blueprinting services help you identify the highest-impact use cases for adaptive automation, design systems that deliver measurable ROI, and implement them with the rigor and governance that financial services demands. We also provide ongoing governance integration and continuous enablement support to ensure that your adaptive systems continue to deliver value as your business evolves.

The future of financial services operations is adaptive, intelligent, and self-healing. Organizations that embrace these capabilities will outcompete those that cling to rigid, manual processes. Let us help you make that transition. Contact us today to discuss how adaptive automation can transform your financial operations.
