A.I. PRIME - Article

Balancing Autonomy and Compliance: Best Practices for Enterprise AI Governance

Discover how to balance AI autonomy with compliance using governed AI guardrails and enterprise governance frameworks.

Enterprise leaders today face a paradox: accelerate AI adoption to stay competitive, yet maintain iron-clad governance to satisfy auditors, regulators, and risk teams. The stakes are high. A single uncontrolled AI decision can expose your organization to regulatory penalties, reputational damage, and operational chaos. Yet overly restrictive governance stifles innovation and leaves your enterprise lagging behind competitors who move faster. This tension between governed AI guardrails and operational autonomy is no longer a nice-to-have conversation - it is the defining challenge of enterprise AI deployment in 2025 and beyond. The organizations winning this battle are those that treat governance not as a constraint, but as an enabler of responsible, scalable AI that drives measurable business outcomes while keeping risk in check.

Understanding the Enterprise AI Governance Imperative

The regulatory landscape for artificial intelligence has shifted dramatically. From the EU AI Act to the NIST AI Risk Management Framework and evolving global standards, governments and standards bodies are raising the bar for safety, transparency, and accountability. For enterprises, this means governance is no longer optional - it is a prerequisite for responsible AI deployment. Yet governance carries real operational costs: slower decision-making, reduced AI autonomy, and friction in workflows that were designed to be seamless and fast. Learn more in our post on Governed AI Guardrails: Implementing Compliant Oversight for Agentic Systems.

The core challenge lies in recognizing that governance and autonomy are not opposing forces. Instead, they exist on a spectrum, and the most mature enterprises are learning to calibrate this balance based on risk appetite, use case complexity, and regulatory context. A sales forecasting model operating on historical data presents vastly different governance requirements than an autonomous agent making credit decisions or adjusting healthcare treatment protocols. The key is moving beyond one-size-fits-all governance to a risk-stratified, policy-driven approach that grants autonomy where it is safe and justified, while maintaining tight controls where the stakes are highest.

Consider the operational impact: enterprises that implement governance poorly often see AI projects stall in pilot phases, unable to transition to production because compliance requirements were not built in from the start. Others deploy AI systems that operate freely until a breach or regulatory inquiry forces a painful retrofit of controls. The winners are those who embed governance into the architecture itself - making compliance checks automated, transparent, and integrated into the AI decision-making workflow rather than bolted on afterward.

Governance is not a speed bump on the path to AI value - it is the foundation that enables sustainable, trustworthy AI at scale. Organizations that view compliance as a design principle rather than a constraint will outpace those that treat it as an afterthought.

This shift in mindset is critical. Enterprises that deploy governed AI guardrails from inception are better positioned for faster time-to-production, fewer compliance violations, and greater stakeholder confidence - from board members to end users. The upfront investment in governance architecture pays off across the entire AI lifecycle.

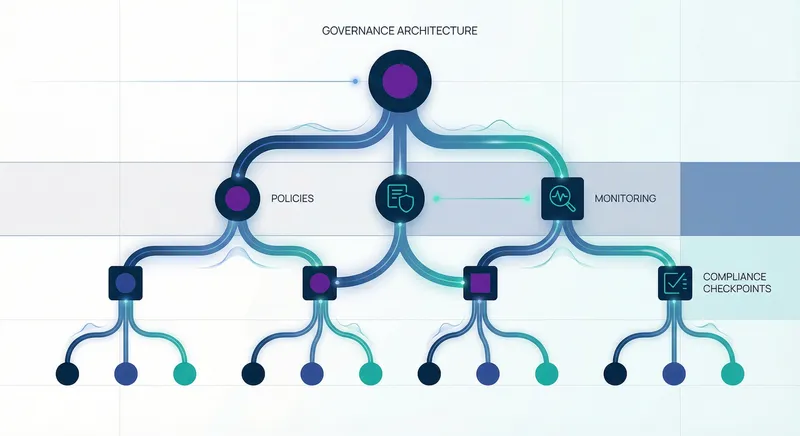

Building a Policy Taxonomy for Governed AI Systems

The foundation of effective AI governance is a well-structured policy taxonomy - a clear, hierarchical framework that defines what AI systems can and cannot do. This is not a static document; it is a living system that evolves as your AI capabilities mature, regulatory requirements shift, and business needs change. A robust policy taxonomy serves multiple audiences: compliance teams need to audit against it, engineers need to implement it, business leaders need to understand the trade-offs, and auditors need to verify adherence. Learn more in our post on Building Autonomous AI Agents for Customer Service Automation.

Start by categorizing your AI use cases by risk level. High-risk decisions - those affecting customer eligibility, pricing, resource allocation, or safety - require stricter governance than low-risk analytical tasks. A common framework divides AI systems into three tiers. Tier 1 encompasses autonomous, high-impact decisions where AI operates with minimal human oversight. These demand the most rigorous controls: explainability requirements, continuous performance monitoring, human appeal mechanisms, and regular audits. Tier 2 includes AI systems that inform human decisions or operate with guardrails and periodic human review. These require moderate controls: clear documentation, performance thresholds, and escalation paths. Tier 3 covers low-impact analytical or exploratory AI with minimal business consequence. These can operate with lighter governance: basic logging, periodic review, and self-service controls.
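The three tiers can be expressed as a small classifier. The sketch below is illustrative only: the `UseCase` fields and the rules inside `assign_tier` are assumptions made for this example, not an industry standard, and a real taxonomy would weigh more factors than two booleans.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    HIGH = 1      # autonomous, high-impact decisions
    MODERATE = 2  # informs humans or runs with periodic review
    LOW = 3       # analytical or exploratory, minimal consequence


@dataclass(frozen=True)
class UseCase:
    name: str
    autonomous: bool   # acts without a human in the loop
    high_impact: bool  # affects eligibility, pricing, allocation, or safety


def assign_tier(use_case: UseCase) -> Tier:
    """Map a use case to a governance tier (hypothetical rules)."""
    if use_case.high_impact and use_case.autonomous:
        return Tier.HIGH
    if use_case.high_impact or use_case.autonomous:
        return Tier.MODERATE
    return Tier.LOW


# Controls per tier, taken from the tier descriptions above.
REQUIRED_CONTROLS = {
    Tier.HIGH: ["explainability", "continuous monitoring", "human appeal", "regular audits"],
    Tier.MODERATE: ["documentation", "performance thresholds", "escalation paths"],
    Tier.LOW: ["basic logging", "periodic review", "self-service controls"],
}
```

Keeping the tier-to-controls mapping in data rather than in code paths makes it easier for compliance teams to review and version.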

Within each tier, define specific policy domains. Data governance policies specify what data can be used to train or operate AI systems - addressing bias, privacy, and security concerns. Model governance policies define acceptable accuracy thresholds, drift detection triggers, and retraining schedules. Operational policies govern how AI systems interact with other systems, what actions they can take, and what escalation paths exist. Audit and transparency policies require logging, explainability, and regular performance reviews. Human oversight policies define when human review is mandatory, what constitutes sufficient review, and how conflicts are resolved.

A practical example: a financial institution deploying an AI system for loan underwriting would establish policies such as: "Model accuracy must exceed 92% on holdout test data; any drift below 90% triggers retraining. All loan denials must include human-readable explanations. Decisions affecting loans over $500,000 require human review before final approval. Monthly bias audits must be conducted across demographic groups. Training data must span at least 18 months of history to reflect stable economic conditions." These policies translate business risk tolerance into concrete, implementable rules that the AI system must follow.
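Several clauses of that example policy translate directly into executable checks. The following is a minimal sketch, not a production rule set: the function and field names are hypothetical, and the `below_target` status for accuracy between 90% and 92% is an assumption, since the policy text does not say what happens in that band.

```python
from dataclasses import dataclass

# Thresholds copied from the example policy above.
MIN_ACCURACY = 0.92
RETRAIN_BELOW = 0.90
HUMAN_REVIEW_ABOVE_USD = 500_000


@dataclass(frozen=True)
class LoanDecision:
    amount_usd: float
    approved: bool
    explanation: str = ""


def model_status(holdout_accuracy: float) -> str:
    """Classify a model against the accuracy clauses of the policy."""
    if holdout_accuracy < RETRAIN_BELOW:
        return "retrain"
    if holdout_accuracy <= MIN_ACCURACY:
        return "below_target"  # assumed status; the policy is silent here
    return "ok"


def decision_violations(decision: LoanDecision, human_reviewed: bool) -> list[str]:
    """Return the policy clauses that a single decision violates."""
    violations = []
    if not decision.approved and not decision.explanation.strip():
        violations.append("denial_without_explanation")
    if decision.amount_usd > HUMAN_REVIEW_ABOVE_USD and not human_reviewed:
        violations.append("large_loan_without_human_review")
    return violations
```

Returning a list of named violations, rather than a single pass/fail flag, gives auditors a record of exactly which clause was breached.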

A policy taxonomy is the bridge between business strategy and technical implementation. It translates risk appetite into actionable rules that govern AI behavior without requiring constant human intervention.

The taxonomy should be documented in a centralized repository accessible to all stakeholders. Use clear language that engineers can implement and compliance teams can audit. Avoid vague terms like "reasonable" or "appropriate" - instead, specify thresholds, metrics, and decision logic. Version control your policies and maintain a changelog so you can track how governance evolves over time. This documentation becomes invaluable during audits, regulatory inquiries, and post-incident reviews.

Automating Compliance Checks and Guardrails

Policy is only valuable if it is enforced. Manual compliance reviews are slow, inconsistent, and prone to error - they do not scale as your AI footprint grows. The next level of governance maturity is automated compliance checking, where policies are encoded into the AI system itself and checked continuously, not just during periodic audits. Learn more in our post on Security and Compliance for Agentic AI Automations.

Automated guardrails operate at multiple levels. At the data intake level, guardrails validate that training data meets policy requirements: checking for prohibited data sources, verifying data freshness, detecting and flagging potential bias indicators, and ensuring proper data lineage. At the model development level, guardrails enforce standards: requiring minimum accuracy thresholds before a model can advance to testing, checking for explainability compliance, validating that fairness metrics meet policy targets, and flagging models that show excessive drift from previous versions. At the deployment level, guardrails gate production release: confirming that all required documentation is complete, verifying that monitoring infrastructure is in place, ensuring that rollback procedures are tested, and validating that human oversight mechanisms are active.

At the runtime level - where the AI system is actively making decisions - guardrails become the most critical. These include confidence thresholds that route low-confidence decisions to human review, rate limiters that prevent the system from making too many risky decisions in short windows, decision boundary checks that flag unusual patterns, and real-time bias monitoring that alerts teams if model behavior diverges from expected fairness metrics. For example, an autonomous pricing system might have a guardrail that routes any price adjustment exceeding 15% to human review, or that flags if the system is consistently offering lower prices to certain customer segments.
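Two of these runtime guardrails are simple enough to sketch. The 15% limit comes from the pricing example; the confidence floor, the class and function names, and the sliding-window design are assumptions for illustration. The code also assumes `old_price` is positive.

```python
from collections import deque

MAX_AUTO_CHANGE = 0.15  # from the pricing example above
MIN_CONFIDENCE = 0.80   # assumed value


def route_price_change(old_price: float, new_price: float, confidence: float) -> str:
    """Return 'auto_apply' or 'human_review' for a proposed price change."""
    relative_change = abs(new_price - old_price) / old_price
    if relative_change > MAX_AUTO_CHANGE or confidence < MIN_CONFIDENCE:
        return "human_review"
    return "auto_apply"


class SlidingWindowLimiter:
    """Allow at most `limit` events within any `window_seconds` span."""

    def __init__(self, limit: int, window_seconds: float) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self._events: deque[float] = deque()

    def allow(self, now: float) -> bool:
        # Drop events that have aged out of the window.
        while self._events and now - self._events[0] >= self.window_seconds:
            self._events.popleft()
        if len(self._events) >= self.limit:
            return False
        self._events.append(now)
        return True
```

Passing `now` in explicitly, instead of reading the clock inside `allow`, keeps the limiter deterministic and easy to test.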

Implementation requires technical infrastructure. A compliance engine - a dedicated system that evaluates AI decisions against policy rules - sits between the AI system and its downstream actions. Before the AI system executes a decision, the compliance engine checks: Is this decision within the approved decision space? Does it violate any hard constraints? Does it trigger a warning that requires human acknowledgment? Has this decision pattern been seen before, or is it anomalous? Only after passing these checks does the decision proceed. If it fails, the system logs the violation, alerts relevant stakeholders, and escalates according to policy.
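A compliance engine of this kind can be sketched in a few dozen lines. Everything named here (`Rule`, `Verdict`, `ComplianceEngine`, and the two sample rules) is hypothetical and chosen for illustration; a real engine would also handle alerting, escalation, and durable log storage.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Verdict(Enum):
    ALLOW = "allow"
    WARN = "warn"    # proceed only after human acknowledgment
    BLOCK = "block"  # hard constraint violated


@dataclass(frozen=True)
class Rule:
    name: str
    violated: Callable[[dict], bool]
    on_violation: Verdict


@dataclass
class ComplianceEngine:
    rules: list[Rule]
    audit_log: list[dict] = field(default_factory=list)

    def evaluate(self, decision: dict) -> Verdict:
        """Check a decision against every rule and log the outcome."""
        verdict = Verdict.ALLOW
        failed = []
        for rule in self.rules:
            if rule.violated(decision):
                failed.append(rule.name)
                if rule.on_violation is Verdict.BLOCK:
                    verdict = Verdict.BLOCK
                elif verdict is Verdict.ALLOW:
                    verdict = Verdict.WARN
        self.audit_log.append(
            {"decision": decision, "failed": failed, "verdict": verdict.value}
        )
        return verdict


# Sample rules with made-up limits.
engine = ComplianceEngine(rules=[
    Rule("discount_cap", lambda d: d["discount"] > 0.30, Verdict.BLOCK),
    Rule("unusual_region", lambda d: d["region"] not in {"EU", "US"}, Verdict.WARN),
])
```

The engine evaluates every rule even after a blocking violation, so the audit log captures the full set of failed checks rather than only the first.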

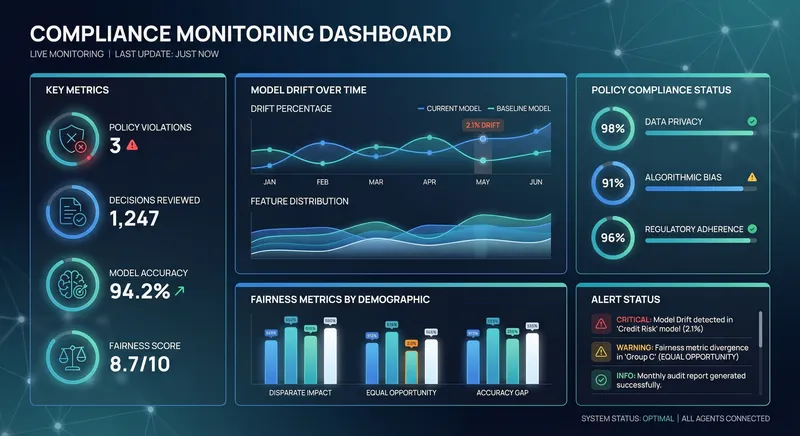

Automated monitoring is equally critical. Continuous compliance dashboards track key metrics: percentage of decisions that required human review, types and frequency of policy violations, model performance drift, fairness metrics by demographic group, and audit readiness status. These dashboards should be real-time or near-real-time, updated as decisions flow through the system. They serve multiple audiences: compliance teams use them to identify systemic issues, business leaders use them to understand AI system behavior and trust, and engineers use them to detect when models need retraining or guardrails need adjustment.

Automated guardrails transform compliance from a periodic checkbox exercise into a continuous, embedded practice. They enable AI systems to operate autonomously while maintaining real-time visibility into policy adherence.

The technical implementation varies by platform and use case, but the principle is consistent: encode policies as executable rules, evaluate them continuously, log all decisions and policy checks, and surface violations through alerting mechanisms. This approach scales far better than manual review. A team of three compliance specialists can manually review perhaps 100 AI decisions per day. An automated system can evaluate millions of decisions per day and applies each encoded rule the same way every time.

Implementing Role-Based Access and Control Models

Governance is not just about constraining AI behavior - it is also about controlling who can modify, deploy, or override AI systems. Role-based access control (RBAC) ensures that only authorized individuals can make changes to governed AI systems, and that changes are auditable and traceable. This is critical for regulatory compliance and operational security.

Define clear roles aligned to your organizational structure and risk tolerance. A typical model includes: AI System Owners who have overall responsibility for an AI system and can approve deployments; Data Scientists who develop and train models but cannot deploy to production without approval; Compliance Officers who review policies and audit adherence; System Administrators who manage infrastructure and can grant access; Business Analysts who configure decision logic within guardrails but cannot modify core policies; and Auditors who have read-only access to all systems for verification purposes.

Each role should have a clearly defined set of permissions. A Data Scientist might have permission to: create new models in development environments, run experiments, access training data, and request production deployment. They would NOT have permission to: deploy models directly to production, modify guardrails, access customer data beyond what is needed for their work, or override compliance checks. A System Owner might have permission to: approve model deployments, modify guardrails within policy constraints, override low-risk compliance checks in emergency situations (with logging), and authorize access for other team members. They would NOT have permission to: bypass audit logging, modify core policies without compliance review, or grant access to restricted data sources.
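A permission model like this reduces to a deny-by-default lookup. The role and action names below are hypothetical labels for the permissions described above; in practice they would live in your IAM system rather than in application code.

```python
# Hypothetical role and action names based on the permissions described above.
ROLE_PERMISSIONS: dict[str, frozenset[str]] = {
    "data_scientist": frozenset({
        "create_dev_model", "run_experiment",
        "read_training_data", "request_deployment",
    }),
    "system_owner": frozenset({
        "approve_deployment", "modify_guardrail",
        "override_low_risk_check", "grant_access",
    }),
    "auditor": frozenset({"read_all"}),
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are refused."""
    return action in ROLE_PERMISSIONS.get(role, frozenset())
```

Listing only what each role may do, and refusing everything else, means a forgotten entry fails closed instead of open.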

Implement these controls through technical systems. Most modern AI platforms support RBAC through identity and access management (IAM) systems integrated with your enterprise directory (Active Directory, Okta, etc.). Ensure that access is granular - team members should only see and access the specific AI systems and data relevant to their role. Use multi-factor authentication for sensitive operations like production deployments or guardrail modifications. Implement approval workflows where high-risk changes require multiple sign-offs - for example, deploying a new model to production might require approval from a Data Science Lead, a Compliance Officer, and a System Owner, with each approval logged and timestamped.

Separation of duties is a critical principle. The person who develops a model should not be the same person who approves its deployment. The person who modifies guardrails should not be the person who monitors compliance. This prevents individual actors from bypassing controls and ensures that decisions are reviewed by independent parties. In smaller teams, this can be challenging, but it is worth the organizational effort to maintain.
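Multi-party approval and separation of duties can be checked together before a deployment proceeds. This is a sketch under stated assumptions: the role names mirror the example approval chain, and the requirement that each sign-off come from a different person is our own strict reading rather than something every organization will adopt.

```python
REQUIRED_APPROVER_ROLES = frozenset(
    {"data_science_lead", "compliance_officer", "system_owner"}
)


def can_deploy(author: str, approvals: dict[str, str]) -> bool:
    """Check sign-offs for a production deployment.

    `approvals` maps an approver role to the user id that signed off.
    """
    if not REQUIRED_APPROVER_ROLES.issubset(approvals):
        return False  # at least one required sign-off is missing
    approvers = [approvals[role] for role in REQUIRED_APPROVER_ROLES]
    # Separation of duties: the author may not approve their own model,
    # and (assumed here) one person may not sign off in two roles.
    return author not in approvers and len(set(approvers)) == len(approvers)
```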

Audit trails are essential. Every action taken on a governed AI system should be logged: who accessed it, when, what changes they made, and what the system did in response. These logs should be immutable (tamper-proof) and retained for the duration required by your industry and regulatory environment. They become invaluable during compliance reviews, incident investigations, and legal disputes. Tools like centralized logging systems (ELK stack, Splunk, etc.) can aggregate logs from multiple AI systems and make them searchable and analyzable.
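One common way to make a log tamper-evident is to chain entries by hash, so that altering any record invalidates every hash after it. The sketch below uses Python's standard `hashlib` and `json` modules; the `AuditLog` class and its fields are hypothetical. Note that hash chaining detects tampering but does not prevent it, so it complements write-once storage rather than replacing it.

```python
import hashlib
import json


def _digest(prev_hash: str, entry: dict) -> str:
    """Hash an entry together with the hash of the entry before it."""
    payload = prev_hash + json.dumps(entry, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    GENESIS = "0" * 64  # placeholder hash for the first entry

    def __init__(self) -> None:
        self._records: list[tuple[dict, str]] = []

    def append(self, actor: str, action: str, timestamp: str) -> None:
        entry = {"actor": actor, "action": action, "timestamp": timestamp}
        prev = self._records[-1][1] if self._records else self.GENESIS
        self._records.append((entry, _digest(prev, entry)))

    def verify(self) -> bool:
        """Recompute the chain; any edited entry breaks it."""
        prev = self.GENESIS
        for entry, stored in self._records:
            if _digest(prev, entry) != stored:
                return False
            prev = stored
        return True
```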

Regular access reviews ensure that permissions remain appropriate as roles change. Quarterly, review who has access to each AI system and whether that access is still justified. Remove access for team members who have changed roles or left the organization. This is particularly important for high-risk systems where inappropriate access could lead to policy violations or data breaches.

Role-based controls are not constraints on innovation - they are the infrastructure that allows multiple teams to work on AI systems simultaneously while maintaining accountability and preventing accidental (or intentional) policy violations.

Continuous Monitoring and Adaptive Governance

Governance is not a one-time implementation - it is an ongoing practice that evolves as your AI systems learn, your business needs change, and the regulatory landscape shifts. Continuous monitoring provides the visibility needed to detect when AI systems are drifting from expected behavior and when policies need adjustment.

Establish monitoring baselines during the initial deployment of an AI system. Measure baseline performance: accuracy, fairness metrics, decision latency, and other relevant KPIs. Measure baseline behavior: what types of decisions does the system make, how often does it escalate to humans, what is the distribution of decisions across different customer segments or scenarios. Once baselines are established, continuous monitoring tracks deviations. If model accuracy drops below 92%, this triggers investigation and potential retraining. If the system is escalating more than 5% of decisions to humans (when the baseline was 2%), this signals that guardrails may be too conservative or that the system needs updating. If fairness metrics show that the system is making different decisions for similar customers based on protected characteristics, this is a red flag requiring immediate remediation.
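These deviation checks can be written as one function that returns named findings. The 92% accuracy floor and 5% escalation ceiling come from the examples above. The fairness check is an assumption: it uses the gap in approval rates between groups, which is only a crude proxy for the "similar customers, different decisions" test described in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    min_accuracy: float = 0.92         # from the text
    max_escalation_rate: float = 0.05  # from the text (baseline was 2%)
    max_approval_gap: float = 0.05     # assumed fairness tolerance


def check_metrics(
    accuracy: float,
    escalation_rate: float,
    approval_rates: dict[str, float],
    t: Thresholds = Thresholds(),
) -> list[str]:
    """Return the names of monitoring checks that breached a threshold."""
    findings = []
    if accuracy < t.min_accuracy:
        findings.append("accuracy_below_threshold")
    if escalation_rate > t.max_escalation_rate:
        findings.append("escalation_rate_high")
    if approval_rates:
        gap = max(approval_rates.values()) - min(approval_rates.values())
        if gap > t.max_approval_gap:
            findings.append("fairness_gap")
    return findings
```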

Implement monitoring across multiple dimensions. Performance monitoring tracks whether the AI system is still achieving its intended outcomes. Fairness monitoring checks for bias across demographic groups, geographic regions, or other relevant segments. Drift monitoring detects when the patterns in live data diverge from training data, which can cause model performance to degrade. Security monitoring looks for unusual access patterns or attempts to manipulate the system. Compliance monitoring tracks whether the system is adhering to policies and regulations. Business monitoring measures the actual business impact: is the AI system driving the expected ROI, reducing costs, or improving customer satisfaction as intended?

Use automated alerting to notify relevant teams when monitoring metrics cross thresholds. A Data Science team should be alerted when model accuracy drifts. A Compliance Officer should be alerted when policy violations spike. A Business leader should be alerted when the AI system stops delivering expected ROI. Different stakeholders need different alerts - engineers care about technical metrics, business leaders care about outcomes, compliance cares about policy adherence. Configure alerts with appropriate severity levels: a minor drift in accuracy might be an informational alert, while a sudden spike in bias would be critical.
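Routing and severity can be kept in a small table so that changing who gets paged does not require a code change. All metric names, team names, and cutoffs below are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    team: str
    severity: str
    metric: str


# Hypothetical routing table: which team owns which signal.
ROUTES = {
    "accuracy_drift": "data_science",
    "policy_violation_spike": "compliance",
    "bias_spike": "compliance",
    "roi_shortfall": "business",
}
ALWAYS_CRITICAL = {"bias_spike"}


def build_alert(metric: str, deviation: float) -> Alert:
    """Build an alert; `deviation` is relative distance from baseline (0.03 = 3%)."""
    team = ROUTES.get(metric, "governance_board")  # assumed fallback owner
    if metric in ALWAYS_CRITICAL or deviation >= 0.20:
        severity = "critical"
    elif deviation >= 0.05:
        severity = "warning"
    else:
        severity = "info"
    return Alert(team=team, severity=severity, metric=metric)
```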

Establish a governance review cadence. Monthly reviews should examine monitoring dashboards and discuss any anomalies or trends. Quarterly reviews should assess whether policies remain appropriate given new data, regulatory changes, or business evolution. Annual reviews should conduct a comprehensive governance audit: are all systems compliant? Have any new systems been deployed without proper governance? Have policies been updated to reflect lessons learned? This cadence ensures that governance stays current and effective.

Use monitoring data to drive policy refinement. If you discover that a guardrail is triggering too frequently, investigate whether the guardrail is too conservative or whether the AI system needs retraining. If you find that certain policy violations are common but low-risk, consider whether the policy should be adjusted. If you see patterns in how humans override AI decisions, this might indicate that the system needs better training or that the policy should be revised. Governance should be data-driven - policies should evolve based on evidence about what actually works in your environment.

Adaptive governance goes further - it means that guardrails and policies can adjust automatically based on monitored conditions. For example, if fairness monitoring detects that the system is showing bias against a demographic group, the system could automatically tighten guardrails to require human review for decisions affecting that group. If performance monitoring shows that accuracy is declining, the system could automatically raise the confidence threshold below which decisions are escalated, routing more of them to humans until the model is retrained. This adaptive approach maintains safety and compliance while allowing the system to continue operating and learning.
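Both adaptive behaviors can be sketched as pure functions. The sketch reads "tightening" as raising the confidence level below which a decision goes to a human. The step size, ceiling, and gap tolerance are assumed values, and the code deliberately only tightens: loosening a guardrail again is left to a human decision.

```python
def tighten_if_degraded(
    current_threshold: float,
    accuracy: float,
    target: float = 0.92,
    step: float = 0.05,     # assumed increment
    ceiling: float = 0.99,  # assumed upper bound
) -> float:
    """Raise the escalation confidence threshold while accuracy is below target."""
    if accuracy >= target:
        return current_threshold
    return min(ceiling, round(current_threshold + step, 4))


def groups_requiring_review(
    approval_rates: dict[str, float], max_gap: float = 0.05
) -> set[str]:
    """Flag groups whose approval rate trails the best-served group by more than max_gap."""
    best = max(approval_rates.values())
    return {group for group, rate in approval_rates.items() if best - rate > max_gap}
```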

Continuous monitoring transforms governance from a static checklist into a dynamic practice. By measuring what matters and adjusting policies based on evidence, enterprises can maintain compliance while optimizing AI autonomy for business value.

Integrating Governed AI Guardrails Into Your Enterprise Architecture

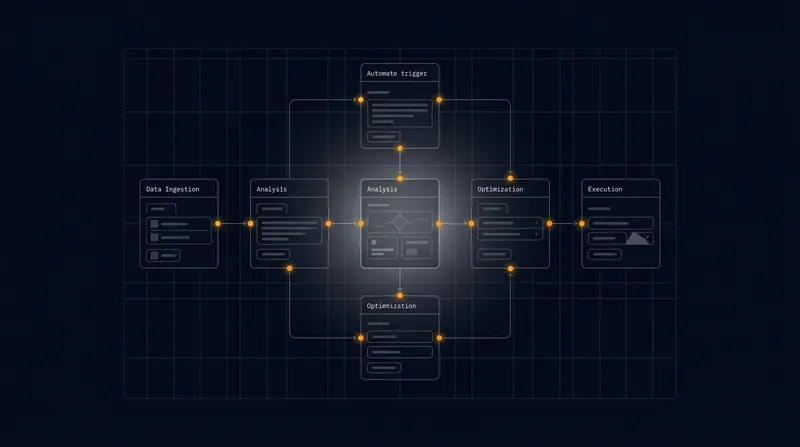

Effective governance requires integration with your broader enterprise technology stack. Governed AI systems do not operate in isolation - they interact with data platforms, business applications, workflow systems, and human teams. Integration ensures that governance rules flow through the entire AI lifecycle and that compliance is maintained across system boundaries.

Start with data integration. Your AI systems need access to high-quality, compliant data. This means integrating with data governance platforms that track data lineage, enforce data quality standards, and ensure that sensitive data is properly protected. When an AI system requests training data, data governance systems should automatically verify that the data meets policy requirements: Is it from an approved source? Has it been properly anonymized if it contains personal information? Does it meet freshness requirements? Has it been flagged for any quality issues? This integration ensures that AI systems are built on reliable, compliant foundations.
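The four questions above map onto a simple intake check. The `Dataset` fields, the approved source names, and the one-year freshness limit are all assumptions for this sketch; a data governance platform would supply the real values.

```python
from dataclasses import dataclass
from datetime import date, timedelta

APPROVED_SOURCES = {"crm", "core_banking"}  # hypothetical source names
MAX_AGE = timedelta(days=365)               # assumed freshness requirement


@dataclass(frozen=True)
class Dataset:
    source: str
    contains_pii: bool
    anonymized: bool
    last_refreshed: date
    quality_flags: tuple[str, ...] = ()


def intake_issues(ds: Dataset, today: date) -> list[str]:
    """Return the policy problems that block a dataset from training use."""
    issues = []
    if ds.source not in APPROVED_SOURCES:
        issues.append("unapproved_source")
    if ds.contains_pii and not ds.anonymized:
        issues.append("pii_not_anonymized")
    if today - ds.last_refreshed > MAX_AGE:
        issues.append("stale_data")
    if ds.quality_flags:
        issues.append("open_quality_flags")
    return issues
```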

Next, integrate with model development platforms and registries. As data scientists develop new models, these platforms should automatically check: Does the model meet minimum accuracy thresholds? Has explainability been documented? Have fairness metrics been evaluated? Are all required tests complete? Only models that pass these automated checks should be eligible for promotion to production. This prevents non-compliant models from ever reaching customers.
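A promotion gate can be as small as a checklist that fails closed. The check names below are hypothetical labels for the four questions above; a missing result is treated as a failure.

```python
REQUIRED_CHECKS = (
    "accuracy_ok",
    "explainability_documented",
    "fairness_evaluated",
    "tests_complete",
)


def missing_checks(results: dict[str, bool]) -> list[str]:
    """List required checks that failed or were never recorded."""
    return [check for check in REQUIRED_CHECKS if not results.get(check, False)]


def eligible_for_production(results: dict[str, bool]) -> bool:
    return not missing_checks(results)
```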

Integrate governed AI systems with your workflow and process automation platforms. When an AI system makes a decision, this decision should flow seamlessly into downstream workflows. If the AI system routes a decision to human review, this should automatically create a task in your workflow system with all relevant context. If a human approves or rejects the AI decision, this feedback should flow back to monitoring and model improvement systems. This bidirectional integration ensures that governance is embedded in actual business processes, not just in abstract policies.

Integrate with your identity and access management (IAM) systems. This ensures that role-based access controls are consistently enforced across all AI systems. When an employee changes roles, their access to AI systems should automatically adjust. When a contractor's engagement ends, their access should be automatically revoked. This integration reduces the burden on security teams and ensures that access remains compliant.

Integrate with your audit and logging infrastructure. All decisions made by AI systems, all policy checks, all human overrides, and all policy changes should be logged to a centralized system. This creates an immutable audit trail that can be queried during compliance reviews. Logs should be retained according to regulatory requirements - typically 3-7 years depending on your industry. This integration ensures that you can always demonstrate to auditors exactly how your AI systems operated and whether they complied with policies.

Integrate with your business intelligence and analytics platforms. Monitoring metrics should flow into BI systems where they can be analyzed, visualized, and shared with stakeholders. This integration ensures that business leaders have visibility into AI system performance and can make informed decisions about whether to expand, modify, or retire AI systems.

Finally, integrate with your incident management and response systems. If an AI system violates a critical policy or experiences a failure that affects customers, this should automatically trigger an incident ticket. Incident response teams should have easy access to relevant logs, monitoring data, and policy documentation so they can investigate and remediate quickly. This integration ensures that governance violations are treated with appropriate urgency.

Integration is the glue that holds governance together. Without integration, governance rules exist only in documentation. With integration, governance is embedded in every system and process.

Satisfying Auditors and Risk Teams Through Transparent Governance

Ultimately, governed AI systems must satisfy external and internal scrutiny. Auditors - whether internal audit teams, external auditors, or regulators - need to verify that your AI systems comply with policies and regulations. Risk teams need confidence that AI systems are not exposing the organization to unacceptable risk. Transparency is the key to building this confidence.

Create comprehensive documentation of your AI governance framework. This should include: your policy taxonomy and how it maps to regulatory requirements, technical architecture diagrams showing where guardrails are enforced, descriptions of monitoring systems and what metrics they track, access control matrices showing who has permissions to what systems, audit logs and how they are retained, incident response procedures for governance violations, and evidence of regular testing and validation. This documentation should be organized, up-to-date, and readily available for auditors.

Establish regular audit readiness reviews. Quarterly, conduct a self-assessment: Is every AI system properly documented? Are all policies current? Are guardrails functioning as designed? Are monitoring systems capturing the right metrics? Are audit logs complete? Are access controls properly enforced? By conducting these self-assessments before external audits, you can identify and remediate gaps proactively.

Implement a policy exception management process. Sometimes, business needs require operating outside normal governance constraints. A high-value customer might need a decision to be made faster than normal policy allows. A new use case might require temporary deviation from policy while permanent policy is developed. Rather than allowing ad-hoc exceptions, establish a formal process: exceptions must be documented, justified, approved by appropriate stakeholders, time-limited, and monitored. This shows auditors that you are not ignoring governance - you are managing exceptions in a controlled, documented way.
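The properties of a controlled exception (documented, justified, approved, time-limited) can be captured in one record type. The field names and the two-approver minimum are assumptions for this sketch.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PolicyException:
    policy_id: str
    justification: str
    approved_by: tuple[str, ...]
    expires_on: date

    def is_active(self, today: date, min_approvers: int = 2) -> bool:
        """An exception counts only if justified, sufficiently approved, and unexpired."""
        return (
            bool(self.justification.strip())
            and len(set(self.approved_by)) >= min_approvers
            and today <= self.expires_on
        )
```

Because expiry is checked on every use, an exception lapses on its own instead of relying on someone remembering to revoke it.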

Demonstrate the business value of governance. Auditors and risk teams sometimes see governance as purely a cost center. Help them understand the value: governance reduces regulatory risk and potential penalties, improves customer trust and brand reputation, enables faster scaling because systems are reliable and compliant, and reduces operational risk from AI failures. By quantifying these benefits, you build support for governance investments.

Engage auditors early in new AI system deployments. Rather than building a system and then asking auditors to approve it, involve them during design. Ask: What do you need to see to be confident this system is compliant? What documentation is required? What monitoring must be in place? What exceptions would require escalation? By addressing audit concerns upfront, you accelerate deployment and reduce the risk of costly rework.

Maintain relationships with your regulatory contacts. If your industry is regulated (financial services, healthcare, telecommunications), establish relationships with relevant regulators. Share your governance framework and ask for feedback. Demonstrate that you are taking AI governance seriously. This proactive engagement can make formal regulatory reviews smoother, because reviewers already understand your framework and have seen evidence of how it works.

Transparency is not a burden - it is a competitive advantage. Organizations that openly demonstrate their governance maturity build trust with auditors, regulators, customers, and employees. This trust translates into faster approvals, lower compliance costs, and greater organizational agility.

Practical Implementation Roadmap for Enterprise AI Governance

Implementing comprehensive AI governance is a multi-phase effort. Most enterprises benefit from a phased approach that builds capability incrementally while delivering value at each stage.

Phase 1: Assessment and Planning (Weeks 1-4) - Inventory all AI systems currently deployed or in development. Document their purpose, scope, and current governance maturity. Identify regulatory requirements applicable to your industry and organization. Define your target governance model and policy taxonomy. Establish governance leadership and assign clear accountability. Conduct a gap analysis comparing current state to target state. This phase typically involves interviews with business leaders, technical teams, compliance, and risk to understand current practices and constraints.

Phase 2: Policy Development (Weeks 5-8) - Develop your policy taxonomy based on risk stratification. Create detailed policy documents for each tier and domain. Define specific, measurable requirements that engineers can implement. Establish exception management processes. Get buy-in from key stakeholders including business leaders, technical teams, and compliance. This phase is critical because policies must be both technically implementable and business-aligned. Policies that are too strict will stifle innovation; policies that are too loose will not provide adequate protection.

Phase 3: Technical Implementation (Weeks 9-16) - Build or configure the technical infrastructure for governed AI guardrails. This might include deploying a compliance engine, implementing monitoring dashboards, configuring access controls, and setting up audit logging. Start with one or two pilot AI systems to test the governance framework before rolling out broadly. Use these pilots to identify gaps and refine processes. This phase requires close collaboration between compliance teams and engineering teams to ensure that policies are correctly translated into technical controls.

Phase 4: Pilot Deployment and Validation (Weeks 17-20) - Deploy the governance framework with pilot AI systems. Monitor closely to ensure guardrails are functioning as designed. Collect feedback from business teams, data scientists, and compliance. Validate that monitoring systems are capturing the right metrics. Conduct a pilot audit to ensure auditors can verify compliance. Make refinements based on pilot experience before broader rollout.

Phase 5: Rollout and Scaling (Weeks 21+) - Roll out the governance framework to all existing AI systems and establish it as standard practice for new systems. Provide training to all teams on the governance framework and their roles within it. Establish governance review cadences and monitoring routines. Continuously refine policies and controls based on monitoring data and lessons learned. This phase is ongoing - governance is not a destination but a continuous practice.

Throughout this roadmap, maintain clear communication with stakeholders. Governance can be perceived as restrictive or burdensome if not properly explained. Frame governance as enabling faster, safer AI deployment. Show how governance reduces risk and accelerates time-to-value. Celebrate wins - when governance prevents a problematic AI decision or when monitoring detects and corrects model drift before it causes customer impact, highlight these as successes.

Emerging Trends and Future Considerations in AI Governance

The field of AI governance is rapidly evolving. Several trends are shaping how enterprises approach this challenge. Explainable AI (XAI) is becoming increasingly important as regulators demand that organizations can explain why AI systems make decisions. This is driving investment in interpretability techniques and tools that make AI decision-making transparent. Federated governance is emerging as enterprises with multiple business units or geographic locations recognize that one-size-fits-all governance does not work - different units may need different policies based on their risk profile and regulatory environment. AI risk quantification is advancing, enabling organizations to assign numerical risk scores to AI systems and use these to prioritize governance investments.

The regulatory landscape continues to evolve. The EU AI Act, which entered into force in August 2024 with obligations phasing in over the following years, establishes requirements for high-risk AI systems. Similar regulations are being developed in other jurisdictions. Organizations need governance frameworks flexible enough to adapt to changing regulations without complete redesign. Automated compliance checking will become standard practice - manual compliance reviews will be seen as insufficient and too slow. AI governance platforms - dedicated software systems designed to manage AI governance - are emerging as a product category and will likely become as standard as data governance platforms are today.

Finally, the relationship between AI governance and AI ethics is evolving. Governance has traditionally focused on regulatory compliance and risk mitigation. Ethics is increasingly seen as equally important - organizations are asking not just "Is this legal?" but "Is this right?" This is driving governance frameworks that incorporate ethical considerations alongside compliance requirements. Organizations that can balance governance, ethics, and business value will be best positioned to lead in the AI era.

Conclusion: Governance as a Competitive Advantage

Balancing autonomy and compliance in enterprise AI is not a constraint on innovation - it is the foundation that enables sustainable, trustworthy AI at scale. Organizations that implement comprehensive governed AI guardrails see measurable benefits: faster time-to-production for new AI systems because governance is built in rather than bolted on afterward, higher confidence from stakeholders including auditors and customers, reduced regulatory risk and potential penalties, improved AI system reliability and performance, and greater organizational agility to respond to market changes.

At A.I. PRIME, we understand that governance is not a checkbox exercise - it is a strategic capability that distinguishes leading enterprises from followers. Our governance integration services help you build comprehensive AI governance frameworks tailored to your industry, regulatory environment, and business strategy. We work with your teams to develop policy taxonomies, implement automated compliance checking, establish role-based controls, and deploy continuous monitoring. We help you satisfy auditors and risk teams while enabling your AI systems to operate autonomously where appropriate.

Whether you are just beginning your AI governance journey or refining existing frameworks, we can help. Our AI consulting and workflow design services include governance architecture as a core component. We help you assess your current governance maturity, identify gaps, and implement solutions that balance autonomy with compliance. We deploy governed AI guardrails that integrate seamlessly with your existing systems and processes. We establish monitoring and continuous improvement practices that keep governance current as your AI footprint and regulatory landscape evolve.

The enterprises winning in the AI era are those that treat governance as a strategic advantage rather than a burden. They move faster because their governance is built in. They take more risk in areas where it is justified because their controls give them confidence. They scale AI across the organization because they have the governance infrastructure to manage it. If you are ready to build this capability, we are ready to help. Contact us today to discuss how our governance integration and AI consulting services can accelerate your enterprise AI transformation while maintaining the compliance and risk management your organization requires.

The future of enterprise AI belongs to organizations that master the balance between autonomy and compliance. Let us help you get there.
