A.I. PRIME - Article

Governed AI Guardrails: Implementing Compliant Oversight for Agentic Systems

Embed governance directly into agentic AI architecture to balance autonomy with compliance, audit trails, and real-time policy enforcement.

As autonomous agents become central to enterprise operations, a critical tension emerges: how do you empower these systems to act with velocity while maintaining the control, transparency, and compliance your organization demands? Many enterprises deploying agentic AI face this exact dilemma. They want agents to make decisions in real time, execute workflows without human intervention, and drive measurable ROI. Yet regulators, risk teams, and internal audit functions rightfully demand visibility into every decision an agent makes, proof that those decisions align with policy, and the ability to intervene when necessary. The answer lies not in choosing between speed and safety, but in embedding governance integration directly into your agentic architecture from day one. This approach ensures that compliance, auditability, and explainability become operational advantages rather than bottlenecks. In this guide, we explore how to design and deploy governed AI guardrails that let your autonomous agents thrive within a framework of intelligent oversight, reducing regulatory risk while accelerating outcomes.

The Core Challenge: Balancing Autonomy with Accountability

Autonomous agents represent a fundamental shift in how enterprises automate work. Unlike traditional rule-based workflows that execute predefined sequences, agentic systems learn from context, adapt to new scenarios, and make decisions that humans may not have explicitly programmed. This flexibility is their superpower - it enables rapid response to market changes, personalized customer interactions, and operational efficiencies that manual processes cannot match. Learn more in our post on CEO Guide: Overcoming the Gen-AI Paradox with Agentic AI.

However, this autonomy creates a governance paradox. The more freedom an agent has to act independently, the harder it becomes for enterprises to ensure compliance, maintain audit trails, and demonstrate that decisions align with regulatory requirements. A sales agent autonomously reaching out to prospects needs guardrails that prevent discriminatory targeting. A financial processing agent handling transactions must leave an immutable record of every decision and its justification. A healthcare scheduling agent cannot violate patient privacy regulations, even in pursuit of operational efficiency.

The challenge is not whether to govern autonomous agents - it is how to govern them in ways that enhance rather than hinder their effectiveness. Governance integration means embedding policy enforcement, auditability, and explainability into the agent's decision-making engine itself, rather than bolting oversight on afterward.

Traditional governance approaches treat compliance as a post-hoc verification layer. Agents execute actions, and then human auditors review logs to confirm adherence to policy. This reactive model creates delays, increases costs, and introduces blind spots where non-compliant behavior goes undetected until it causes damage. Modern governance integration flips this model: compliance becomes a real-time, embedded constraint that shapes every decision an agent makes before it acts.

This shift requires a new architecture. Your agentic systems must integrate governance at multiple layers - from the data inputs the agent accesses, to the decision logic it applies, to the outputs it generates and the audit records it maintains. When designed correctly, this integration actually accelerates agent performance by reducing friction, eliminating post-action corrections, and building trust with stakeholders who might otherwise resist agentic automation.

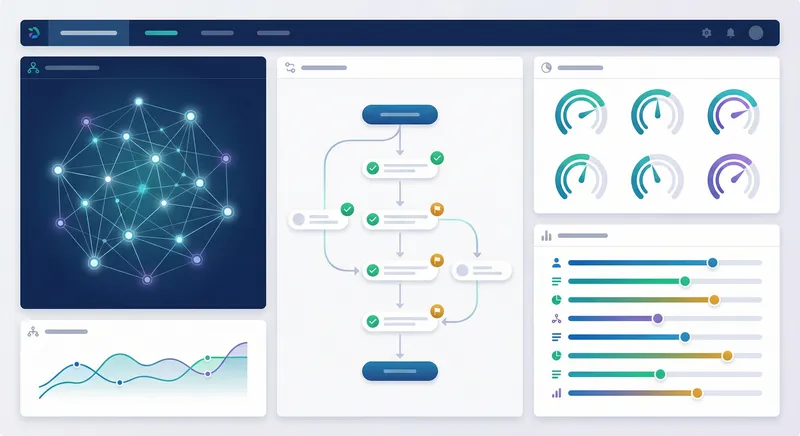

Governance Integration: The Five Pillars of Compliant Agentic Systems

Effective governed AI guardrails rest on five interconnected pillars. Each addresses a distinct dimension of compliance and control, yet together they form a unified framework that allows agents to operate autonomously within defined boundaries. Learn more in our post on Integration Patterns: APIs, Event Streaming, and Connectors for Autonomous Agents.

Pillar One: Auditability and Immutable Decision Trails

Auditability is the foundation of any compliant autonomous system. Regulators, auditors, and risk teams must be able to reconstruct exactly what an agent did, why it did it, and what information informed each decision. This requires more than simple logging. A comprehensive audit trail captures not just the final action, but the reasoning chain that led to it.

In practice, this means recording the agent's input data, the policies and constraints it considered, the alternatives it evaluated, and the decision weights it applied. For a loan approval agent, the audit trail should show which applicant characteristics were evaluated, how they were weighted against lending policy, what thresholds triggered approval or denial, and which human policies were applied at each decision point. If a regulator later questions whether the agent applied fair lending practices, your organization can produce a complete, timestamped record that demonstrates compliance.

Immutable decision trails go further. Using append-only storage combined with cryptographic hashing (or, where multiple parties must share the record, a blockchain-style ledger), you create a tamper-evident record: any retroactive alteration becomes detectable. This is particularly critical in highly regulated industries like finance and healthcare, where the integrity of decision records directly impacts legal liability. Such a trail shows not only what the agent decided, but that the record has not been modified since it was written - a powerful defense in audits or regulatory investigations.

Implementation requires careful architecture. Your agent framework must capture decision data at the moment of action, store it in a secure, append-only system, and make it easily accessible to audit tools. This should happen transparently - agents should not need to pause or slow down to create audit records. The logging infrastructure runs in parallel, capturing data without impacting agent velocity.
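As a rough illustration of the tamper-evident idea, the sketch below chains each audit entry to the hash of the previous one using Python's standard `hashlib`. The `AuditLog` class, its field names, and the sample records are hypothetical, and an in-memory list stands in for a real append-only store.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


@dataclass
class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""
    entries: list = field(default_factory=list)

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        payload = json.dumps(record, sort_keys=True)  # stable serialization
        entry_hash = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        self.entries.append({"prev": prev_hash, "payload": payload, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            expected = hashlib.sha256((prev_hash + entry["payload"]).encode("utf-8")).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"agent": "loan-agent", "decision": "approve", "policy": "lending-v3"})
log.append({"agent": "loan-agent", "decision": "deny", "policy": "lending-v3"})
```

A hash chain makes tampering detectable rather than impossible, so in practice the latest hash would also need to be anchored somewhere the writer cannot alter.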

Pillar Two: Access Controls and Data Boundaries

Autonomous agents are only as trustworthy as the data they can access. An agent with unrestricted access to customer records, financial systems, or proprietary information poses massive compliance and security risks. Governed AI guardrails include fine-grained access controls that define exactly which data sources an agent can query, under what conditions, and with what restrictions.

This moves beyond traditional role-based access control (RBAC) as it is usually deployed, with relatively static roles designed around human operators. Agents operate continuously, autonomously, and at scale - they may make thousands of decisions per hour. Your access control framework must be equally dynamic and precise. An agent handling customer service inquiries might have read-only access to customer contact information and order history, but zero access to payment methods or social security numbers. A procurement agent might access vendor databases and inventory systems, but not employee salary information or strategic sourcing plans.

Data boundaries also include temporal constraints. An agent might have access to real-time transaction data for the current day, but only historical summaries for older periods. It might have access to live customer data during business hours, but only anonymized data for model training after hours. These boundaries are enforced at the data layer, not the agent layer - a request for data the agent is not authorized to see is rejected before it ever reaches the underlying source.

Implementation requires integration with your data governance infrastructure. A modern data platform should support attribute-based access control (ABAC), where access decisions are based on attributes of the agent, the data, the context, and the policy. Your agents connect through a governance-aware data layer that enforces these controls transparently. When an agent requests data, the system evaluates whether the request complies with policy, grants or denies access, and logs the attempt for audit purposes.
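A minimal sketch of the request flow just described: evaluate the request against policy, grant or deny, and log the attempt. The attribute names, the `POLICIES` table, and the sensitivity levels are illustrative assumptions, not the schema of any particular ABAC product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    agent_role: str    # attribute of the agent
    resource: str      # attribute of the data
    sensitivity: str   # attribute of the data
    action: str        # "read" or "write"


# Hypothetical policy table: (role, resource) -> permitted actions and sensitivity ceiling.
POLICIES = {
    ("customer_service", "contact_info"): {"actions": {"read"}, "max_sensitivity": "internal"},
    ("customer_service", "order_history"): {"actions": {"read"}, "max_sensitivity": "internal"},
}
SENSITIVITY_RANK = {"public": 0, "internal": 1, "restricted": 2}

access_log = []  # stand-in for the audit system


def is_allowed(req: AccessRequest) -> bool:
    """Default-deny check: no matching rule means no access."""
    rule = POLICIES.get((req.agent_role, req.resource))
    # Unknown sensitivity labels are treated as more sensitive than any known level.
    level = SENSITIVITY_RANK.get(req.sensitivity, len(SENSITIVITY_RANK))
    allowed = (
        rule is not None
        and req.action in rule["actions"]
        and level <= SENSITIVITY_RANK[rule["max_sensitivity"]]
    )
    access_log.append((req, allowed))  # every attempt is logged, granted or not
    return allowed
```

The default-deny shape matters more than the specific attributes: a resource with no rule, such as payment methods for the customer service role, is refused without any explicit "deny" entry.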

Pillar Three: Policy Enforcement and Constraint-Based Reasoning

Policies define the rules that autonomous agents must follow. These range from high-level business rules (never approve loans to applicants with credit scores below 650) to compliance constraints (do not process transactions from sanctioned jurisdictions) to operational guidelines (escalate decisions involving amounts over $100,000 to human review). Governed AI guardrails embed these policies directly into the agent's decision-making engine.

Constraint-based reasoning treats policies as hard constraints that shape what actions an agent can take. Rather than allowing the agent to decide freely and then checking whether its decision violates policy, the framework limits the agent's decision space to actions that are policy-compliant from the start. This is more efficient and more reliable than post-hoc policy checking.

Consider a sales agent that autonomously reaches out to prospects. Without embedded policy enforcement, the agent might make outreach decisions based purely on predicted conversion probability, potentially targeting protected classes or violating anti-discrimination regulations. With constraint-based governance, the agent's decision engine includes fairness constraints that prevent it from considering protected attributes, geographic constraints that prevent outreach to certain regions, and opt-out constraints that prevent contacting prospects who have requested exclusion.

Implementing policy enforcement requires a policy language that is expressive enough to capture complex business rules, yet interpretable enough that auditors can verify compliance. Many organizations use declarative policy frameworks where policies are written as rules or logical statements, separate from the agent's core logic. This separation makes policies easier to update, audit, and version control. When regulations change, you update the policy framework without touching the agent code itself.
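To make the constraint-based idea concrete, here is a small sketch in which the sales agent ranks only prospects that already satisfy every constraint. The `Prospect` fields, the `BLOCKED_REGIONS` set, and the constraint names are assumptions for illustration. Note that the record deliberately carries no protected attributes, so the ranking step cannot consider them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prospect:
    prospect_id: str
    region: str
    opted_out: bool
    predicted_conversion: float  # no protected attributes are present on this record


BLOCKED_REGIONS = {"region-x"}  # hypothetical geographic restriction

# Declarative constraints, kept separate from the ranking logic below.
CONSTRAINTS = [
    ("opt_out", lambda p: not p.opted_out),
    ("region", lambda p: p.region not in BLOCKED_REGIONS),
]


def compliant_candidates(prospects):
    """Restrict the decision space to prospects that satisfy every constraint."""
    return [p for p in prospects if all(check(p) for _, check in CONSTRAINTS)]


def choose_outreach(prospects, limit):
    """Rank by predicted conversion, but only within the compliant set."""
    allowed = compliant_candidates(prospects)
    return sorted(allowed, key=lambda p: p.predicted_conversion, reverse=True)[:limit]


prospects = [
    Prospect("p1", "region-a", False, 0.90),
    Prospect("p2", "region-x", False, 0.95),  # blocked region
    Prospect("p3", "region-a", True, 0.99),   # opted out
    Prospect("p4", "region-b", False, 0.40),
]
selected = choose_outreach(prospects, limit=2)
```

Because `CONSTRAINTS` is plain data, a regulatory change becomes an edit to that list rather than to `choose_outreach`.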

Pillar Four: Explainability and Decision Transparency

Even with perfect auditability and policy enforcement, autonomous agents face a credibility problem: stakeholders do not trust decisions they cannot understand. Explainability addresses this by providing clear, human-comprehensible explanations for why an agent made a particular decision. This is essential for building trust with business stakeholders, regulators, and the customers affected by agent decisions.

Explainability takes multiple forms. At the simplest level, an agent should be able to articulate which factors influenced a decision. A credit approval agent might explain: "This application was approved because the applicant's debt-to-income ratio is 0.35 (below our 0.43 threshold), credit score is 745 (above our 700 minimum), and employment tenure is 8 years (above our 2-year minimum). All three factors met policy requirements." This explanation is specific, policy-grounded, and verifiable.

More sophisticated explainability techniques provide counterfactual explanations: what would have to change for the decision to be different? For the loan applicant above, the explanation might continue: "If the credit score were below 700, the application would be denied, even with the other two factors unchanged." For a denied applicant, the same technique identifies which factor fell short and what value would have met the threshold. This helps applicants understand what they would need to do to change the outcome, and helps regulators verify that the decision boundary is appropriate.
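For a purely rule-based decision, both kinds of explanation can be generated directly from the policy thresholds. The sketch below reuses the three thresholds from the credit example above. The `Criterion` class, the field names, and the treatment of boundary values as passing are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    threshold: float
    direction: str  # "max": value must be <= threshold; "min": value must be >= threshold

    def passes(self, value) -> bool:
        return value <= self.threshold if self.direction == "max" else value >= self.threshold


CRITERIA = [
    Criterion("debt_to_income", 0.43, "max"),
    Criterion("credit_score", 700, "min"),
    Criterion("employment_years", 2, "min"),
]


def explain(applicant):
    """Return (approved, per-factor lines) grounded in the policy thresholds."""
    approved, lines = True, []
    for c in CRITERIA:
        value = applicant[c.name]
        ok = c.passes(value)
        approved = approved and ok
        bound = "at most" if c.direction == "max" else "at least"
        lines.append(f"{c.name} = {value} (policy: {bound} {c.threshold}): {'met' if ok else 'NOT met'}")
    return approved, lines


def counterfactuals(applicant):
    """For each factor, state the change that would flip that factor's result."""
    notes = []
    for c in CRITERIA:
        ok = c.passes(applicant[c.name])
        if c.direction == "max":
            flip = f"above {c.threshold}" if ok else f"at or below {c.threshold}"
        else:
            flip = f"below {c.threshold}" if ok else f"at or above {c.threshold}"
        notes.append(f"{c.name} {flip} would {'fail' if ok else 'satisfy'} this criterion")
    return notes


applicant = {"debt_to_income": 0.35, "credit_score": 745, "employment_years": 8}
approved, reasons = explain(applicant)
```

Explanations produced this way are verifiable by construction, since they are derived from the same thresholds the decision used. Opaque models need separate attribution techniques that this sketch does not cover.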

Explainability also serves quality assurance. By examining explanations across many decisions, you can identify patterns - perhaps the agent is systematically overweighting certain factors, or systematically making decisions that violate implicit business intent. These patterns surface problems early, before they scale into widespread compliance violations.

Implementing explainability requires designing agents with interpretability in mind from the start. Some agent architectures are inherently more explainable than others. Rule-based agents, where decisions flow from explicit if-then policies, are highly explainable. Deep learning models, while powerful, are often opaque. Many organizations use hybrid approaches: a neural component for pattern recognition and feature extraction, combined with a rule-based or decision tree component for final decision-making. This preserves the power of machine learning while maintaining explainability.

Pillar Five: Automated Policy Enforcement and Real-Time Guardrails

The final pillar is automation. Governance integration is not just about creating policies and recording decisions - it is about enforcing policies in real time, automatically, as agents operate. This requires guardrail systems that monitor agent behavior continuously and intervene when necessary.

Real-time guardrails operate at multiple levels. At the decision level, guardrails prevent an agent from taking actions that violate policy. At the behavioral level, guardrails detect patterns of decisions that collectively violate policy, even if each individual decision is compliant. At the outcome level, guardrails monitor the real-world results of agent decisions and flag unexpected patterns that might indicate a problem.

For example, a hiring agent might be policy-compliant in each individual hiring decision - it never explicitly considers protected characteristics, it applies consistent evaluation criteria, it documents its reasoning. Yet if you analyze the aggregate outcomes, you discover that the agent is systematically hiring fewer women than men, despite receiving equally qualified female applicants. This pattern violation would be caught by behavioral guardrails that analyze aggregate outcomes, not just individual decisions.

Automated enforcement means guardrails trigger interventions without human involvement. A decision that violates hard constraints is simply prevented - the agent cannot execute it. A decision that violates soft constraints might be flagged for human review, with the agent pausing until a human approves or rejects the action. A behavioral pattern that suggests systemic bias might trigger automatic model retraining or policy adjustment.

Implementing real-time guardrails requires infrastructure that can evaluate policies continuously, at scale, with minimal latency. This is where modern observability and monitoring platforms become essential. Your agent framework should emit decision events in real time, feeding them into a monitoring system that evaluates them against guardrail policies. When a violation is detected, the system can trigger automated responses - blocking the action, escalating for review, logging an alert, or updating the agent's constraints.
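A behavioral guardrail of the kind described for the hiring example can be approximated by comparing selection rates across groups over a window of decisions. This is a sketch only: the group labels, the `max_gap` parameter, and the choice of a simple rate difference as the metric are assumptions, and a real deployment would need a statistically sound test and sufficient sample sizes.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, selected) pairs. Returns the selection rate per group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def rate_gap_exceeded(decisions, max_gap):
    """Flag when the highest and lowest group selection rates differ by more than max_gap."""
    rates = selection_rates(decisions)
    if not rates:
        return False, rates
    gap = max(rates.values()) - min(rates.values())
    return gap > max_gap, rates


# Each individual decision may be compliant, yet the aggregate can still show a gap.
window = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)
flagged, rates = rate_gap_exceeded(window, max_gap=0.05)
```

The group labels here are visible only to the monitoring layer, not to the agent making decisions, which keeps the "never considers protected characteristics" property intact.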

Architecting Governance into Your Agentic System

Understanding the five pillars of governance integration is one thing; implementing them in your actual agentic systems is another. Architecture matters enormously. A system designed without governance in mind will be difficult and expensive to retrofit with compliance controls. A system designed with governance as a core architectural principle will be more efficient, more trustworthy, and easier to operate at scale. Learn more in our post on Security and Compliance for Agentic AI Automations.

The Governance-First Architecture Pattern

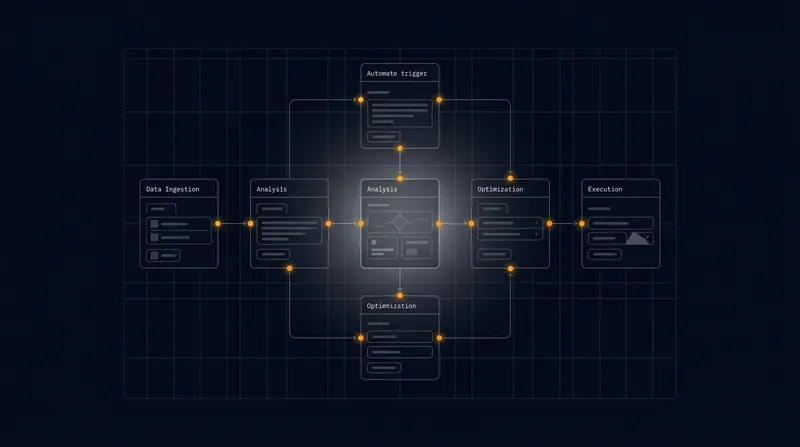

A governance-first architecture treats compliance as a first-class concern, not an afterthought. This means building governance controls into every layer of your agentic system - from data ingestion, through decision-making, to action execution and audit logging.

At the data layer, implement a governance-aware data access service. Rather than agents querying data sources directly, they request data through a service that enforces access controls, applies data transformations required by policy (like anonymization or aggregation), logs all data access, and returns data with metadata about its provenance and policy constraints. This service is the single point of enforcement for data governance.

At the decision layer, implement a policy engine that evaluates agent decisions against compliance constraints before they are executed. This engine takes as input the proposed decision, the relevant policies, and the decision context, and returns a verdict: approve, deny, or escalate. The policy engine should support multiple policy languages - some organizations use declarative rule languages, others use decision trees, others use more sophisticated constraint satisfaction approaches. The key is that policies are defined separately from agent code, versioned independently, and auditable.
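The approve, deny, or escalate contract can be sketched in a few lines. The rule set below borrows the example policies mentioned earlier in this article (a 650 minimum credit score, sanctioned jurisdictions, and a $100,000 review threshold). The rule names, the `SANCTIONED` placeholder, and the decision fields are hypothetical.

```python
from enum import Enum


class Verdict(Enum):
    APPROVE = "approve"
    DENY = "deny"
    ESCALATE = "escalate"


SANCTIONED = {"XX"}  # placeholder jurisdiction code, not a real list

# Policies live in data, separate from agent code: (name, predicate, verdict when triggered).
RULES = [
    ("min_credit_score", lambda d: d["credit_score"] < 650, Verdict.DENY),
    ("sanctioned_jurisdiction", lambda d: d["jurisdiction"] in SANCTIONED, Verdict.DENY),
    ("large_amount", lambda d: d["amount"] > 100_000, Verdict.ESCALATE),
]


def evaluate(decision):
    """Return (verdict, triggered rule names). Any DENY outranks ESCALATE."""
    triggered = [(name, verdict) for name, predicate, verdict in RULES if predicate(decision)]
    if any(verdict is Verdict.DENY for _, verdict in triggered):
        outcome = Verdict.DENY
    elif triggered:
        outcome = Verdict.ESCALATE
    else:
        outcome = Verdict.APPROVE
    return outcome, [name for name, _ in triggered]
```

Returning the triggered rule names alongside the verdict gives the audit trail its justification for free.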

At the execution layer, implement controls that prevent agents from executing decisions that have not been approved by the policy engine. This is a hard technical boundary - the agent simply does not have the capability to take actions that bypass governance. If a decision requires human approval, the agent pauses and waits for a human decision, which is then logged as part of the audit trail.
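One way to picture this boundary is a gate that owns the only path to execution and refuses anything without a recorded approval. The `ExecutionGate` class and its method names are assumptions for illustration. In a real system the boundary would be enforced by credentials and network controls, not by an in-process object.

```python
class NotApproved(Exception):
    """Raised when execution is attempted without a recorded approval."""


class ExecutionGate:
    def __init__(self):
        self._approved = set()
        self.audit = []  # stand-in for the audit trail

    def record_verdict(self, decision_id, verdict, approver):
        """Record a verdict from the policy engine or a human reviewer."""
        self.audit.append((decision_id, verdict, approver))
        if verdict == "approve":
            self._approved.add(decision_id)

    def execute(self, decision_id, action):
        """Run the action only if this decision has an unused approval."""
        if decision_id not in self._approved:
            raise NotApproved(decision_id)
        self._approved.discard(decision_id)  # approvals are single-use
        return action()


gate = ExecutionGate()
gate.record_verdict("d-1", "approve", approver="policy-engine")
result = gate.execute("d-1", lambda: "payment sent")
```

An escalated decision simply stays unapproved until a human verdict is recorded through the same `record_verdict` path, so the human decision lands in the audit trail alongside automated ones.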

At the logging layer, implement an immutable audit system that captures every decision, its justification, the policies applied, the data accessed, and the outcome. This audit system should be separate from operational systems - it is append-only (records can be added and read, but never modified or deleted) and designed for long-term retention and analysis. Audit data feeds into compliance reporting tools and forensic analysis systems.

Integration with Existing Enterprise Systems

Most enterprises have existing governance infrastructure - data catalogs, access management systems, policy repositories, compliance reporting tools. Governance integration means connecting your agentic systems to these existing tools, not building parallel governance systems.

This integration happens at multiple points. Your agents should query your data catalog to understand data lineage, ownership, and quality before using data in decisions. Your policy engine should pull policies from your central policy repository, ensuring agents follow the same policies as human employees. Your audit system should feed into your central audit log and compliance reporting infrastructure. Your access control system should integrate with your identity and access management platform.

This integration approach has multiple benefits. It ensures consistency - agents follow the same policies as human employees. It reduces duplication - you maintain one policy repository, not separate ones for agents and humans. It simplifies compliance - auditors can examine a unified governance infrastructure rather than trying to understand separate systems. And it makes governance updates efficient - when policies change, the change automatically applies to all agents that consume those policies.

Balancing Governance with Performance

A common concern with governance integration is performance overhead. If every agent decision must be evaluated against policies, logged to an audit system, and checked against access controls, will this slow down agent execution to unacceptable levels?

In practice, well-designed governance infrastructure adds minimal overhead. Access control checks are fast - they are simple attribute comparisons. Policy evaluation is fast - most policies are simple rules that evaluate in milliseconds. Logging is fast - it is asynchronous and does not block agent execution. The key is designing the infrastructure to minimize latency without compromising governance.

This requires careful architectural choices. Use caching to avoid repeated policy evaluations for similar decisions. Use asynchronous logging so audit writing does not block agent execution. Use distributed policy engines so evaluation scales horizontally. Use edge computing so governance enforcement happens close to where decisions are made, minimizing network latency.
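Two of these choices, caching and asynchronous logging, can be sketched with the Python standard library alone. The function names, the banded inputs, and the in-memory `written` list are hypothetical stand-ins. The one design point worth noting is that the policy version is part of the cache key, so a policy update never serves stale verdicts.

```python
import queue
import threading
from functools import lru_cache

audit_queue = queue.Queue()
written = []  # stand-in for the append-only audit store


def _audit_writer():
    """Background thread: drains the queue so the decision path never waits on storage."""
    while True:
        record = audit_queue.get()
        written.append(record)
        audit_queue.task_done()


threading.Thread(target=_audit_writer, daemon=True).start()


@lru_cache(maxsize=1024)
def evaluate_policy(policy_version, credit_band, amount_band):
    """Cached evaluation; inputs are banded so that similar decisions share a cache entry."""
    return "deny" if credit_band == "below_650" else "approve"


def decide(policy_version, credit_band, amount_band):
    verdict = evaluate_policy(policy_version, credit_band, amount_band)
    audit_queue.put((policy_version, credit_band, amount_band, verdict))  # does not block on storage
    return verdict


decide("v1", "650_plus", "under_100k")
decide("v1", "650_plus", "under_100k")  # served from the cache
audit_queue.join()  # for demonstration only: wait until both records are written
```

Even with a cache hit, every decision still produces its own audit record. Asynchronous logging trades a small window of unwritten records for latency, so a durable queue would be needed where losing records on a crash is unacceptable.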

In many cases, governance integration actually improves performance. By preventing invalid decisions at the point of decision-making, rather than catching them after the fact, you reduce the cost of error correction. By providing agents with clear policy constraints, you reduce the search space the agent must explore, making decision-making faster. By integrating with your data governance infrastructure, you ensure agents access optimized data pipelines rather than querying raw data sources.

Practical Implementation: From Design to Deployment

Implementing governed AI guardrails is not a single project - it is a continuous practice that evolves as your agentic systems mature. However, there are concrete steps you can take to move from design to deployment.

Step One: Map Your Compliance Requirements

Before you implement any governance controls, understand what you need to comply with. This includes regulatory requirements (industry-specific regulations like HIPAA or GDPR), internal policies (your organization's standards for decision-making), and stakeholder expectations (what your customers, partners, and employees expect from your systems).

Create a compliance map that documents these requirements and traces them to your agentic systems. Which agents handle regulated data? Which agents make decisions that could harm customers or violate regulations? Which agents operate in jurisdictions with specific compliance requirements? For each agent, identify the specific compliance obligations it must meet.

This map becomes your governance roadmap. It prioritizes which agents need which governance controls, and in what order to implement them. Agents handling sensitive data or making high-impact decisions get comprehensive governance first. Agents with lower risk can have simpler governance that can be enhanced over time.

Step Two: Design Policy Frameworks

For each agent, design a policy framework that captures the compliance requirements it must meet. This framework should be expressed in a form that is both machine-readable (so your policy engine can evaluate it) and human-readable (so auditors and business stakeholders can verify it).

A good policy framework includes constraints (hard rules that must never be violated), thresholds (decision boundaries that trigger human review or escalation), and fairness requirements (rules that ensure decisions are not discriminatory). For a credit approval agent, constraints might include "never approve applicants with credit scores below 650" and "never use age or gender in approval decisions." Thresholds might include "escalate decisions involving loan amounts over $500,000 to human review." Fairness requirements might include "ensure approval rates are within 5 percentage points across demographic groups."
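One possible machine-readable form for the credit example is a plain data structure that serializes to JSON for reviewers. The schema below (`constraints`, `thresholds`, `fairness`, `prohibited_features`, `rationale`) is an assumption for illustration rather than a standard, and the rationale strings are placeholders to be replaced with real business justifications.

```python
import json

CREDIT_POLICY = {
    "policy_id": "credit-approval",  # hypothetical identifier
    "version": "1.0.0",
    "prohibited_features": ["age", "gender"],
    "constraints": [
        {"id": "min-credit-score", "field": "credit_score", "op": ">=", "value": 650,
         "rationale": "placeholder: cite the lending standard this enforces"},
    ],
    "thresholds": [
        {"id": "large-loan-review", "field": "loan_amount", "op": ">", "value": 500_000,
         "action": "escalate", "rationale": "placeholder: cite the review policy"},
    ],
    "fairness": [
        {"id": "approval-rate-parity", "metric": "approval_rate_gap", "max_value": 0.05,
         "rationale": "placeholder: cite the fair-lending requirement"},
    ],
}


def validate(policy):
    """Lint a policy: every rule needs a rationale and must not use a prohibited feature."""
    errors = []
    prohibited = set(policy.get("prohibited_features", []))
    for section in ("constraints", "thresholds"):
        for rule in policy.get(section, []):
            if not rule.get("rationale"):
                errors.append(f"{rule['id']}: missing rationale")
            if rule["field"] in prohibited:
                errors.append(f"{rule['id']}: uses prohibited feature {rule['field']}")
    return errors


human_readable = json.dumps(CREDIT_POLICY, indent=2)  # the form auditors would review
```

Running a linter like `validate` in the policy review pipeline catches a rule that references age or gender before it is ever deployed.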

Document these policies clearly, with business justification for each. This documentation becomes part of your compliance evidence - it shows regulators that your governance is intentional and well-thought-out, not accidental.

Step Three: Implement Governance Infrastructure

With your policies defined, implement the infrastructure to enforce them. This includes a policy engine that evaluates decisions against policies, an audit system that logs all decisions and their justifications, an access control system that restricts agent data access, and monitoring systems that detect policy violations.

Start with the highest-risk agents first. Implement comprehensive governance for agents handling sensitive data or making high-impact decisions. As you build experience, expand governance to other agents. This phased approach reduces implementation risk and lets you learn from early deployments.

Use existing tools where possible. Many organizations already have policy engines, audit systems, and access control infrastructure. Integrate your agentic systems with these existing tools rather than building new ones. This reduces implementation cost and complexity.

Step Four: Test and Validate

Before deploying governed agents to production, test them thoroughly. This includes testing that governance controls work as intended, testing that policies are correctly implemented, and testing that governance does not introduce unintended side effects.

Create test scenarios that exercise your governance controls. For a credit approval agent, create test applications that should be approved, denied, and escalated. Verify that the agent makes the correct decisions and logs them correctly. Create test scenarios that attempt to violate policies - verify that the agent cannot execute policy-violating decisions.
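Scenarios like these lend themselves to a table-driven harness. In the sketch below, `decide` is a hypothetical stand-in for the governed agent under test, using the 650 score floor and $500,000 review threshold from the policy examples above. Boundary cases are included because off-by-one errors at thresholds are a common policy bug.

```python
def decide(application):
    """Stand-in for the governed agent under test (hypothetical logic)."""
    if application["credit_score"] < 650:
        return "deny"
    if application["amount"] > 500_000:
        return "escalate"
    return "approve"


# (scenario name, input application, expected verdict)
SCENARIOS = [
    ("clean approval", {"credit_score": 720, "amount": 50_000}, "approve"),
    ("below minimum score", {"credit_score": 600, "amount": 50_000}, "deny"),
    ("large loan", {"credit_score": 720, "amount": 750_000}, "escalate"),
    ("boundary score", {"credit_score": 650, "amount": 50_000}, "approve"),
    ("boundary amount", {"credit_score": 720, "amount": 500_000}, "approve"),
    ("hard constraint outranks escalation", {"credit_score": 600, "amount": 750_000}, "deny"),
]


def run_scenarios(decide_fn):
    """Return a list of (name, expected, actual) for every scenario that fails."""
    failures = []
    for name, application, expected in SCENARIOS:
        actual = decide_fn(application)
        if actual != expected:
            failures.append((name, expected, actual))
    return failures


failures = run_scenarios(decide)
```

Keeping the scenario table in a form compliance reviewers can read lets them add cases without touching the harness.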

Involve compliance and audit teams in testing. They understand regulatory requirements and can verify that governance implementation actually achieves compliance objectives. This early involvement builds confidence in the governed system and identifies problems before production deployment.

Step Five: Monitor and Improve

After deployment, continuously monitor your governed agents. Track metrics like policy violation rates, decision distribution across policy thresholds, escalation rates, and audit log completeness. These metrics tell you whether governance is working as intended.
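As a starting point, several of these metrics can be computed directly from audit records. The record fields (`verdict`, `justification`, `policy_version`) and the metric names are assumptions. Here "blocked rate" stands in for the share of proposed decisions that policy prevented.

```python
from collections import Counter


def governance_metrics(records):
    """Summarize a batch of audit records into basic governance health metrics."""
    total = len(records)
    if total == 0:
        return {}
    verdicts = Counter(record["verdict"] for record in records)
    # A record counts as complete only if it carries both a justification and a policy version.
    complete = sum(
        1 for record in records
        if record.get("justification") and record.get("policy_version")
    )
    return {
        "blocked_rate": verdicts["deny"] / total,
        "escalation_rate": verdicts["escalate"] / total,
        "audit_completeness": complete / total,
    }


records = [
    {"verdict": "approve", "justification": "all criteria met", "policy_version": "1.0.0"},
    {"verdict": "approve", "justification": "all criteria met", "policy_version": "1.0.0"},
    {"verdict": "deny", "justification": "min-credit-score", "policy_version": "1.0.0"},
    {"verdict": "escalate", "policy_version": "1.0.0"},  # missing justification
]
metrics = governance_metrics(records)
```

Tracking these values over time is more informative than any single reading: a sudden rise in escalations often points to a policy threshold that no longer matches the workload.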

Use audit logs to analyze agent behavior. Are agents making decisions that are compliant but unintended? Are certain policies being violated repeatedly, suggesting they need adjustment? Are there patterns in decisions that suggest bias or unfairness? This analysis feeds into continuous improvement of your governance framework.

Update policies as business needs and regulatory requirements change. Governance is not static - it evolves as your organization learns and as external requirements change. Treat policy updates as normal operational changes, with version control, testing, and change management.

Overcoming Common Implementation Challenges

Organizations implementing governed AI guardrails typically encounter several challenges. Understanding these challenges and how to address them accelerates your implementation.

Challenge One: Policy Definition Complexity

Policies are often harder to define than they initially appear. What seems like a simple rule - "never approve high-risk applicants" - becomes complex when you try to operationalize it. How do you define risk? What data do you use? How do you handle edge cases? How do you handle conflicts between multiple policies?

Address this by starting simple. Define policies at a high level first, then decompose them into specific, testable rules. Involve business stakeholders in policy definition - they understand the business intent behind policies. Involve compliance teams - they understand regulatory requirements. Involve data teams - they understand what data is available and reliable. This collaborative approach produces policies that are both compliant and operationally feasible.

Use policy versioning and testing to manage policy complexity. Treat policies like code - version them, test them, review them before deployment. This discipline prevents policy bugs that could lead to compliance violations.

Challenge Two: Legacy System Integration

Most enterprises have legacy systems that were not designed with governance integration in mind. Integrating governance with these systems is often complex and costly. A legacy data warehouse might not support fine-grained access controls. A legacy business system might not expose audit trails. A legacy policy system might not integrate with modern policy engines.

Address this by building an integration layer that sits between your agentic systems and legacy systems. This layer enforces governance even when the underlying systems do not natively support it. For example, if a legacy data warehouse does not support fine-grained access control, your integration layer can apply access controls at query time, masking or filtering data before returning it to agents.
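The query-time masking idea can be sketched as a thin wrapper around the legacy call. Here `legacy_query` is a stand-in for a warehouse that returns full rows with no access control, and the `VISIBLE_FIELDS` allowlist and sample values are hypothetical.

```python
MASK = "***"

# Hypothetical per-role allowlist; fields not listed are masked by default.
VISIBLE_FIELDS = {
    "customer_service": {"customer_id", "name", "order_history"},
}


def legacy_query(customer_id):
    """Stand-in for a legacy system that returns every column to every caller."""
    return {
        "customer_id": customer_id,
        "name": "Example Customer",
        "order_history": ["order-1"],
        "ssn": "000-00-0000",
        "payment_method": "card-on-file",
    }


def governed_query(agent_role, customer_id):
    """Integration layer: agents call this instead of the legacy system directly."""
    row = legacy_query(customer_id)
    visible = VISIBLE_FIELDS.get(agent_role, set())  # unknown roles see nothing
    return {key: (value if key in visible else MASK) for key, value in row.items()}


row = governed_query("customer_service", "c-42")
```

Masking rather than dropping fields keeps the row shape stable for downstream code. This only governs agents that are forced through the layer, so direct credentials to the legacy system must be withheld from them.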

Prioritize modernizing the most critical systems first. If your most sensitive data lives in a legacy system that cannot be governed, that becomes a priority for modernization. Over time, as you modernize systems, governance integration becomes easier and less costly.

Challenge Three: Performance and Scalability

Governance infrastructure adds overhead. At small scale, this overhead is negligible. At large scale - when agents are making thousands of decisions per second - governance overhead can become significant. Policy evaluation, access control checks, and audit logging all consume compute resources.

Address this through architectural optimization. Use caching to avoid repeated evaluations. Use asynchronous processing for audit logging. Use distributed systems to scale governance infrastructure horizontally. Use edge computing to move governance enforcement closer to decision-making. These techniques allow governance to scale with agent volume without becoming a bottleneck.

Measure overhead carefully. Many organizations find that governance overhead is much smaller than expected - often less than 5% latency impact for well-designed systems. In some cases, governance actually improves performance by preventing invalid decisions that would otherwise require correction.

Challenge Four: Stakeholder Buy-In

Governance adds visible constraints to agentic systems. Business stakeholders may perceive governance as slowing down agents or preventing them from taking optimal actions. Compliance teams may perceive governance as insufficient. IT teams may perceive governance as adding operational complexity.

Address this through clear communication about governance value. Governance is not just about compliance - it is about building trust and reducing risk. It is about enabling agents to operate at scale without creating liability. It is about providing the transparency and auditability that customers, partners, and regulators demand. Frame governance as an enabler of agentic automation, not a constraint on it.

Demonstrate governance value through metrics. Show how governance prevents compliance violations that would be costly. Show how governance enables faster deployment of agents because stakeholders trust them. Show how governance reduces operational risk. These metrics build buy-in across the organization.

The Future of Governed Agentic Systems

Governance integration is not a one-time implementation - it is an evolving practice as agentic AI technology matures and regulatory environments change. Several trends are shaping the future of this space.

First, governance is becoming more sophisticated. Early governance systems were relatively simple - they enforced basic rules and logged decisions. Modern systems are moving toward adaptive governance that learns from experience. If an agent is repeatedly making decisions that comply with policy but produce poor outcomes, governance systems can suggest policy adjustments. If certain policy combinations consistently cause conflicts, governance systems can recommend policy refinement. This adaptive approach makes governance more effective and less burdensome.

Second, governance is becoming more distributed. Rather than centralized governance infrastructure, future systems will push governance decision-making closer to where decisions are made. Edge agents will have local governance that understands their specific context and constraints. These local governance systems will coordinate with central governance to ensure consistency and compliance. This distributed approach reduces latency and increases resilience.

Third, governance standards are emerging. Industry groups and standards bodies are developing common frameworks for agentic AI governance. These standards will make it easier for organizations to implement governance, easier for auditors to verify compliance, and easier for agents from different vendors to operate together in a governed ecosystem.

Fourth, governance tooling is improving. Today, governance integration requires significant custom development. As the market matures, governance will be increasingly built into agentic platforms and tools. Organizations will be able to implement governance through configuration rather than custom coding. This democratization of governance will accelerate adoption.

The organizations that will lead in agentic AI are not those that move fastest without governance - they are those that figure out how to move fast while maintaining control. Governed AI guardrails are not a constraint on agentic velocity; they are the foundation that makes velocity sustainable and trustworthy at enterprise scale.

Conclusion: Governance as Competitive Advantage

Implementing governed AI guardrails is one of the most important decisions you will make in your agentic AI journey. It determines whether your autonomous agents become trusted, scalable assets that drive competitive advantage, or risky systems that create liability and constrain your organization's ability to innovate.

The good news is that governance integration is achievable. By embedding auditability, access controls, policy enforcement, explainability, and automated guardrails into your agentic architecture from the start, you create systems that are simultaneously more powerful and more trustworthy. These systems move faster because they do not need post-hoc verification. They scale further because stakeholders trust them. They create less risk because they operate within clear boundaries.

At A.I. PRIME, we specialize in helping enterprises implement governance integration as a core part of their agentic AI strategy. Our governance integration services go beyond policy definition - we help you design agentic architectures that are inherently compliant, implement infrastructure that enforces governance at scale, and establish practices that keep governance effective as your systems evolve.

We work with your compliance, risk, and IT teams to map your requirements and design governance frameworks that are both rigorous and operationally feasible. We integrate governance with your existing enterprise systems - data catalogs, policy repositories, audit infrastructure - so you have a unified governance approach. We implement monitoring and continuous improvement practices so your governance evolves with your business. And we provide ongoing enablement so your teams understand how to operate governed agentic systems effectively.

If you are deploying autonomous agents at scale and need to maintain compliance and control, governance integration is not optional - it is essential. The question is not whether to govern your agents, but how to govern them in ways that enhance rather than hinder their effectiveness. We can help you answer that question and implement the answer.

Start by assessing your current agentic systems against the five pillars of governance integration: auditability, access controls, policy enforcement, explainability, and automated guardrails. Where are you strong? Where are you vulnerable? What governance investments would reduce your risk and enable faster deployment? Our governance integration experts can help you answer these questions and develop a roadmap for implementation. Reach out to discuss how governed AI guardrails can accelerate your autonomous agent strategy while maintaining the control and compliance your organization demands.
