A.I. PRIME - Article

Composable Agent Playbooks: Rapidly Reconfigurable Automation for Dynamic Operations

Discover how composable agent playbooks enable rapid automation reconfiguration across IT, finance, and support functions.

Your enterprise faces a persistent challenge: workflows that worked yesterday no longer fit today's demands. IT operations teams scramble to reconfigure automation when business priorities shift. Finance departments struggle to adapt approval chains when organizational structures change. Customer support can't quickly pivot response protocols when new product lines launch. The result is operational friction, delayed time-to-value, and automation investments that fail to scale across functions.

This friction point reveals a critical gap in how most enterprises approach automation. Traditional workflow automation is rigid, monolithic, and tightly coupled to specific business contexts. When requirements change - and they always do - teams often end up rebuilding entire automation systems from scratch. The cost in development time, testing cycles, and operational disruption compounds quickly.

Composable agent playbooks represent a fundamental shift away from this paradigm. Rather than building single-purpose automation systems, composable playbooks enable you to construct modular, parameterized workflows that adapt rapidly to changing business needs. Think of them as intelligent building blocks that snap together, reconfigure, and redeploy across departments without requiring engineering-level intervention. This approach transforms automation from a static asset into a dynamic capability that evolves with your business.

In this comprehensive guide, we'll explore how to design, implement, and scale composable agent playbooks that accelerate automation across IT operations, finance, support, and beyond. You'll discover practical patterns for creating reusable components, establishing robust testing pipelines, and building governance frameworks that enable rapid reconfiguration while maintaining control and compliance.

Understanding Composable Agent Playbooks: The Foundation

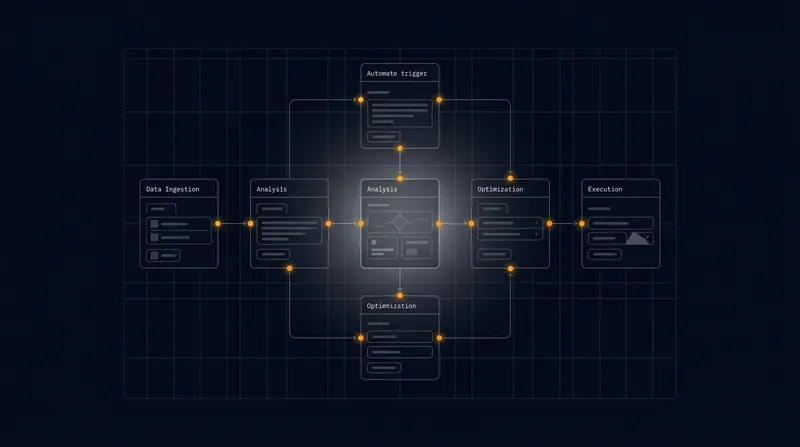

Composable agent playbooks represent a modern approach to enterprise automation that prioritizes flexibility, reusability, and rapid adaptation. At their core, they are parameterized workflows where individual steps, decision points, and agent interactions can be mixed, matched, and reconfigured without touching underlying code. This modularity enables teams to create new automation scenarios in hours rather than weeks. Learn more in our post on Agent Network Deployment: Scaling Multi-Agent Orchestration for the Enterprise.

The fundamental difference between traditional automation and composable playbooks lies in architectural philosophy. Traditional workflows are often designed for a single use case, with decision logic, data transformations, and action sequences tightly bound together. Composable playbooks, by contrast, decouple these elements into independently deployable components. Each component - whether it's a data validation step, an approval workflow, or a multi-agent coordination sequence - can be versioned, tested, and reused across multiple playbooks.

Consider a practical scenario: your IT operations team manages incident response workflows. Today's playbook handles network outages. Tomorrow, you need similar automation for security alerts. With traditional automation, you'd rebuild the entire system. With composable playbooks, you'd reuse the core escalation component, the notification sequence, and the status tracking logic - changing only the incident classification and initial detection parameters. Work that might have taken two weeks can shrink to a matter of hours.

The shift toward composable automation reflects a broader enterprise truth: the only constant is change. Organizations that can reconfigure their automation rapidly gain competitive advantage through operational agility.

The technical foundation of composable playbooks rests on several key principles. First, parameterization - allowing playbook behavior to be controlled through configuration rather than code. Second, modular design - breaking complex workflows into discrete, reusable components. Third, clear interfaces - ensuring components communicate through well-defined contracts. Fourth, versioning - enabling multiple versions of components to coexist, supporting gradual migration and rollback. These principles work together to create automation systems that are both flexible and reliable.

Composable playbooks also introduce a crucial capability: the ability to compose multi-agent workflows dynamically. Rather than hardcoding which agents participate in a workflow, composable playbooks enable you to declare agent roles, responsibilities, and interaction patterns through configuration. This means you can add specialized agents, adjust their sequence, or modify their decision criteria without disrupting the overall automation architecture.
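A minimal sketch of what "declaring agents through configuration" could look like. The agent functions, the `REGISTRY` mapping, and the `enabled` flag are all hypothetical names chosen for illustration; a real platform would have its own way of registering agents.

```python
from typing import Callable

Agent = Callable[[dict], dict]


# Hypothetical agents: each takes the shared context and returns an updated copy.
def classify(ctx: dict) -> dict:
    category = "billing" if "invoice" in ctx["text"].lower() else "technical"
    return {**ctx, "category": category}


def troubleshoot(ctx: dict) -> dict:
    return {**ctx, "notes": ctx.get("notes", []) + ["ran diagnostics"]}


def summarize(ctx: dict) -> dict:
    note_count = len(ctx.get("notes", []))
    return {**ctx, "summary": f"{ctx['category']}: {note_count} note(s)"}


# Roles are looked up by name, so the workflow itself contains no agent logic.
REGISTRY: dict[str, Agent] = {
    "classifier": classify,
    "troubleshooter": troubleshoot,
    "summarizer": summarize,
}


def run_workflow(config: list[dict], context: dict) -> dict:
    """Run the agents named in the config, in the declared order."""
    for entry in config:
        if entry.get("enabled", True):
            context = REGISTRY[entry["role"]](context)
    return context


workflow_config = [
    {"role": "classifier"},
    {"role": "troubleshooter", "enabled": False},
    {"role": "summarizer"},
]
```

Reordering entries, toggling `enabled`, or adding a new role to the list changes the workflow without editing `run_workflow`.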

Designing Reusable, Parameterized Playbook Components

Creating truly reusable playbook components requires a different mindset than traditional workflow design. Instead of optimizing for a single use case, you must think about how a component might be used across multiple contexts, industries, and business scenarios. This generalization process is where many organizations struggle, but mastering it compounds the return on automation investment, because each well-generalized component pays off in every playbook that reuses it. Learn more in our post on Designing Playbooks: Template Library for Common Enterprise Use Cases.

Identifying Reusable Patterns Across Functions

The first step in designing reusable components is recognizing patterns that transcend specific functions. While IT operations, finance, and support appear to have distinct needs, they share fundamental workflow patterns. Both IT incident response and finance exception handling require escalation logic. Both support workflows and finance approvals need notification sequencing. Both IT change management and finance policy enforcement require audit trails.

By identifying these cross-functional patterns, you create components that serve multiple purposes. An escalation component designed for IT incident management can be parameterized to handle finance approval escalations, support ticket escalations, or HR request escalations. The underlying logic - determining who to notify, when to escalate, and how to track escalation history - remains identical. Only the parameters change.

Effective pattern identification requires analyzing workflows across your organization and extracting common structures. Look for decision points that recur - approval gates, validation checks, priority assessments, and routing logic. Look for data transformations that appear in multiple contexts. Look for notification and communication sequences that follow similar patterns. These recurring structures become your reusable component library.

Parameterization Strategies for Maximum Flexibility

Once you've identified reusable patterns, the next challenge is parameterizing them effectively. Parameterization means replacing hardcoded values and logic with configurable inputs. However, not all parameterization is equally valuable. The goal is to expose the right parameters - those that change frequently across use cases - while keeping the underlying logic stable.

Consider an approval workflow component. Rather than hardcoding approval thresholds, approval chains, and notification templates, these become parameters. A finance team might configure thresholds of $5,000 for manager approval and $50,000 for director approval. An IT team might configure different chains for security changes versus infrastructure changes. A support team might configure approval chains based on customer tier. The same component serves all three functions because the approval logic remains constant while the parameters vary.

Effective parameterization typically involves several categories. Configuration parameters control behavior without changing logic - approval amounts, notification recipients, timeout durations. Data mapping parameters define how input data transforms into component inputs - which field contains the requester ID, which field contains the amount, which field contains the category. Conditional parameters enable or disable features - whether to require additional approval at certain thresholds, whether to send notifications, whether to log to audit systems.
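The three parameter categories can be sketched as a single configuration object. This is an illustrative shape, not a prescribed schema: `ApprovalConfig`, its field names, and the rule that a request needs every approver whose threshold it meets are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovalConfig:
    # Configuration parameters: (minimum amount, approver role) pairs.
    thresholds: tuple[tuple[float, str], ...]
    # Data mapping parameter: which input field carries the value to compare.
    amount_field: str = "amount"
    # Conditional parameter: feature switch a caller can turn off.
    send_notifications: bool = True


def required_approvers(request: dict, config: ApprovalConfig) -> list[str]:
    """Return every approver role whose threshold the request meets."""
    amount = request[config.amount_field]
    return [role for minimum, role in config.thresholds if amount >= minimum]


# Same logic, two configurations (values are illustrative).
finance = ApprovalConfig(thresholds=((5_000, "manager"), (50_000, "director")))
it_changes = ApprovalConfig(
    thresholds=((0, "change-advisory-board"),),
    amount_field="risk_score",
    send_notifications=False,
)
```

The function body never changes between teams; only the `ApprovalConfig` instance does.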

The key to successful parameterization is maintaining a clear boundary between what's configurable and what's not. If you expose too many parameters, components become overwhelming and difficult to use. If you expose too few, components lack flexibility. The sweet spot is exposing parameters that address 80% of the variation you observe across use cases, while keeping the core logic stable and testable.

Structuring Components for Composition

Reusable components must be structured with composition in mind. This means designing clear input and output contracts, handling errors gracefully, and providing visibility into component execution. Components should be atomic enough to be independently useful but substantial enough to provide real value when composed together.

Input contracts define what data a component expects and in what format. Rather than assuming a specific data structure, well-designed components accept inputs with clear schemas. A validation component might accept any data object along with a set of validation rules, enabling it to validate different data types across playbooks. Output contracts define what a component produces - success or failure status, transformed data, execution metadata. These contracts become the glue that enables seamless composition.
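As a rough illustration of such a contract, the validation component below accepts any record plus a list of rules and always returns the same output shape. The `Rule` tuple layout and the output keys are assumptions for this sketch.

```python
from typing import Any, Callable

# A rule is (field name, predicate, message shown when the predicate fails).
Rule = tuple[str, Callable[[Any], bool], str]


def validate(record: dict[str, Any], rules: list[Rule]) -> dict[str, Any]:
    """Check a record against caller-supplied rules; never raises on bad data."""
    errors = []
    for field_name, predicate, message in rules:
        if field_name not in record:
            errors.append(f"{field_name}: missing")
        elif not predicate(record[field_name]):
            errors.append(f"{field_name}: {message}")
    # Output contract: the same three keys regardless of what was validated.
    return {"valid": not errors, "errors": errors, "data": record}


# Hypothetical rules for an expense record.
expense_rules: list[Rule] = [
    ("amount", lambda value: value > 0, "must be positive"),
    ("category", lambda value: value in {"travel", "software"}, "unknown category"),
]
```

Because the rules are an input, the same component can validate tickets, change requests, or invoices by swapping the rule list.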

Error handling within components is critical for composable systems. Rather than failing silently or crashing, components should produce clear error outputs that downstream components can handle. A component might return a success flag, error code, error message, and suggested remediation steps. Downstream components can then decide how to handle different error scenarios - retry, escalate, skip, or fail the entire playbook.
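One way to make this concrete is a structured result plus a lookup that maps error codes to handling decisions. The error codes and the `ERROR_POLICY` table here are invented for the example; the point is only that the downstream decision is driven by data the component returns.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    CONTINUE = "continue"
    RETRY = "retry"
    ESCALATE = "escalate"
    SKIP = "skip"
    FAIL = "fail"


@dataclass(frozen=True)
class ComponentResult:
    success: bool
    error_code: Optional[str] = None
    error_message: Optional[str] = None
    remediation: Optional[str] = None


# Hypothetical error codes mapped to the handling a playbook author chose.
ERROR_POLICY = {
    "TIMEOUT": Action.RETRY,
    "APPROVER_NOT_FOUND": Action.ESCALATE,
    "OPTIONAL_DATA_MISSING": Action.SKIP,
}


def next_action(result: ComponentResult) -> Action:
    """Decide what the playbook does next; unknown errors fail the playbook."""
    if result.success:
        return Action.CONTINUE
    return ERROR_POLICY.get(result.error_code, Action.FAIL)
```

Defaulting unknown codes to `FAIL` is a deliberately conservative choice in this sketch: an error nobody planned for stops the playbook rather than being skipped.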

Metadata and observability are often overlooked but essential for composable playbooks. Components should emit execution metadata - how long they took, what data they processed, what decisions they made. This metadata enables monitoring, debugging, and optimization of playbook performance across use cases.
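A small wrapper is one possible way to attach execution metadata without touching component logic. The metadata keys (`component`, `duration_ms`, `input_fields`) are assumptions for illustration.

```python
import time
from typing import Callable

Component = Callable[[dict], dict]


def with_metadata(name: str, component: Component) -> Component:
    """Wrap a component so each call also reports what it did and how long it took."""

    def wrapped(payload: dict) -> dict:
        started = time.perf_counter()
        output = component(payload)
        elapsed_ms = (time.perf_counter() - started) * 1000
        return {
            "output": output,
            "metadata": {
                "component": name,
                "duration_ms": elapsed_ms,
                "input_fields": sorted(payload),
            },
        }

    return wrapped


# Hypothetical component: normalizes a currency code.
normalize_currency = with_metadata(
    "normalize-currency",
    lambda payload: {"currency": payload["currency"].upper()},
)
```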

Building Robust Testing and Versioning Pipelines

As composable playbooks become critical to your operations, the stakes for reliability increase dramatically. A playbook bug that affects a single use case is manageable. A bug in a reusable component that affects dozens of playbooks across multiple functions is a business crisis. This reality demands sophisticated testing and versioning strategies. Learn more in our post on Change Management for Automation: Engaging Stakeholders and Building AI Fluency.

Testing Strategies for Reusable Components

Testing composable components requires a different approach than testing monolithic workflows. You must test components in isolation, test them in various composition contexts, and test the entire playbook end-to-end. This multi-layered testing strategy ensures reliability across all use cases.

Unit testing focuses on individual components. Does the validation component correctly identify invalid data? Does the escalation component select the right approvers? Does the notification component format messages correctly? Unit tests should cover normal cases, edge cases, and error scenarios. They should be fast, deterministic, and not dependent on external systems.
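For illustration, here is what a unit test for a small component might look like using Python's standard `unittest` module. The `select_approver` component and its threshold are hypothetical stand-ins; the test covers one normal case, one edge case at the threshold, and one error case.

```python
import unittest


def select_approver(amount: float) -> str:
    """Hypothetical component under test: picks an approver role by amount."""
    if amount < 0:
        raise ValueError("amount must be non-negative")
    return "director" if amount >= 50_000 else "manager"


class SelectApproverTest(unittest.TestCase):
    def test_normal_case(self):
        self.assertEqual(select_approver(1_200), "manager")

    def test_edge_case_at_threshold(self):
        # The boundary value itself should route to the higher approver.
        self.assertEqual(select_approver(50_000), "director")

    def test_error_case(self):
        with self.assertRaises(ValueError):
            select_approver(-1)
```

The tests touch no external system, so they stay fast and deterministic.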

Integration testing verifies that components work correctly when composed together. When an approval component feeds its output to a notification component, does the data flow correctly? When multiple components access the same data store, do concurrency issues arise? Integration tests should cover the most common composition patterns and verify that component outputs match downstream component input expectations.

Playbook testing validates complete workflows end-to-end. This includes both happy-path scenarios where everything works correctly and failure scenarios where components encounter errors. Effective playbook testing uses realistic data, exercises all decision paths, and verifies that the final outcome matches expectations. Many organizations use synthetic test data or sandbox environments to run playbooks without affecting production systems.

Regression testing becomes increasingly important as you add new components and modify existing ones. When you update a component, you need to verify that existing playbooks using that component still function correctly. A comprehensive test suite that covers all playbooks using a modified component prevents regressions from reaching production.

Performance testing ensures that components perform acceptably across various data volumes and complexity levels. A component that executes in 100 milliseconds with small datasets might time out with large datasets. Performance testing identifies these limitations and helps you set realistic expectations about where components can be safely used.

Versioning Strategies for Safe Evolution

As your component library grows and business needs evolve, you'll inevitably need to modify components. Versioning strategies enable you to make these modifications safely without breaking existing playbooks. The key is allowing multiple versions of a component to coexist, with playbooks explicitly declaring which version they use.

Semantic versioning provides a clear framework for this. Major versions indicate breaking changes - modifications that require playbooks using the component to be updated. Minor versions indicate new functionality that's backward compatible. Patch versions indicate bug fixes. A component might exist in versions 1.0, 1.1, 1.2, and 2.0 simultaneously. Playbooks using version 1.2 continue working unchanged. Playbooks using version 2.0 benefit from new capabilities. Teams can migrate to version 2.0 on their own schedule.
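The coexistence of versions can be sketched with a small resolver. It assumes a playbook pins a major version and receives the newest compatible release within it; that pinning rule is one common convention, not something the approach requires.

```python
def parse(version: str) -> tuple[int, ...]:
    """Turn '1.10' into (1, 10) so versions compare numerically, not as text."""
    return tuple(int(part) for part in version.split("."))


def resolve(available: list[str], pinned_major: int) -> str:
    """Return the newest available version within the pinned major version."""
    candidates = [v for v in available if parse(v)[0] == pinned_major]
    if not candidates:
        raise LookupError(f"no version available for major {pinned_major}")
    return max(candidates, key=parse)


registry_versions = ["1.0", "1.1", "1.2", "2.0"]
```

Parsing into integers matters: compared as strings, "1.10" would sort before "1.2".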

Deprecation policies guide the lifecycle of component versions. A policy might state that minor versions are supported for one year, major versions for two years. This gives teams time to migrate while sparing you the burden of supporting every old version indefinitely. Clear deprecation announcements and migration guides help teams plan their upgrades.

Component registries serve as the central repository for your component library. A well-organized registry includes component documentation, version history, test results, performance characteristics, and usage examples. Teams can search the registry to discover components that might address their needs, understanding both capabilities and limitations before deciding to use them.

Dependency management becomes critical when components depend on other components. If component B depends on component A, you need to track this dependency and ensure compatibility across versions. A component registry should surface these dependencies, alerting teams when updates might affect dependent components.
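A registry could surface affected components with a simple graph walk. The sketch below assumes dependencies are stored as a mapping from each item to the items it depends on; the component and playbook names are made up.

```python
from collections import deque


def affected_by(changed: str, depends_on: dict[str, set[str]]) -> set[str]:
    """Return everything that directly or transitively depends on `changed`."""
    # Invert the graph: for each item, who depends on it?
    dependents: dict[str, set[str]] = {}
    for item, dependencies in depends_on.items():
        for dependency in dependencies:
            dependents.setdefault(dependency, set()).add(item)

    # Breadth-first walk outward from the changed item.
    seen: set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, ()):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen


# Hypothetical dependency data.
graph = {
    "notify-approver": {"format-message"},
    "approve-expense": {"notify-approver", "validate-expense"},
    "expense-playbook": {"approve-expense"},
}
```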

Continuous Integration and Deployment for Playbooks

Treating playbook components like software code enables you to apply software engineering best practices. Continuous integration pipelines automatically test components whenever they're modified. Continuous deployment pipelines automatically promote tested components to production. This automation reduces manual effort, catches bugs early, and accelerates the pace at which you can safely evolve your automation.

A typical CI/CD pipeline for playbook components includes several stages. First, automated unit tests verify the component works in isolation. Second, integration tests verify the component works with other components. Third, playbook tests verify end-to-end workflows function correctly. Fourth, performance tests ensure the component meets performance requirements. Fifth, security tests check for vulnerabilities or compliance violations. Only components that pass all stages proceed to production.
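The gating behaviour of these stages can be sketched as an ordered list of checks that stops at the first failure. Each check here is a placeholder returning a boolean; in practice each would invoke a real test runner.

```python
from typing import Callable

Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages in order; return (promote?, names of stages that passed)."""
    passed: list[str] = []
    for name, check in stages:
        if not check():
            # Stop at the first failure so later stages never run on a bad build.
            return False, passed
        passed.append(name)
    return True, passed


# Placeholder checks standing in for real test suites.
example_stages: list[Stage] = [
    ("unit", lambda: True),
    ("integration", lambda: True),
    ("playbook", lambda: True),
    ("performance", lambda: False),
    ("security", lambda: True),
]
```

With the placeholder values above, the pipeline refuses promotion after the performance stage and never reaches the security stage.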

Staging environments enable you to test playbooks in a production-like environment before deploying to actual production systems. This catches issues that might not appear in development environments - concurrency problems, data volume challenges, integration issues with production systems. Many organizations use a canary deployment approach, initially directing a small percentage of traffic to new components while monitoring for issues before rolling out to 100%.
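A canary split is often implemented by hashing a stable identifier into a bucket. The sketch below assumes each execution has a string `request_id`; that name and the 100-bucket scheme are illustrative choices.

```python
import hashlib


def use_canary(request_id: str, canary_percent: int) -> bool:
    """Deterministically send roughly `canary_percent`% of requests to the new version."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # Map the hash onto buckets 0..99; the same ID always lands in the same bucket.
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < canary_percent
```

Hashing rather than random sampling keeps the decision stable, so a given request does not flip between the old and new component on retry.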

Organizations that implement robust testing and versioning pipelines for their playbook components gain the confidence to innovate rapidly. When you trust your automation, you can reconfigure it frequently without fear of breaking critical business processes.

Cross-Functional Playbook Reuse: IT Ops, Finance, and Support

The true power of composable playbooks emerges when you move beyond single-function use cases and enable reuse across IT operations, finance, customer support, and other critical functions. This cross-functional reuse multiplies the return on investment in building reusable components and creates organizational momentum toward comprehensive automation.

IT Operations Automation Patterns

IT operations encompasses incident management, change management, asset management, and capacity planning. Each of these domains contains workflows that benefit from composable playbooks. Incident management playbooks handle detection, classification, escalation, resolution, and post-incident review. Change management playbooks handle request submission, approval, scheduling, execution, and rollback. Asset management playbooks handle procurement, deployment, lifecycle tracking, and decommissioning.

Core components that IT operations teams build and reuse include escalation logic that determines who should be notified based on incident severity, impact, and service criticality. Notification components format and send alerts through various channels - email, SMS, chat systems, incident management platforms. Approval components implement multi-level approval chains for changes and requests. Status tracking components maintain audit trails and provide visibility into workflow progress.

A particularly valuable reusable component in IT operations is the decision logic for incident routing. Rather than hardcoding which team handles which incident type, parameterized routing logic accepts incident characteristics and determines the appropriate team. The same component works for security incidents, infrastructure incidents, application incidents, and network incidents - each configured differently but using identical underlying logic.
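Parameterized routing of this kind might be expressed as an ordered rule table. The `RoutingRule` shape, the attribute names, and the team names are assumptions for the sketch; the first matching rule wins.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutingRule:
    match: dict  # incident attribute -> required value
    team: str


def route(incident: dict, rules: list[RoutingRule], default_team: str) -> str:
    """Return the team of the first rule whose conditions all match the incident."""
    for rule in rules:
        if all(incident.get(key) == value for key, value in rule.match.items()):
            return rule.team
    return default_team


# Hypothetical routing table; more specific rules are listed first.
incident_rules = [
    RoutingRule({"type": "security", "severity": "critical"}, "security-on-call"),
    RoutingRule({"type": "security"}, "security-team"),
    RoutingRule({"type": "network"}, "network-ops"),
]
```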

IT operations teams also benefit from composable playbooks for self-healing automation. When certain conditions arise - disk space running low, CPU utilization exceeding thresholds, failed backups - playbooks can automatically initiate remediation. These playbooks often combine detection components, decision components that determine if automatic remediation is safe, action components that execute the remediation, and verification components that confirm the issue is resolved.
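The detect, decide, act, verify sequence can be sketched as four pluggable callables. The in-memory `state` dictionary and the 90% disk threshold below are stand-ins for real monitoring and remediation systems.

```python
from typing import Callable


def self_heal(
    detect: Callable[[], bool],
    is_safe: Callable[[], bool],
    remediate: Callable[[], None],
    verify: Callable[[], bool],
) -> str:
    """Run one remediation attempt and report the outcome."""
    if not detect():
        return "healthy"
    if not is_safe():
        # Automatic remediation is not safe here, so hand off to a human.
        return "escalated"
    remediate()
    return "resolved" if verify() else "escalated"


# Simulated host state standing in for a real monitoring system.
state = {"disk_used_pct": 95, "maintenance_window": True}

outcome = self_heal(
    detect=lambda: state["disk_used_pct"] > 90,
    is_safe=lambda: state["maintenance_window"],
    remediate=lambda: state.update(disk_used_pct=60),
    verify=lambda: state["disk_used_pct"] <= 90,
)
```

Swapping in different callables yields a CPU or failed-backup playbook without changing `self_heal`.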

Finance and Compliance Automation Patterns

Finance functions rely heavily on structured workflows with clear approval chains, audit requirements, and compliance controls. Expense approval playbooks route expenses through approval chains based on amount and category. Invoice processing playbooks extract data from invoices, match them to purchase orders, verify accuracy, and route for payment. Reconciliation playbooks compare data across systems and flag discrepancies for investigation.

Reusable components in finance automation include approval chain logic that routes requests based on amount thresholds and requester attributes. Validation components verify that data meets compliance requirements. Audit trail components record all actions for compliance and investigation purposes. Notification components alert stakeholders of approvals, rejections, and exceptions.

A powerful finance automation pattern involves parameterized policy enforcement. Rather than hardcoding approval rules, policies become data that playbooks interpret. When policies change - perhaps new thresholds for automatic approval, new approval chains, new compliance requirements - you update policy data rather than modifying playbooks. This separation of policy from implementation enables finance teams to adapt to regulatory changes and business policy updates without IT involvement.
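To illustrate policy as data, the sketch below stores a policy as JSON and has the playbook logic interpret it. The JSON keys (`auto_approve_below`, `chain`, `min`, `role`) and the amounts are invented for the example.

```python
import json

# Two revisions of a hypothetical policy; only the data differs.
POLICY_V1 = """
{"auto_approve_below": 100,
 "chain": [{"min": 0, "role": "manager"}, {"min": 50000, "role": "director"}]}
"""
POLICY_V2 = """
{"auto_approve_below": 500,
 "chain": [{"min": 0, "role": "manager"}, {"min": 50000, "role": "director"}]}
"""


def evaluate(amount: float, policy_json: str) -> dict:
    """Interpret the policy document for one request; no rule is hardcoded here."""
    policy = json.loads(policy_json)
    if amount < policy["auto_approve_below"]:
        return {"decision": "auto-approved", "approvers": []}
    approvers = [step["role"] for step in policy["chain"] if amount >= step["min"]]
    return {"decision": "needs-approval", "approvers": approvers}
```

Moving from `POLICY_V1` to `POLICY_V2` raises the automatic approval limit without any change to `evaluate`.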

Finance teams also leverage composable playbooks for predictive analytics and anomaly detection. Playbooks can analyze transaction patterns, flag unusual activity, and route suspicious transactions for investigation. Because these playbooks are composable, teams can quickly adjust detection rules, add new data sources, or modify routing logic as fraud patterns evolve.

Customer Support and Service Delivery Patterns

Customer support organizations handle ticket routing, escalation, knowledge base recommendations, and customer communication. Ticket routing playbooks classify incoming tickets and route them to the appropriate team based on category, urgency, and customer tier. Escalation playbooks identify tickets that aren't being resolved quickly and escalate them to senior support staff. Knowledge base playbooks search for relevant articles and recommend them to customers.

Reusable components in support automation include classification logic that categorizes tickets based on content and metadata. Routing logic determines which team should handle each ticket. Priority assessment logic evaluates urgency based on customer tier, issue impact, and SLA requirements. Notification components alert support staff of new tickets and escalations. Communication components format and send responses to customers.

Support teams benefit significantly from composable playbooks for handling common scenarios. A high-volume support organization might have playbooks for password resets, account unlocks, billing inquiries, and product troubleshooting. Rather than building these independently, teams build reusable components - authentication verification, account lookup, permission checking, action execution - and compose them into playbooks for different scenarios.
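A rough sketch of that composition: each component is a function from context to context, and a playbook is an ordered chain of them. The step functions and the `halted` convention are assumptions for illustration.

```python
from typing import Callable

Step = Callable[[dict], dict]


def compose(*steps: Step) -> Step:
    """Chain components into a playbook; stop early if a step halts the flow."""

    def playbook(context: dict) -> dict:
        for step in steps:
            context = step(context)
            if context.get("halted"):
                break
        return context

    return playbook


# Hypothetical reusable components.
def verify_identity(ctx: dict) -> dict:
    if not ctx.get("identity_verified"):
        return {**ctx, "halted": True, "reason": "identity not verified"}
    return ctx


def lookup_account(ctx: dict) -> dict:
    return {**ctx, "account_id": f"acct-{ctx['user']}"}


def reset_password(ctx: dict) -> dict:
    return {**ctx, "action": "password-reset-sent"}


def unlock_account(ctx: dict) -> dict:
    return {**ctx, "action": "account-unlocked"}


# Two playbooks sharing the first two components.
password_reset = compose(verify_identity, lookup_account, reset_password)
account_unlock = compose(verify_identity, lookup_account, unlock_account)
```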

A particularly valuable pattern in support automation involves multi-agent orchestration for complex issues. When a customer issue requires expertise from multiple teams - perhaps a billing issue involving product functionality - composable playbooks can coordinate between agents. A coordinating agent handles the initial classification, routes to the appropriate specialist, coordinates information sharing, and ensures the customer receives a unified response. Because this coordination logic is composable, it works across different issue types and team structures.

Enabling Cross-Functional Reuse Through Abstraction

The key to successful cross-functional reuse is abstraction - building components that work across functions by focusing on underlying patterns rather than function-specific details. An approval component that works across IT, finance, and support doesn't need to know whether it's approving a change, an expense, or a refund. It simply manages approval chains, tracks approvals, and notifies stakeholders.

This abstraction requires careful component design. Components should accept inputs that describe the approval scenario without assuming specific business context. Rather than a component designed for "expense approval," you build a component for "multi-level approval with threshold-based routing" that works for any approval scenario. The parameterization then specializes this generic component for specific use cases.

Cross-functional reuse also requires organizational alignment. Teams need to agree on standards for how components are built, documented, and tested. They need shared component registries where teams can discover reusable components. They need governance processes that ensure components meet security and compliance requirements. Organizations that invest in these shared structures dramatically accelerate the pace at which they can build and deploy new automation.

Implementation Best Practices and Governance Frameworks

Successfully deploying composable agent playbooks at scale requires more than technical architecture. It requires governance frameworks that ensure playbooks remain secure, compliant, and aligned with business objectives. It requires operational practices that enable teams to build and deploy playbooks efficiently. It requires organizational structures that support collaboration and knowledge sharing.

Governance and Control Mechanisms

As composable playbooks become central to your operations, the risk of misconfigured playbooks affecting critical processes increases. Governance frameworks establish controls that prevent problems while enabling innovation. These frameworks typically include several layers.

Component governance ensures that components meet organizational standards before they're approved for use. This might include security reviews to ensure components don't introduce vulnerabilities, compliance reviews to ensure components meet regulatory requirements, and performance reviews to ensure components perform acceptably. A component approval process prevents substandard components from entering your library.

Playbook governance ensures that playbooks are properly designed, tested, and approved before they're deployed to production. This includes design reviews that verify playbooks implement processes correctly, security reviews that identify potential risks, and testing verification that playbooks have been thoroughly tested. A playbook approval process prevents buggy or risky playbooks from affecting production operations.

Access controls determine who can create, modify, and deploy playbooks and components. Different organizations implement these differently - some allow any employee to create playbooks, with approval gates before production deployment. Others restrict creation to automation specialists, with business stakeholders providing requirements. The right approach depends on your organization's risk tolerance and automation maturity.

Audit and logging ensure that you can track what playbooks do, who modified them, and when changes occurred. Comprehensive audit trails support compliance investigations, help debug issues, and provide visibility into automation behavior. Many organizations integrate playbook audit logs with centralized security information and event management systems.

Change management processes govern how playbook modifications are made and deployed. Rather than allowing ad hoc changes, formal change management ensures modifications are reviewed, tested, and approved before deployment. This prevents accidental breakage and ensures stakeholders are aware of changes that might affect them.

Operational Practices for Efficient Playbook Development

Beyond governance, operational practices determine how efficiently teams can build and deploy playbooks. These practices include standards for how playbooks are designed and documented, tools and platforms that support playbook development, and processes that enable teams to collaborate effectively.

Playbook design standards establish conventions for how components are named, how data flows between components, and how errors are handled. When teams follow consistent standards, playbooks become easier to understand, modify, and debug. Standards might specify naming conventions - perhaps components are named as "verb-noun" like "validate-expense" or "route-ticket." Standards might specify how data is transformed between components or how errors propagate through playbooks.
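A naming standard like this is easy to enforce automatically. The pattern below is one possible reading of "verb-noun": lowercase words joined by hyphens, at least two parts. It checks shape only and cannot tell whether the first word is actually a verb.

```python
import re

# Lowercase word, then one or more hyphen-separated lowercase/digit parts.
NAME_PATTERN = re.compile(r"^[a-z]+(?:-[a-z0-9]+)+$")


def is_valid_name(name: str) -> bool:
    """Return True if the component name follows the hyphenated convention."""
    return NAME_PATTERN.match(name) is not None
```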

Documentation practices ensure that playbooks and components are understandable by teams who didn't build them. This includes high-level documentation describing what a playbook does, how it's configured, and what it requires. It includes detailed documentation of each component - what it does, what inputs it accepts, what outputs it produces, and what errors it might encounter. It includes examples of how to use components in different contexts.

Development environments enable teams to build and test playbooks without affecting production systems. A typical development environment includes test data, mock external systems, and the ability to run playbooks without triggering real-world actions. Teams can develop confidently knowing their mistakes won't affect actual customers or operations.

Collaboration tools facilitate teamwork on playbook development. Version control systems track changes to playbooks and components, enabling multiple team members to work together without conflicts. Code review processes ensure quality and knowledge sharing. Shared component libraries make it easy for teams to discover and reuse existing components rather than building duplicates.

Monitoring and alerting systems provide visibility into playbook behavior in production. Teams can see how often playbooks run, how long they take, what errors they encounter, and what actions they take. This visibility enables teams to optimize playbooks, identify and fix bugs, and understand the business impact of their automation.

Building a Composable Playbook Platform

Many organizations eventually realize that managing composable playbooks requires dedicated platform capabilities. Rather than relying on general-purpose workflow tools, specialized playbook platforms provide features specifically designed for composable automation.

A playbook platform should provide a component library with search and discovery capabilities. Teams should be able to find components by name, by function, or by tag. The platform should show component documentation, usage examples, and which playbooks use each component. When a component is updated, the platform should identify all affected playbooks.

The platform should support playbook design through visual or code-based interfaces. Visual interfaces enable non-technical users to compose playbooks by connecting components. Code-based interfaces enable developers to build complex playbooks with precise control. The best platforms support both approaches, enabling different teams to work in their preferred style.

The platform should provide testing capabilities that enable teams to test playbooks before deployment. This includes the ability to run playbooks with test data, mock external systems, and verify that playbooks produce expected outputs. Testing should be fast so teams can test frequently during development.

The platform should provide deployment capabilities that manage the promotion of playbooks from development through testing to production. Deployments should be traceable and reversible - if a playbook causes problems, you should be able to quickly roll back to the previous version. The platform should enforce governance policies - requiring approvals, running tests, checking compliance - before allowing deployment.
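The combination of gated deployment and rollback can be sketched with a simple version history. The class name, the two boolean gates, and the in-memory list are illustrative assumptions; a real platform would persist this state and record who approved what.

```python
from typing import Optional


class DeploymentHistory:
    """Tracks deployed versions of one playbook so the latest can be reverted."""

    def __init__(self) -> None:
        self._versions: list[str] = []

    @property
    def active(self) -> Optional[str]:
        return self._versions[-1] if self._versions else None

    def deploy(self, version: str, approved: bool, tests_passed: bool) -> None:
        # Governance gate: refuse anything that skipped approval or testing.
        if not (approved and tests_passed):
            raise PermissionError(f"{version} did not pass governance checks")
        self._versions.append(version)

    def rollback(self) -> str:
        """Revert to the previously deployed version and return it."""
        if len(self._versions) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._versions.pop()
        return self._versions[-1]
```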

The platform should provide observability capabilities that give visibility into playbook execution. Teams should see how often playbooks run, what data they process, what decisions they make, and what errors they encounter. This observability enables optimization and debugging.

The platform should integrate with your existing systems - your incident management system, your approval system, your notification systems, your data sources. Rather than forcing teams to manually move data between systems, the platform should handle these integrations seamlessly.

Organizations that build dedicated playbook platforms gain significant competitive advantages. A well-designed platform accelerates playbook development, improves quality through automated testing and governance, and enables rapid scaling of automation across the organization.

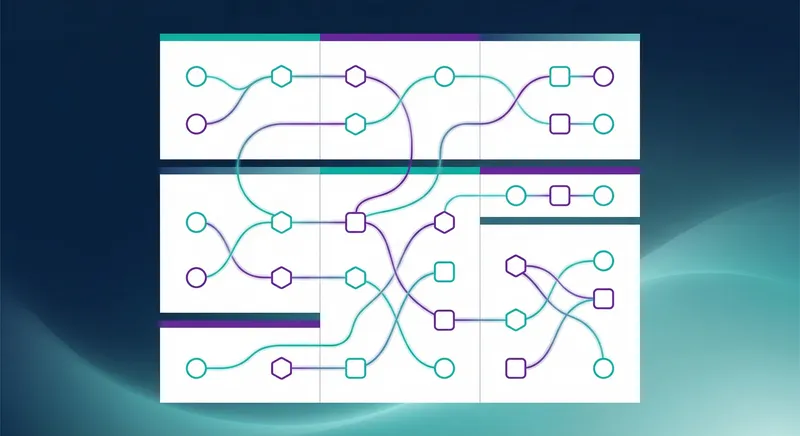

Advanced Patterns: Multi-Agent Orchestration and Dynamic Composition

As your composable playbook practice matures, you'll encounter scenarios that require more sophisticated patterns. Multi-agent orchestration involves coordinating multiple AI agents to handle complex workflows that no single agent can address alone. Dynamic composition involves constructing playbooks at runtime based on conditions and context.

Multi-Agent Orchestration Patterns

Complex business processes often require expertise from multiple specialized agents. In customer support, one agent might handle initial ticket classification while another handles technical troubleshooting and a third handles billing questions. In finance, one agent might extract invoice data, another might match invoices to purchase orders, and a third might resolve exceptions. Rather than routing to human specialists sequentially, multi-agent orchestration coordinates these agents to work together efficiently.

Effective multi-agent orchestration requires clear role definition. Each agent should have a well-defined responsibility and expertise area. The orchestration logic should determine which agents are needed for a given scenario, in what sequence they should participate, and how they should communicate with each other. This logic becomes a reusable component that works across different multi-agent scenarios.

Agent communication patterns are critical for multi-agent orchestration. Agents might communicate through shared context - all agents can see and update a shared data structure. Agents might communicate through explicit message passing - one agent produces output that becomes input for another agent. Agents might communicate through a coordinator agent that manages the overall workflow. The best approach depends on the complexity of the workflow and the independence of the agents.
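To make the difference between the first two patterns concrete, here is a small sketch in which the same two hypothetical agents (a classifier and a router) are wired up once through a shared context and once through message passing. The agent logic is deliberately trivial and invented for illustration.

```python
# Pattern 1: shared context. Every agent reads from and writes to one dict.
def classify_shared(context: dict) -> None:
    text = context["text"].lower()
    context["category"] = "billing" if "invoice" in text else "technical"


def route_shared(context: dict) -> None:
    context["queue"] = context["category"] + "_queue"


def run_with_shared_context(text: str) -> dict:
    context = {"text": text}
    for agent in (classify_shared, route_shared):
        agent(context)
    return context


# Pattern 2: message passing. Each agent sees only the previous agent's output.
def classify_message(text: str) -> dict:
    return {"category": "billing" if "invoice" in text.lower() else "technical"}


def route_message(message: dict) -> dict:
    return {"queue": message["category"] + "_queue"}


def run_with_message_passing(text: str) -> dict:
    return route_message(classify_message(text))
```

The shared-context version keeps the full history visible to every agent, while the message-passing version keeps agents isolated from data they do not need.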

Handling disagreement between agents is an important consideration. When multiple agents analyze the same data, they might reach different conclusions. Orchestration logic might require consensus - all agents must agree before proceeding. It might implement voting - the majority opinion prevails. It might implement escalation - when agents disagree, route to human judgment. The approach should reflect the criticality of the decision and the cost of errors.
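The three options above (consensus, voting, escalation) can be sketched as a single resolution function. The strategy names and the `escalate_to_human` sentinel are assumptions chosen for this example; here, any case the chosen strategy cannot settle falls through to escalation.

```python
from collections import Counter

ESCALATE = "escalate_to_human"  # hypothetical sentinel for human review


def resolve(verdicts: dict[str, str], strategy: str) -> str:
    """Combine per-agent verdicts into one decision, or escalate."""
    if not verdicts:
        raise ValueError("at least one agent verdict is required")
    top_verdict, top_count = Counter(verdicts.values()).most_common(1)[0]
    if strategy == "consensus":
        # Proceed only if every agent reached the same conclusion.
        return top_verdict if top_count == len(verdicts) else ESCALATE
    if strategy == "majority":
        # Proceed only if more than half of the agents agree.
        return top_verdict if top_count > len(verdicts) / 2 else ESCALATE
    raise ValueError(f"unknown strategy: {strategy}")
```

A high-stakes decision such as a payment release might use `"consensus"`, while a low-cost decision such as ticket tagging might accept `"majority"`.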

Multi-agent orchestration also requires careful attention to performance. When multiple agents process sequentially, their execution times add up, so total latency grows with every agent in the chain. Orchestration logic should identify opportunities for parallel execution - when multiple agents can work independently, they should run concurrently. This requires careful dependency analysis to ensure agents have the data they need before they start executing.
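One possible way to do this dependency analysis is a topological sort that groups agents into "waves", where every agent in a wave depends only on earlier waves and can therefore run concurrently. The sketch below uses Python's standard `graphlib` and `concurrent.futures`; the agent names and the callable signature (each agent receives the results gathered so far) are assumptions for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter
from typing import Callable


def execution_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group agents into waves; each agent maps to the agents it depends on."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


def run_agents(
    dependencies: dict[str, set[str]],
    agents: dict[str, Callable[[dict], object]],
) -> dict:
    """Run each wave concurrently, passing earlier results to later agents."""
    results: dict = {}
    with ThreadPoolExecutor() as pool:
        for wave in execution_waves(dependencies):
            snapshot = dict(results)  # agents read a copy, never the live dict
            futures = {name: pool.submit(agents[name], snapshot) for name in wave}
            results.update({name: f.result() for name, f in futures.items()})
    return results
```

In a support scenario, for example, troubleshooting and billing agents that both depend only on classification would land in the same wave and run side by side.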

Dynamic Playbook Composition

In many scenarios, the optimal playbook structure depends on context and conditions that aren't known until runtime. A support playbook might need different components depending on the customer tier, the issue category, and the current system load. Rather than creating separate playbooks for every combination of conditions, dynamic composition enables you to construct playbooks at runtime.

Dynamic composition requires a playbook builder component that can construct playbooks based on input parameters. This builder accepts a scenario description and produces a playbook tailored to that scenario. The builder might select which components to include, in what sequence to arrange them, and what parameters to configure for each component.

Rules or policies guide dynamic composition. A policy might state that high-priority issues should include manual review steps while low-priority issues should be handled entirely by agents. A policy might state that enterprise customers should include escalation to human specialists while self-service customers should be handled by agents alone. These policies enable the composition builder to make intelligent decisions about playbook structure.
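A minimal sketch of a policy-driven builder follows, using the two example policies from the text. The component names, the scenario fields (`priority`, `customer_tier`), and the fixed base steps are hypothetical; the point is only that policies are data the builder evaluates at runtime.

```python
from typing import Callable

# Each policy pairs a condition on the scenario with the step it adds.
Policy = tuple[Callable[[dict], bool], str]

POLICIES: list[Policy] = [
    (lambda s: s.get("priority") == "high", "manual_review"),
    (lambda s: s.get("customer_tier") == "enterprise", "escalate_to_specialist"),
]


def build_playbook(scenario: dict, policies: list[Policy] = POLICIES) -> list[str]:
    """Assemble an ordered list of component names for this scenario."""
    steps = ["classify_issue", "agent_resolution"]
    for applies, step in policies:
        if applies(scenario):
            steps.append(step)
    steps.append("close_ticket")
    return steps
```

Adding a new rule in this design means appending one entry to `POLICIES` rather than authoring another playbook variant.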

Dynamic composition requires robust error handling. If the composition builder encounters an unexpected scenario or if a dynamically constructed playbook fails, the system needs to gracefully handle these failures. This might involve falling back to a default playbook, escalating to human intervention, or logging the scenario for analysis.
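The fallback behavior might be wrapped around any builder along these lines. This is a sketch under assumptions: the default playbook contents are invented, and the builder is any callable that takes a scenario and returns a list of steps.

```python
import logging
from typing import Callable

logger = logging.getLogger("playbook.composition")

# Hypothetical safe default: classify, then hand off to a person.
DEFAULT_PLAYBOOK = ["classify_issue", "escalate_to_human"]


def compose_with_fallback(
    builder: Callable[[dict], list[str]], scenario: dict
) -> tuple[list[str], bool]:
    """Return (playbook, used_fallback); never raise on builder failure."""
    try:
        playbook = builder(scenario)
        if not playbook:
            raise ValueError("builder returned an empty playbook")
        return playbook, False
    except Exception:
        # Log the scenario so unexpected cases can be analyzed later.
        logger.exception("Composition failed for scenario %r; using default", scenario)
        return list(DEFAULT_PLAYBOOK), True
```

Returning the `used_fallback` flag alongside the playbook lets callers count how often composition fails, which is itself a useful signal.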

Machine learning can enhance dynamic composition by learning which playbook structures work best for different scenarios. Over time, the system learns that certain combinations of components produce better outcomes for specific conditions. This learning enables the system to continuously optimize playbook composition.

Measuring Success: ROI and Continuous Improvement

Composable agent playbooks represent a significant investment in automation infrastructure. To justify this investment and guide continuous improvement, you need robust measurement frameworks that track both the efficiency gains and the business impact of your automation.

Efficiency metrics measure how much work automation accomplishes. Throughput metrics track how many workflows playbooks process. Latency metrics track how quickly playbooks complete. Automation rate metrics track what percentage of processes are handled by playbooks versus human intervention. These metrics demonstrate the scale of automation and how it's growing over time.
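As an illustration, the three efficiency metrics could be derived from a log of workflow runs as sketched below. The record fields (`duration_s`, `handled_by`) and the choice of median for latency are assumptions; your platform's execution logs will have their own schema.

```python
from statistics import median


def efficiency_metrics(runs: list[dict], window_hours: float) -> dict[str, float]:
    """Summarize throughput, latency, and automation rate for a time window."""
    if not runs or window_hours <= 0:
        raise ValueError("need at least one run and a positive time window")
    automated = sum(1 for run in runs if run["handled_by"] == "playbook")
    return {
        "throughput_per_hour": len(runs) / window_hours,
        "median_latency_s": median(run["duration_s"] for run in runs),
        "automation_rate": automated / len(runs),
    }
```

Tracking these values per playbook over successive windows shows whether automation is actually growing or merely holding steady.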

Quality metrics measure how well playbooks perform their intended functions. Accuracy metrics track whether playbooks make correct decisions. Error rate metrics track how often playbooks fail or produce incorrect outputs. Rework metrics track how often playbook outputs require human correction. These metrics ensure that automation benefits don't come at the cost of quality.

Cost metrics measure the financial impact of automation. Labor cost savings track how much human effort automation eliminates. Operational cost savings track reductions in system costs, tool costs, and infrastructure costs. Development cost metrics track the cost of building and maintaining automation. By comparing savings against costs, you can calculate return on investment and justify continued investment in automation.
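The comparison of savings against costs reduces to a simple ratio: net benefit divided by total cost. The sketch below assumes all four inputs cover the same period and currency; the parameter names are illustrative.

```python
def automation_roi(
    labor_savings: float,
    operational_savings: float,
    development_cost: float,
    maintenance_cost: float,
) -> float:
    """Return ROI as a fraction: (total savings - total cost) / total cost."""
    total_savings = labor_savings + operational_savings
    total_cost = development_cost + maintenance_cost
    if total_cost <= 0:
        raise ValueError("total cost must be positive to compute ROI")
    return (total_savings - total_cost) / total_cost
```

For instance, savings of 350,000 against costs of 250,000 give an ROI of 0.4, or 40%; a negative result means the automation has not yet paid for itself.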

Business impact metrics connect automation to business outcomes. Revenue impact metrics track whether automation enables faster sales cycles or higher conversion rates. Customer satisfaction metrics track whether automation improves or degrades customer experience. Compliance metrics track whether automation reduces compliance violations and audit findings. Risk metrics track whether automation reduces operational risks or introduces new risks.

Continuous improvement processes use these metrics to guide evolution of your playbook library. Metrics might reveal that certain playbooks have high error rates, signaling the need for improvement. Metrics might reveal that certain components are used frequently, indicating opportunities to optimize them. Metrics might reveal that certain playbooks are rarely used, raising questions about whether they're still needed.

Feedback loops from teams using playbooks provide qualitative insights that complement quantitative metrics. Support teams might report that a playbook is too rigid and needs additional parameterization. Finance teams might report that a playbook doesn't handle certain edge cases. IT teams might report that a playbook is too slow. This feedback guides prioritization of improvements.

Benchmarking against industry standards and competitors helps you understand whether your automation is competitive. If industry leaders achieve 85% automation rates while you're at 60%, that signals opportunity. If competitors achieve faster resolution times through automation, that indicates areas to focus your efforts. Benchmarking helps you set realistic targets and identify areas for improvement.

Conclusion: Transforming Operations Through Composable Automation

The evolution from rigid, monolithic automation to composable agent playbooks represents a fundamental shift in how enterprises approach operational transformation. Rather than building automation systems that are difficult to adapt, you're building modular, parameterized systems that evolve with your business. Rather than requiring weeks to reconfigure automation when business needs change, you can reconfigure playbooks in hours. Rather than maintaining separate automation systems for IT operations, finance, and support, you're building a unified automation platform that serves all functions.

The benefits compound as your composable playbook practice matures. As an illustration, early playbooks might achieve 30% automation of a specific process. As you build reusable components, subsequent playbooks might achieve 60% automation because they leverage existing components. As your component library grows and teams become proficient with composable patterns, new playbooks might achieve 80%+ automation because they are primarily compositions of proven components with minimal custom logic. This acceleration of automation deployment is the true power of composable playbooks.

However, realizing these benefits requires more than adopting new tools. It requires organizational commitment to building reusable components, establishing governance frameworks that maintain quality and compliance, and investing in platforms and practices that enable efficient playbook development. It requires breaking down silos between functions and establishing shared component libraries that serve the entire organization. It requires measuring success and continuously improving your automation systems based on what you learn.

At A.I. PRIME, we understand the complexity of implementing composable agent playbooks at scale. Our Workflow Design and Automation Blueprinting services help enterprises architect playbook systems that are flexible, scalable, and aligned with your business objectives. Our Agent Network Deployment capabilities enable you to deploy sophisticated multi-agent orchestration patterns that handle complex workflows. Our Governance Integration services establish the controls and processes that ensure your automation remains secure and compliant as it scales.

We work with you to identify high-impact automation opportunities, design reusable components that serve multiple functions, establish testing and versioning pipelines that ensure quality, and build the organizational practices that enable efficient playbook development. Our ROI Tracking Implementation services help you measure the business impact of your automation investments, guiding decisions about where to focus future efforts. Our Continuous Enablement Support ensures your teams have the training, tools, and guidance they need to evolve your automation systems as your business evolves.

If your organization is struggling with rigid automation systems, slow time-to-value from automation investments, or silos between automation efforts across functions, composable agent playbooks offer a path forward. We invite you to explore how composable automation can transform your operations, accelerate your digital transformation, and drive competitive advantage through operational agility. Contact our team to discuss your automation challenges and discover how we can help you build the flexible, scalable automation systems your enterprise needs.
