A.I. PRIME - Article

Governance and Security for Autonomous Agents in Regulated Industries: A Practical Framework

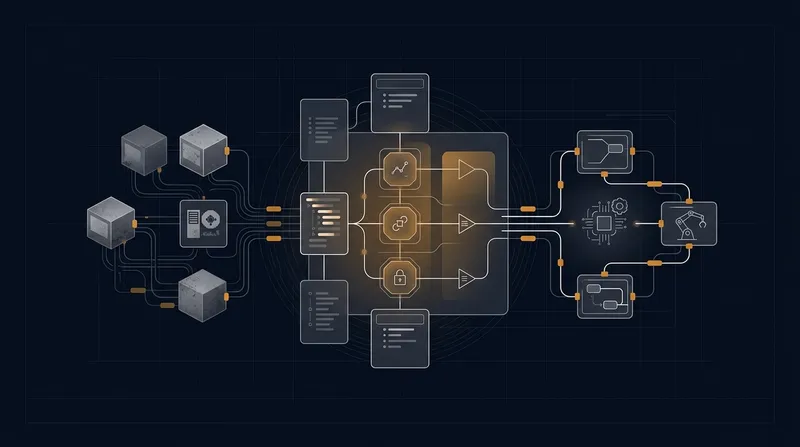

Autonomous agents are reshaping how enterprises operate - automating workflows, accelerating decision-making, and unlocking efficiency gains that were unimaginable just a few years ago. Yet in regulated industries like healthcare, finance, and telecommunications, deploying these systems creates a fundamental tension: how do you harness the power of autonomous decision-making while maintaining the control, transparency, and accountability that regulators and customers demand? Many organizations rush to implement autonomous agents without establishing the governance frameworks necessary to operate safely and compliantly. The result is exposure to privacy breaches, audit failures, regulatory fines, and reputational damage. This guide explores the governance and security considerations that B2B teams must address when deploying autonomous agents in regulated environments, and provides threat models, control frameworks, and governance strategies to protect your organization while capturing the benefits of AI-driven automation.

Understanding the Regulatory Landscape for Autonomous Agent Deployments

Regulated industries operate under strict compliance requirements designed to protect consumers, ensure fair competition, and maintain systemic stability. When autonomous agents enter this landscape, they don't simplify compliance - they complicate it. Healthcare organizations must navigate HIPAA privacy rules and FDA oversight of AI-driven medical devices. Financial institutions face SEC and FINRA requirements around algorithmic trading, along with fair-lending rules such as the Equal Credit Opportunity Act for automated lending decisions. Telecommunications providers must comply with FCC regulations, TCPA rules governing automated communications, and emerging AI governance mandates. Each sector brings unique regulatory expectations around transparency, auditability, and human oversight. Learn more in our post on Ethical and Security Considerations for Autonomous Agents in Regulated Industries.

The challenge intensifies because regulatory frameworks are evolving faster than agent technologies mature. The EU's AI Act introduces risk-based classification for AI systems, with high-risk applications requiring rigorous governance and ongoing monitoring. The SEC's guidance on AI and algorithmic decision-making emphasizes the need for explainability and bias testing. Meanwhile, healthcare regulators increasingly scrutinize the clinical validity and safety of AI-assisted diagnostic systems. Enterprise teams deploying autonomous agents must therefore build governance frameworks that exceed current minimum compliance requirements - anticipating where regulation is heading and embedding controls that make future compliance transitions smoother.

The governance playbook that built yesterday's companies is increasingly strained in today's economy. Organizations that embed governance integration from day one of agent deployment gain competitive advantage through faster innovation cycles, reduced audit friction, and stronger stakeholder trust.

The most successful regulated enterprises treat governance integration not as a compliance checkbox, but as a strategic capability that accelerates deployment while reducing risk. This mindset shift - from "governance as constraint" to "governance as enabler" - fundamentally changes how teams approach autonomous agent design, testing, and monitoring. For B2B operators specifically, this means governance frameworks that don't slow down your ability to deploy working agents in 14 days, but rather ensure those agents operate safely and compliantly from day one.

Privacy Considerations and Data Protection in Autonomous Agent Systems

Autonomous agents by nature process sensitive data at a scale and speed that exceed any human capacity for manual review. In healthcare, an agent might access millions of patient records to identify candidates for a clinical trial. In finance, agents might analyze customer transaction histories to detect fraud or assess creditworthiness. In telecommunications, agents might monitor network activity and customer communications to optimize service and identify security threats. Each scenario introduces privacy risks that traditional data protection approaches struggle to address. Learn more in our post on Security and Compliance Checklist for Deploying Autonomous Workflows.

Data Minimization and Purpose Limitation

The foundational privacy principle of data minimization requires that autonomous agents access only the minimum data necessary to accomplish their specific function. Yet in practice, many agent implementations adopt a "maximum data" approach - feeding agents access to entire databases on the assumption that more data improves decision quality. This creates unnecessary privacy exposure and complicates compliance with regulations like GDPR and CCPA that require explicit purpose limitation.

Effective governance for agent privacy starts with rigorous data scoping. Before deploying an autonomous agent, teams should conduct a privacy impact assessment that documents precisely which data fields the agent requires, why each field is necessary, and what happens if that data is unavailable. This assessment should identify opportunities to use synthetic data, aggregated data, or anonymized data rather than personal information. For example, a healthcare agent designed to optimize patient scheduling might operate on appointment duration and resource availability rather than accessing detailed patient medical histories. A financial agent assessing credit risk might use credit scores and income verification rather than full transaction histories.

Technical controls should enforce data minimization at the architecture level. Implement role-based access controls that limit agent permissions to specific data fields and tables. Use data masking and tokenization to prevent agents from accessing sensitive identifiers. Deploy query filters that restrict agents to data relevant to their specific workflow. These technical controls work in concert with governance policies that define what data agents can access and establish review processes for any exceptions.
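As a rough illustration of field-level scoping and tokenization, the sketch below filters a record down to an allow-list before an agent sees it. The field names, the `ALLOWED_FIELDS` and `TOKENIZED_FIELDS` sets, and the hard-coded key are all hypothetical; a real deployment would load the key from a secrets manager and would likely enforce the same rules in the database layer as well.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields this scheduling agent may see.
ALLOWED_FIELDS = {"appointment_id", "duration_minutes", "resource", "patient_id"}
# Allowed fields that must be tokenized before the agent sees them.
TOKENIZED_FIELDS = {"patient_id"}
# Assumption: placeholder key for illustration only.
TOKEN_KEY = b"example-key-do-not-use-in-production"


def tokenize(value: str) -> str:
    """Replace an identifier with a keyed, non-reversible token."""
    digest = hmac.new(TOKEN_KEY, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def minimize(record: dict) -> dict:
    """Return only allow-listed fields, tokenizing sensitive identifiers."""
    scoped = {}
    for name, value in record.items():
        if name not in ALLOWED_FIELDS:
            continue  # drop anything the agent has no documented need for
        if name in TOKENIZED_FIELDS:
            value = tokenize(str(value))
        scoped[name] = value
    return scoped


raw = {
    "appointment_id": "A-1001",
    "duration_minutes": 30,
    "resource": "MRI-2",
    "patient_id": "P-55512",
    "diagnosis": "confidential",
}
agent_view = minimize(raw)
```

Here `agent_view` keeps the scheduling fields, carries a token in place of the patient identifier, and never contains the diagnosis.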

Consent and Transparency in Agent Decision-Making

When autonomous agents make decisions that affect individuals - approving a loan, recommending a medical treatment, adjusting service parameters - those individuals have a right to understand how the decision was made and to contest it if they believe it was unfair or inaccurate. This transparency requirement creates practical challenges in agent deployments because modern machine learning models often lack interpretability. A neural network might identify patterns in data that improve decision accuracy, but cannot easily explain why it reached a specific conclusion in a specific case.

Privacy-preserving transparency requires governance that operates at multiple levels. At the system level, organizations should maintain detailed logs of all agent decisions, including the input data, the decision made, the confidence level, and any human review or override. These logs serve as the audit trail that regulators expect and that individuals can request to understand how an agent affected them. At the individual level, organizations should provide clear, accessible explanations of agent decisions. For high-stakes decisions, this might mean generating a summary of the key factors the agent considered and how they weighted them relative to other factors.

Consent becomes more complex in agent systems because the specific use cases and decision patterns may not be fully predictable at the time individuals consent to agent involvement. A customer might consent to having their transaction data analyzed for fraud detection, but not anticipate that an autonomous agent would use that data to assess credit risk for a targeted loan offer. Effective governance requires organizations to be transparent about the scope of agent analysis and to provide ongoing consent options that let individuals opt out of specific agent use cases without losing access to core services.
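One way to picture per-use-case consent is a record that tracks each agent use case separately and gates every task on it. This is a minimal sketch; `ConsentRecord`, `run_agent_task`, and the use-case names are hypothetical and stand in for whatever consent store an organization already operates.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    """Per-customer consent, tracked separately for each agent use case."""
    customer_id: str
    granted: set = field(default_factory=set)

    def grant(self, use_case: str) -> None:
        self.granted.add(use_case)

    def revoke(self, use_case: str) -> None:
        self.granted.discard(use_case)

    def allows(self, use_case: str) -> bool:
        return use_case in self.granted


def run_agent_task(consent: ConsentRecord, use_case: str) -> str:
    """Gate an agent task on consent for that specific use case."""
    if not consent.allows(use_case):
        return "skipped: no consent"
    return "executed"


consent = ConsentRecord(customer_id="C-42")
consent.grant("fraud_detection")
```

With this record, a fraud-detection task runs while a credit-offer task is skipped, and revoking one use case leaves the customer's other consents untouched.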

Auditability and Provenance - Building Trustworthy Agent Records

In regulated industries, auditability is not optional - it is fundamental to operating legally and maintaining stakeholder trust. When human employees make decisions, organizations maintain records of who made the decision, when they made it, what information they considered, and what process they followed. Auditors can review these records to verify compliance with policies and regulations. Autonomous agents must provide equivalent auditability, yet the technical and organizational challenges are substantial. Learn more in our post on Building Autonomous AI Agents for Customer Service Automation.

Complete Decision Logging and Audit Trails

The foundation of auditability is comprehensive logging. Every autonomous agent deployment should generate immutable logs that capture the complete decision context. This includes the timestamp of the decision, the agent version and configuration that made the decision, all input data the agent accessed, the decision output, any confidence scores or uncertainty measures, and any human review or override that occurred. These logs should be stored in a format that prevents tampering and supports efficient querying by regulators, auditors, and internal compliance teams.
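The logging fields listed above can be sketched as a hash-chained, append-only log. This is an illustrative assumption rather than a prescribed design: chaining makes edits detectable (tamper-evident) but does not by itself prevent them, so production systems typically pair it with write-once storage. The function names and record layout are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

GENESIS_HASH = "0" * 64


def _entry_hash(body: dict) -> str:
    """Hash a canonical JSON encoding of the entry body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_decision(log: list, agent_version: str, inputs: dict,
                    decision: str, confidence: float,
                    human_override: Optional[str] = None) -> dict:
    """Append a decision record linked to the previous record's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_version": agent_version,
        "inputs": inputs,
        "decision": decision,
        "confidence": confidence,
        "human_override": human_override,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _entry_hash(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    prev = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev or _entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


audit_log = []
append_decision(audit_log, "v1.2.0", {"amount": 120.5}, "approve", 0.93)
append_decision(audit_log, "v1.2.0", {"amount": 9800.0}, "flag", 0.71,
                human_override="analyst approved")
```

Calling `verify_chain(audit_log)` succeeds on the untouched log and fails once any stored field is altered after the fact.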

In practice, comprehensive logging creates significant data volume. A high-frequency trading agent might generate millions of decision logs per day. A healthcare system deploying agents across hundreds of workflows might create terabytes of audit data annually. Effective governance requires organizations to establish log retention policies that balance regulatory requirements with storage and performance constraints. Some jurisdictions require logs to be retained for years; others specify shorter retention windows. Organizations should document the specific retention requirements for each agent deployment and implement automated processes that enforce these policies.
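Automated retention enforcement might look something like the following sketch. The retention periods and deployment names are placeholders, not regulatory guidance; the actual values have to come from the documented requirements for each deployment.

```python
from datetime import date, timedelta

# Hypothetical retention periods in days, one per agent deployment.
RETENTION_DAYS = {"fraud_agent": 7 * 365, "scheduling_agent": 2 * 365}


def is_expired(deployment: str, logged_on: date, today: date) -> bool:
    """True once an entry is older than its deployment's retention period."""
    return today - logged_on > timedelta(days=RETENTION_DAYS[deployment])


def purge(entries: list, today: date) -> list:
    """Keep only entries still inside their retention window."""
    return [
        e for e in entries
        if not is_expired(e["deployment"], e["logged_on"], today)
    ]


entries = [
    {"deployment": "scheduling_agent", "logged_on": date(2021, 1, 1)},
    {"deployment": "fraud_agent", "logged_on": date(2021, 1, 1)},
]
kept = purge(entries, today=date(2024, 6, 1))
```

In this example the scheduling entry has aged out of its two-year window, while the fraud entry is retained under its longer one.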

The challenge extends beyond volume to structure and accessibility. Audit logs must be queryable by regulators who may not be familiar with the organization's technical systems. A compliance officer should be able to ask "show me all agent decisions made on behalf of customer X between date Y and date Z" without requiring data engineering expertise. Effective governance includes building audit dashboards and query tools that make logs accessible to non-technical stakeholders while maintaining security controls that prevent unauthorized access to sensitive decision data.
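The compliance officer's question can be wrapped in a single parameterized query that a dashboard calls on their behalf. The sketch below uses Python's built-in `sqlite3` module with an in-memory database; the `agent_decisions` table and its columns are assumptions made for illustration, and timestamps are stored as ISO 8601 strings so they sort chronologically.

```python
import sqlite3


def decisions_for_customer(conn, customer_id, start, end):
    """Return decisions for one customer in [start, end], oldest first."""
    cursor = conn.execute(
        """
        SELECT decided_at, agent_version, decision
        FROM agent_decisions
        WHERE customer_id = ?
          AND decided_at BETWEEN ? AND ?
        ORDER BY decided_at
        """,
        (customer_id, start, end),
    )
    return cursor.fetchall()


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE agent_decisions ("
    "customer_id TEXT, decided_at TEXT, agent_version TEXT, decision TEXT)"
)
conn.executemany(
    "INSERT INTO agent_decisions VALUES (?, ?, ?, ?)",
    [
        ("C-1", "2024-01-05T10:00:00", "v1", "approve"),
        ("C-1", "2024-02-10T09:30:00", "v1", "deny"),
        ("C-2", "2024-01-07T14:00:00", "v1", "approve"),
        ("C-1", "2024-03-01T08:00:00", "v2", "approve"),
    ],
)
rows = decisions_for_customer(
    conn, "C-1", "2024-01-01T00:00:00", "2024-02-28T23:59:59"
)
```

Using bound parameters rather than string formatting keeps the query tool itself from becoming an injection path into sensitive decision data.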

Provenance and Model Lineage

Auditability extends beyond individual decisions to the agents themselves. When an agent makes a poor decision or violates a regulation, auditors need to understand not just what happened, but why - and that requires understanding the agent's training data, model architecture, and configuration. Provenance tracking documents the complete lineage of an agent from initial conception through deployment and ongoing updates.

Effective provenance governance captures several critical dimensions. First, data provenance documents where the agent's training data originated, how it was collected, what transformations were applied, and what quality controls were performed. This is essential for identifying whether biases in the training data influenced agent decisions. Second, model provenance documents which algorithms were evaluated, what hyperparameters were selected, and what performance metrics drove model selection. This supports audits that verify the agent was built using sound methodology. Third, deployment provenance documents which agent version is running in production, what configuration parameters are active, and when any updates or patches were applied.

In regulated environments, provenance documentation should be maintained in a centralized repository that integrates with governance workflows. When an agent is updated, the new version should be documented alongside the previous version, with clear records of what changed and why. When an agent makes a decision that triggers regulatory scrutiny, auditors should be able to pull the complete provenance record and trace the decision back through the training data and model development process.
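A provenance entry covering the data, model, and deployment dimensions could be modeled as an immutable record, with each update stored as a new version and compared to the previous one. Everything in this sketch, including the `ProvenanceRecord` fields and the example values, is hypothetical.

```python
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class ProvenanceRecord:
    agent_name: str
    version: str
    training_data_source: str      # data provenance
    data_transformations: tuple    # data provenance
    algorithm: str                 # model provenance
    hyperparameters: tuple         # model provenance: (name, value) pairs
    selection_metric: str          # model provenance
    deployed_at: str               # deployment provenance
    change_reason: str             # deployment provenance


def diff_versions(old: ProvenanceRecord, new: ProvenanceRecord) -> dict:
    """Map each changed field to its (old, new) values."""
    old_d, new_d = asdict(old), asdict(new)
    return {k: (old_d[k], new_d[k]) for k in old_d if old_d[k] != new_d[k]}


v1 = ProvenanceRecord(
    agent_name="credit_risk_agent",
    version="1.0.0",
    training_data_source="loans_2019_2023 snapshot",
    data_transformations=("deduplicate", "mask identifiers"),
    algorithm="gradient boosted trees",
    hyperparameters=(("max_depth", 4),),
    selection_metric="AUC on holdout set",
    deployed_at="2024-01-15",
    change_reason="initial release",
)
# Frozen records are never edited; an update produces a new version.
v2 = replace(
    v1,
    version="1.1.0",
    hyperparameters=(("max_depth", 6),),
    deployed_at="2024-06-01",
    change_reason="retuned after drift review",
)
changes = diff_versions(v1, v2)
```

The resulting `changes` mapping gives auditors a compact record of what changed between versions and why.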

Organizations that treat provenance as a first-class governance requirement - not an afterthought - dramatically reduce audit cycle times and strengthen their ability to demonstrate compliance to regulators.

Security and Adversarial Risk Management in Agent Systems

Autonomous agents operate in threat environments where adversaries actively work to manipulate or compromise agent behavior. In financial systems, adversaries might craft transactions designed to fool fraud detection agents. In healthcare, adversaries might manipulate patient data to trigger inappropriate treatment recommendations. In telecommunications, adversaries might craft network traffic patterns designed to evade security detection agents. Effective governance requires threat modeling that anticipates these adversarial scenarios and implements controls to prevent or mitigate them.

Adversarial Attack Vectors and Threat Models

Adversarial attacks on autonomous agents fall into several categories. Data poisoning attacks manipulate the training data used to develop agents, introducing subtle biases that cause agents to make systematically unfair or incorrect decisions. Model evasion attacks craft inputs designed to fool trained models into making wrong decisions. Prompt injection attacks manipulate the instructions given to large language model-based agents, causing them to behave in unintended ways. Model theft attacks attempt to extract or reverse-engineer proprietary agent models. Model inversion attacks attempt to reconstruct sensitive training data from model outputs.

Effective threat modeling starts by identifying which attack vectors pose the greatest risk to your specific agent deployments. A healthcare organization deploying agents to recommend treatments faces high risk from data poisoning and model evasion attacks - adversaries might manipulate training data or craft patient information designed to trigger inappropriate recommendations. A financial institution deploying agents to detect fraud faces high risk from evasion attacks - fraudsters continuously evolve their tactics to evade detection. A telecommunications provider deploying agents to optimize network routing faces high risk from prompt injection and model theft attacks.

Once threat vectors are identified, governance requires implementing specific technical controls. Against data poisoning, implement data validation and quality controls that detect anomalous training data. Against model evasion, implement ensemble methods that combine multiple agents and require agreement before high-stakes decisions. Against prompt injection, implement input sanitization and prompt validation that prevents malicious instructions from reaching agent systems. Against model theft, implement access controls that limit who can interact with agents and monitoring that detects suspicious query patterns.
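The ensemble control against model evasion can be sketched as an agreement gate: a decision is released only when enough independent models agree, and anything else is routed to a person. The `ensemble_decision` function, the `"escalate"` sentinel, and the default unanimity requirement are illustrative assumptions.

```python
from collections import Counter


def ensemble_decision(votes: list, min_agreement: float = 1.0) -> str:
    """Return the majority decision only if enough models agree.

    Otherwise return "escalate" so that a human reviews the case.
    """
    if not votes:
        return "escalate"
    decision, count = Counter(votes).most_common(1)[0]
    if count / len(votes) >= min_agreement:
        return decision
    return "escalate"


unanimous = ensemble_decision(["approve", "approve", "approve"])
split = ensemble_decision(["approve", "approve", "deny"])
relaxed = ensemble_decision(["approve", "approve", "deny"], min_agreement=0.6)
```

Requiring unanimity for high-stakes decisions trades throughput for safety; a lower `min_agreement` may be acceptable for lower-risk workflows.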

Adversarial Testing and Red Teaming

Governance frameworks for regulated agent deployments should mandate adversarial testing before and after deployment. Red team exercises where internal or external security experts attempt to compromise or manipulate agents help identify vulnerabilities that standard testing misses. Adversarial test datasets crafted to include edge cases and attack patterns help verify that agents behave safely under challenging conditions.

Effective adversarial testing requires domain expertise. Security professionals need to understand both attack techniques and the specific domain where agents operate. A red team testing a healthcare agent should include clinicians who understand how medical knowledge can be manipulated, not just security experts. A red team testing a financial agent should include fraud specialists who understand evolving fraud patterns. This interdisciplinary approach helps identify domain-specific attack vectors that generic security testing might miss.

Governance should establish a regular cadence for adversarial testing. Initial red team exercises should occur before deployment to identify vulnerabilities in agent design. Ongoing red team exercises should occur periodically after deployment to identify vulnerabilities introduced by agent updates or changes in the threat landscape. The results of adversarial testing should be documented, tracked, and used to prioritize remediation efforts.

Control Frameworks and Governance Integration Best Practices

Building effective governance for autonomous agents in regulated industries requires establishing control frameworks that span people, processes, and technology. The most effective frameworks adapt industry standards like COBIT and NIST to the specific context of agent deployments, creating accountability for agent behavior while enabling the speed and scale that make agents valuable.

Governance Structure and Accountability

Effective agent governance requires clear accountability structures. Many organizations create an AI Governance Board or similar oversight body responsible for approving new agent deployments, reviewing agent performance, and ensuring compliance with policies. This board should include representation from multiple functions - technology leadership, compliance, risk, business operations, and domain experts. The board's charter should clearly define what types of agent deployments require approval, what evaluation criteria must be satisfied, and what ongoing monitoring is required.

Beyond the governance board, organizations should assign specific roles and responsibilities. An agent owner or sponsor - typically a business leader - is accountable for the agent's performance and compliance. An agent technical lead is responsible for agent design, testing, and deployment. A compliance officer is responsible for ensuring the agent meets regulatory requirements. This role clarity prevents gaps where no one is accountable for critical governance functions.

Governance also requires establishing clear escalation paths. When an agent makes a decision that triggers regulatory concern, or when adversarial testing identifies a vulnerability, there should be clear processes for escalating the issue to decision-makers who can authorize remediation. These escalation paths should be documented, tested, and regularly reviewed to ensure they function effectively under pressure.

Policy and Standard Development

Effective agent governance requires documented policies and standards that guide agent development, deployment, and operation. These policies should address several key areas. Data governance policies should define what data agents can access, how data is protected, and what audit trails must be maintained. Agent development policies should define the processes for building agents, testing requirements before deployment, and approval gates. Agent monitoring policies should define what metrics are tracked, what thresholds trigger alerts, and what actions are taken in response to alerts. Agent decommissioning policies should define how agents are retired and what happens to historical decision data.

Policies should be specific enough to guide decision-making, but flexible enough to adapt to evolving agent technologies and regulatory requirements. Rather than prescribing specific technical approaches, effective policies define outcomes and constraints. For example, rather than requiring "all agents must use explainable machine learning models," a more effective policy might require "all high-stakes agent decisions must be explainable to affected individuals and auditors, using methods appropriate to the decision context."

Policy development should involve stakeholders from across the organization. Compliance teams help ensure policies meet regulatory requirements. Technology teams help ensure policies are technically feasible. Business teams help ensure policies don't create unnecessary friction that slows deployment. This collaborative approach produces policies that balance governance rigor with operational practicality.

Monitoring, Metrics, and Performance Management

Governance requires ongoing monitoring that verifies agents continue to operate safely, fairly, and compliantly after deployment. This monitoring should track multiple dimensions of agent performance. Functional metrics measure whether the agent is achieving its intended business objectives: whether the scheduling agent is reducing wait times, the fraud detection agent is catching fraud, and the treatment recommendation agent is improving patient outcomes. Fairness metrics measure whether the agent is treating different populations equitably: whether the lending agent approves loans at similar rates across demographic groups, and whether the diagnostic agent performs equally well across patient populations. Compliance metrics measure whether the agent is operating within regulatory constraints: whether all decisions are being logged, audit trails are complete, and data access patterns stay within expected bounds.

Effective governance establishes specific thresholds for each metric that trigger investigation or escalation. If the fraud detection agent's accuracy drops below a certain level, that triggers an investigation into what changed. If fairness metrics show disparate impact on a protected group, that triggers a review of the training data and model. If audit logs show unexpected data access patterns, that triggers a security investigation. These thresholds should be established based on regulatory requirements, business impact, and risk tolerance.
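Threshold-driven escalation can be expressed as a small table of rules evaluated against the latest metrics. The metric names, minimum values, and actions below are placeholders chosen for illustration; real thresholds should follow from regulatory requirements, business impact, and risk tolerance as described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    minimum: float
    action: str


# Hypothetical thresholds; the values are examples, not recommendations.
THRESHOLDS = [
    Threshold("fraud_detection_accuracy", 0.90, "investigate what changed"),
    Threshold("approval_rate_ratio", 0.80, "review training data and model"),
    Threshold("in_scope_data_access_rate", 1.00, "open security investigation"),
]


def evaluate(metrics: dict) -> list:
    """Return an action for each metric that is missing or below its minimum."""
    actions = []
    for t in THRESHOLDS:
        value = metrics.get(t.metric)
        if value is None or value < t.minimum:
            actions.append(f"{t.metric}: {t.action}")
    return actions


healthy = evaluate({
    "fraud_detection_accuracy": 0.95,
    "approval_rate_ratio": 0.91,
    "in_scope_data_access_rate": 1.0,
})
degraded = evaluate({
    "fraud_detection_accuracy": 0.85,
    "approval_rate_ratio": 0.91,
    "in_scope_data_access_rate": 1.0,
})
```

Treating a missing metric as a breach is a deliberate choice in this sketch: a monitoring gap is itself something governance teams usually want surfaced.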

Monitoring should be automated wherever possible. Dashboards that track key metrics in real time allow governance teams to identify problems quickly. Alerts that notify teams when metrics cross thresholds enable rapid response. Automated reports that summarize agent performance help governance boards stay informed without requiring manual data gathering.

Organizations that establish comprehensive monitoring frameworks for autonomous agents gain the visibility needed to catch problems early, demonstrate compliance to regulators, and continuously improve agent performance.

Industry-Specific Governance Considerations

While the governance principles above apply broadly, each regulated industry brings specific requirements and challenges that shape how organizations should approach agent governance.

Healthcare Governance and Clinical Safety

Healthcare organizations deploying autonomous agents face unique governance challenges centered on patient safety and clinical effectiveness. Agents that recommend treatments, triage patients, or interpret diagnostic images make decisions that directly affect patient health. Healthcare governance frameworks must ensure these agents meet clinical safety standards equivalent to those applied to human clinicians and medical devices.

FDA oversight adds a regulatory layer that most industries don't face. AI-based diagnostic systems may be classified as medical devices subject to FDA premarket review (clearance or approval, depending on the device's risk class). This can require clinical validation studies, risk management processes, and ongoing post-market surveillance. Healthcare organizations should engage FDA early in agent development to understand classification and review requirements. Even agents not classified as medical devices should follow FDA guidance on AI and algorithmic decision-making in healthcare.

Clinical governance should include involvement of clinician stakeholders in agent development. Clinicians understand the nuances of medical decision-making that pure data science might miss. Clinician input helps ensure agents are trained on clinically relevant data, validated against clinically meaningful outcomes, and deployed in workflows that preserve clinical judgment and human oversight. Clinical advisory boards should review agent performance regularly and recommend adjustments based on clinical experience.

Healthcare governance frameworks should establish clear protocols for human oversight of agent decisions. In high-stakes scenarios like treatment recommendations, agents should be designed as decision support tools that enhance clinician judgment rather than replace it. Governance policies should define when clinician review of agent recommendations is required, what training clinicians need to effectively use agent recommendations, and how to handle cases where clinicians disagree with agent recommendations.

Financial Services Governance and Algorithmic Fairness

Financial institutions deploying autonomous agents face governance challenges centered on fairness, transparency, and market integrity. Agents that make lending decisions, set pricing, or execute trades affect customers, markets, and systemic stability. Financial governance frameworks must ensure these agents operate fairly and don't violate regulations designed to protect consumers and maintain market confidence.

Fairness governance in financial services requires rigorous testing for disparate impact. Lending agents must not systematically deny credit to members of protected groups. Pricing agents must not systematically charge different prices based on protected characteristics. Trading agents must not engage in market manipulation. These requirements go beyond legal compliance to encompass ethical obligations and business sustainability. Unfair agent behavior damages customer relationships, invites regulatory scrutiny, and creates reputational risk.
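A simple starting point for disparate impact testing is to compare approval rates across groups. The sketch below computes per-group rates and the ratio of the lowest to the highest; the sample data and group labels are invented. A review trigger of 0.8 is sometimes borrowed from the four-fifths rule of thumb in US employment-selection guidance, but whether that figure suits a lending context is a question for compliance and legal teams, and this ratio is only one of several fairness measures.

```python
def approval_rates(decisions) -> dict:
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals, approved = {}, {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {g: approved[g] / totals[g] for g in totals}


def impact_ratio(rates: dict) -> float:
    """Lowest group approval rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group is favoured
    return min(rates.values()) / highest


# Invented sample: group A approved 8 of 10, group B approved 5 of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = approval_rates(sample)
ratio = impact_ratio(rates)
needs_review = ratio < 0.8  # assumed trigger, see note above
```

In this sample the ratio is 0.625, so the assumed trigger would send the lending agent for a fairness review.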

Financial governance frameworks should include model risk management processes adapted to agent deployments. These processes should document the agent's design, validate its performance against historical data, assess its resilience to market stress, and monitor its ongoing performance. When agents are updated or market conditions change significantly, governance processes should require re-validation before the agent continues operating.

Transparency governance in financial services must address regulatory requirements around explainability. Customers denied credit, charged higher prices, or affected by algorithmic trading decisions have rights to understand why. Financial institutions should implement governance processes that generate clear, accurate explanations of agent decisions and make these explanations available to customers and regulators on demand.

Telecommunications Governance and Consumer Protection

Telecommunications providers deploying autonomous agents face governance challenges centered on consumer protection, network security, and service quality. Agents that manage customer service interactions, optimize network resources, or detect security threats affect millions of customers and critical infrastructure. Telecommunications governance frameworks must ensure these agents operate safely, transparently, and in compliance with consumer protection regulations.

Consumer protection governance in telecommunications requires careful attention to automated communications. TCPA regulations govern robocalls and automated texts. Agents that initiate customer communications must comply with these rules - obtaining prior consent, respecting do-not-call preferences, providing clear identification of the sender, and offering easy opt-out options. Governance frameworks should include processes that ensure agents comply with these requirements before deployment and monitor compliance continuously.
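The four requirements named above can be turned into a pre-send gate that every outbound agent message passes through. This is a simplified sketch, not a statement of what TCPA compliance requires in full; the `Contact` fields, the check order, and the opt-out wording are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Contact:
    phone: str
    has_prior_consent: bool
    on_do_not_call_list: bool
    opted_out: bool


def may_contact(contact: Contact) -> tuple:
    """Return (allowed, reason) for an automated outbound message."""
    if contact.opted_out:
        return False, "recipient opted out"
    if contact.on_do_not_call_list:
        return False, "number is on do-not-call list"
    if not contact.has_prior_consent:
        return False, "no prior consent on record"
    return True, "ok"


def build_message(sender: str, body: str) -> str:
    """Add sender identification and an opt-out instruction."""
    return f"{sender}: {body} Reply STOP to opt out."


eligible = Contact("+15550100", True, False, False)
blocked = Contact("+15550101", True, True, False)
message = build_message("Example Telecom", "Your bill is ready.")
```

Logging the returned reason alongside each blocked send gives the continuous compliance monitoring described above something concrete to audit.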

Network security governance requires threat-detection agents to operate with high reliability and few false positives, because false positives can disrupt legitimate customer service and network operations. Governance frameworks should establish performance thresholds for security agents and require human review of suspicious activity before the agent takes disruptive actions like blocking customers or throttling traffic.

Service quality governance requires monitoring that agents don't degrade customer experience in pursuit of cost optimization or other objectives. An agent optimizing network resources might reduce costs but degrade service quality for some customers. Governance frameworks should establish service level objectives and require agents to maintain these standards. Trade-offs between cost and quality should be explicit governance decisions, not implicit in agent objectives.

Implementation Roadmap and Governance Integration Strategy

Implementing comprehensive governance for autonomous agents is not a one-time project but an ongoing capability development process. Organizations should approach this as a phased roadmap that builds governance maturity over time while enabling agent deployments to proceed.

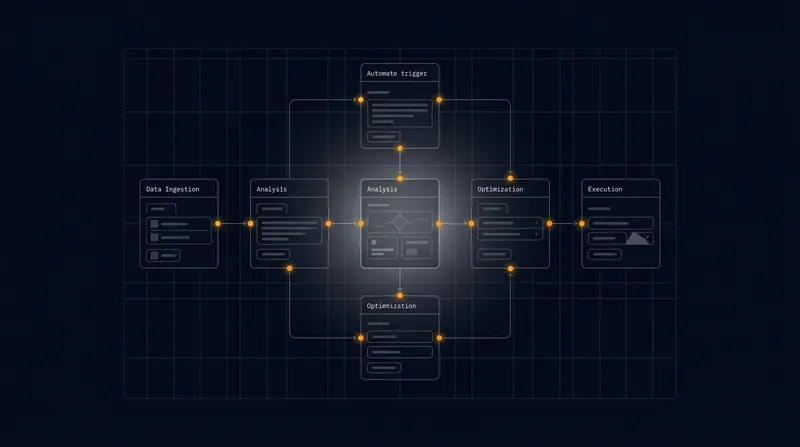

Phase 1 - Assessment and Foundation Building

The first phase focuses on understanding current state and establishing governance foundations. This includes conducting an inventory of existing or planned agent deployments, understanding their data flows, decision processes, and regulatory context. Organizations should assess current governance maturity - what governance structures, policies, and controls exist today, and what gaps need to be addressed. This assessment should involve stakeholders from compliance, risk, technology, and business teams.

Foundation building includes establishing governance structures like an AI Governance Board, defining roles and responsibilities, and developing initial policies. Organizations should also establish baseline controls like decision logging and audit trails. This foundation enables subsequent phases to build on solid ground.

Phase 2 - Pilot Deployments with Enhanced Governance

The second phase involves deploying agents in controlled pilot environments where governance controls can be tested and refined. Pilot deployments should be selected strategically - ideally agents that address important business problems but not mission-critical functions where governance failures would cause severe harm. Pilots should be sized to generate meaningful data about governance effectiveness without creating unmanageable risk.

During pilots, governance teams should actively monitor agent performance, test governance processes, and gather feedback from the business teams operating the agents. Monitoring should track not just agent performance but also the effectiveness of the governance process: whether audit trails are being generated correctly, alerts are triggering appropriately, and escalation processes are working as designed. This hands-on experience helps teams refine governance frameworks before scaling to larger deployments.

Phase 3 - Scaling and Continuous Improvement

The third phase involves scaling agent deployments to broader use cases while continuously improving governance frameworks. As more agents are deployed, governance teams gain experience with diverse agent types and use cases. This experience should inform updates to policies, standards, and controls. Organizations should establish regular governance reviews - quarterly or semi-annual - that assess what's working, what needs adjustment, and how governance should evolve.

Continuous improvement should include learning from industry peers and regulatory guidance. As regulations evolve and best practices emerge, governance frameworks should be updated. Organizations should participate in industry forums and regulatory engagement that help shape governance evolution.

Scaling also requires investing in governance infrastructure - tools and platforms that automate governance processes and make them scalable. As agent deployments grow from dozens to hundreds, manual governance processes become infeasible. Organizations should invest in platforms that automate decision logging, generate audit reports, track model provenance, and monitor agent performance across large populations of agents.

The organizations that will lead in autonomous agent deployment are those that treat governance integration not as a burden but as a competitive advantage - enabling faster, safer, more trustworthy automation at scale.

Practical Recommendations for B2B Teams

B2B teams preparing to deploy autonomous agents in regulated industries should prioritize several concrete actions. First, conduct a regulatory assessment that identifies the specific compliance requirements applicable to your agent deployments. Don't assume generic compliance frameworks apply - regulations differ significantly across industries and jurisdictions. Engage compliance and legal teams early to understand requirements and build them into agent design from the beginning.

Second, establish governance structures and accountability before deploying agents. Define who owns each agent, who reviews agent decisions, who monitors agent performance, and who escalates problems. Clear accountability prevents governance failures where critical functions fall through the cracks between teams.
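A lightweight way to enforce this is an accountability registry that is checked before any agent goes live. The sketch below assumes a plain dictionary keyed by agent name; the role names in `REQUIRED_ROLES` and the example agent names are hypothetical and should be adapted to your own governance model.

```python
# Hypothetical role names mirroring the four questions above.
REQUIRED_ROLES = (
    "owner",
    "decision_reviewer",
    "performance_monitor",
    "escalation_contact",
)


def missing_roles(registry):
    """Return {agent_name: [roles with no named person or team]}."""
    gaps = {}
    for agent, roles in registry.items():
        # A role counts as missing if it is absent, None, or blank.
        missing = [r for r in REQUIRED_ROLES if not (roles.get(r) or "").strip()]
        if missing:
            gaps[agent] = missing
    return gaps


registry = {
    "claims-triage": {
        "owner": "Claims Operations",
        "decision_reviewer": "Compliance",
        "performance_monitor": "ML Platform",
        "escalation_contact": "Risk Office",
    },
    "kyc-screening": {"owner": "Onboarding", "decision_reviewer": ""},
}
print(missing_roles(registry))
```

An agent that appears in the output has an accountability gap and, under this approach, would not be cleared for deployment until every role is filled.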

Third, implement comprehensive decision logging and audit capabilities. This is foundational to all other governance functions - without complete audit trails, you cannot demonstrate compliance, investigate problems, or explain decisions to regulators. Invest in logging infrastructure that captures complete decision context at scale.
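As one illustration of what complete decision context can look like, the sketch below appends each decision to a hash-chained log so that later tampering is detectable. The field names and the in-memory list are assumptions for illustration only; a production system would write to durable, access-controlled storage, and the inputs must be JSON-serializable for this approach to work.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder hash used before the first entry


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_decision(log, agent_id, model_version, inputs, decision):
    """Append one decision record, linked to the previous record's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "model_version": model_version,
        "inputs": inputs,
        "decision": decision,
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS_HASH,
    }
    entry["entry_hash"] = _digest(entry)  # hash computed before this key is added
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Because each record embeds the hash of the one before it, editing or deleting an earlier decision causes `verify_chain` to fail, which is the property auditors typically want from a decision log.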

Fourth, establish fairness and bias testing as a standard part of agent development and deployment. Don't wait for regulators to identify fairness problems - identify them yourself through rigorous testing. This demonstrates good governance and gives you the opportunity to remediate problems before regulators discover them.
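One common starting point for such testing is comparing approval rates across groups. The sketch below computes a disparate impact ratio: the lowest group approval rate divided by the highest. The 0.8 cutoff reflects the widely cited "four-fifths" rule of thumb and is used here only as an assumed example; the right metric and threshold depend on your regulatory context and should be set with compliance and legal teams.

```python
def selection_rates(outcomes):
    """outcomes: iterable of (group, approved) pairs -> {group: approval rate}."""
    totals, approved = {}, {}
    for group, ok in outcomes:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(outcomes):
    """Lowest group approval rate divided by the highest."""
    rates = selection_rates(outcomes)
    if not rates:
        raise ValueError("no outcomes to evaluate")
    highest = max(rates.values())
    # If nobody was approved in any group, the rates are equal by definition.
    return min(rates.values()) / highest if highest > 0 else 1.0


ASSUMED_THRESHOLD = 0.8  # illustrative cutoff, not a legal standard


def passes_fairness_check(outcomes, threshold=ASSUMED_THRESHOLD):
    return disparate_impact_ratio(outcomes) >= threshold
```

A single ratio is a screening signal rather than a full fairness assessment, so a failing result should trigger deeper analysis, not an automatic conclusion.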

Fifth, implement ongoing monitoring that tracks agent performance across multiple dimensions - functional performance, fairness, compliance, and security. Establish thresholds that trigger investigation or escalation when performance degrades. Automate monitoring wherever possible to enable continuous oversight without overwhelming governance teams.
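Threshold-based monitoring of this kind can be expressed in a few lines. In the sketch below, the metric names, the two-tier `warn_below` and `escalate_below` limits, and the specific values are all hypothetical; the design choice worth noting is that a missing metric is treated as an escalation, since a silent monitoring gap is itself a governance failure.

```python
from dataclasses import dataclass


@dataclass
class Threshold:
    metric: str
    warn_below: float      # investigate when the value drops under this
    escalate_below: float  # escalate when the value drops under this


def evaluate(metrics, thresholds):
    """Map each monitored metric to 'ok', 'investigate', or 'escalate'."""
    actions = {}
    for t in thresholds:
        value = metrics.get(t.metric)
        if value is None:
            actions[t.metric] = "escalate"  # missing data is treated as a failure
        elif value < t.escalate_below:
            actions[t.metric] = "escalate"
        elif value < t.warn_below:
            actions[t.metric] = "investigate"
        else:
            actions[t.metric] = "ok"
    return actions


# Illustrative thresholds covering functional, fairness, and compliance dimensions.
THRESHOLDS = [
    Threshold("accuracy", warn_below=0.95, escalate_below=0.90),
    Threshold("fairness_ratio", warn_below=0.85, escalate_below=0.80),
    Threshold("policy_compliance", warn_below=0.99, escalate_below=0.97),
]
```

Running `evaluate` on a schedule and routing only the `investigate` and `escalate` results to people is one way to keep oversight continuous without flooding governance teams.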

Sixth, build governance frameworks that scale. As agent deployments grow, manual governance processes become infeasible. Invest in governance platforms and tools that automate routine governance functions and provide visibility across large populations of agents.

Finally, recognize that governance integration doesn't have to slow down your ability to deploy working agents - done well, it accelerates it. Teams with clear governance frameworks can deploy with confidence, knowing they've addressed regulatory requirements upfront. Teams without governance frameworks risk lengthy remediation after deployment, discovering compliance gaps that should have been addressed during design.

Conclusion and Path Forward

Autonomous agents represent a transformational opportunity for B2B enterprises seeking to accelerate operations, reduce costs, and improve customer experiences. Yet in regulated industries, deploying agents without robust governance creates unacceptable risk - regulatory violations, data breaches, unfair treatment of customers, and reputational damage. The organizations that will lead in autonomous agent adoption are those that recognize governance not as a constraint but as an enabler - building governance frameworks that allow agents to operate safely, transparently, and compliantly at scale.

Implementing effective governance requires commitment across your organization. It requires compliance and risk teams to understand agent technologies and engage in design decisions. It requires technology teams to prioritize auditability and explainability alongside performance. It requires business teams to embrace governance processes that add rigor to agent deployments. It requires leadership to allocate resources to governance infrastructure and to establish clear accountability for agent behavior.

At A.I. PRIME, we specialize in helping founder-led B2B teams navigate exactly these challenges. Our 14-day engagement model incorporates governance integration into agent deployment from day one. We work with your teams to understand your regulatory context, design agents that meet compliance requirements, implement decision logging and audit capabilities, and establish monitoring that keeps agents operating safely. We help you move from governance as an afterthought to governance as a competitive advantage. Whether you're deploying your first autonomous agent or scaling across your enterprise, we can help you build the governance capabilities that enable confident, compliant agent deployment. Let's discuss how we can help your organization unlock the potential of autonomous agents while maintaining the control, transparency, and accountability that regulated industries demand.
