A.I. PRIME - Article

How to Assess Your Legacy Systems for Agentic AI Integration

Learn how to systematically assess your legacy systems for agentic AI integration with proven frameworks and governance best practices.

Your enterprise runs on systems that have served you well for years - perhaps decades. ERP platforms, mainframe databases, custom-built applications, and interconnected workflows keep operations moving. Yet as autonomous AI agents promise to revolutionize how work gets done, a critical question emerges: Can your legacy infrastructure actually support agentic AI?

Many mid-sized and large enterprises face a paradox. The systems that drive revenue and maintain compliance are often the hardest to evolve. Adding agentic AI on top of fragmented, siloed, or poorly documented legacy systems can introduce risk, slow deployment, and undermine the very efficiency gains you're seeking. Without a clear diagnostic framework, you risk expensive false starts, security vulnerabilities, and wasted investment.

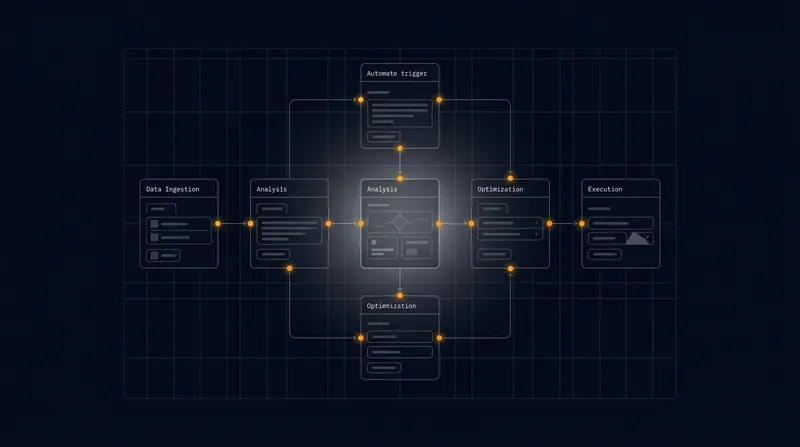

This playbook provides a structured approach to assess your legacy systems for agentic AI integration. You'll learn how to evaluate data quality, interface constraints, integration readiness, and organizational maturity. By the end, you'll have a clear readiness score and a phased adoption roadmap that minimizes business disruption while moving you steadily toward autonomous workflows.

Understanding the Legacy System and Agentic AI Gap

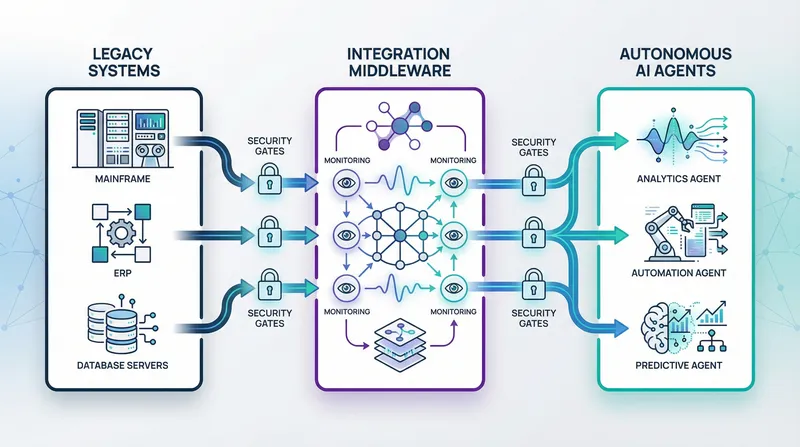

Before diving into assessment frameworks, it's essential to understand why legacy systems and agentic AI often seem incompatible. Legacy systems were designed for stability, predictability, and human-in-the-loop decision-making. They prioritize data consistency, regulatory compliance, and known workflows. Agentic AI, by contrast, thrives on real-time data access, autonomous decision-making, continuous learning, and rapid adaptation to changing conditions.

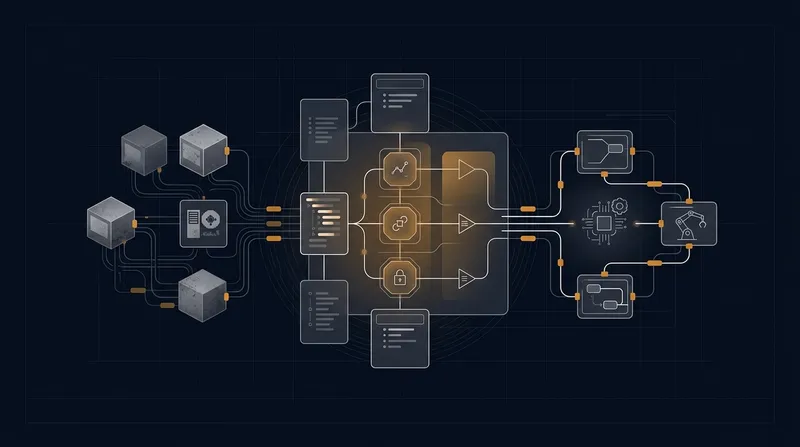

The gap between these paradigms shows up in several ways. Legacy systems typically operate in batch cycles - daily, weekly, or monthly processing runs. Many agentic workflows instead depend on event-driven, near-real-time responses. Legacy data is often stored in isolated silos: customer data in one system, operational metrics in another, financial records in a third. Agents need unified, contextualized data access to make sound decisions. Legacy APIs, where they exist, were often built for human-facing applications, not machine-to-machine orchestration at scale.

The challenge isn't that legacy systems can't work with AI - it's that most weren't designed with autonomous agents in mind. A thoughtful assessment reveals where adaptation is needed and where your existing infrastructure becomes a competitive advantage.

Additionally, legacy systems often lack the observability and instrumentation that agentic systems require. You can't effectively govern an autonomous agent if you can't see what data it's accessing, what decisions it's making, or why. This creates a governance and compliance gap that must be bridged before deploying agents into production.

Understanding this gap is your first step. It reframes the challenge not as a wholesale replacement problem, but as an integration and orchestration challenge - one that can be solved methodically with the right assessment and planning.

The Four Pillars of Legacy System Assessment

A comprehensive readiness assessment for agentic AI integration rests on four foundational pillars: data quality and accessibility, system architecture and interfaces, integration complexity and risk, and organizational readiness and governance maturity. Each pillar has specific dimensions that must be evaluated, scored, and prioritized.

Pillar One: Data Quality and Accessibility

Agentic AI systems live and die by data quality. An autonomous agent making decisions based on incomplete, inaccurate, or stale data will produce poor outcomes - and potentially introduce compliance violations. Your first assessment task is to audit the data your agents will depend on.

Start by mapping data sources. Document every system that holds customer information, operational metrics, transactional records, or decision-relevant context. For each source, evaluate:

- Completeness: What percentage of required fields are populated? Are there systematic gaps - for example, missing customer segments or incomplete transaction histories?

- Accuracy: How often is data validated? What error rates exist in critical fields? Are there known data quality issues that have been documented?

- Timeliness: How current is the data? Is it refreshed in real time, daily, or weekly? Will the refresh cadence support agent decision-making?

- Consistency: If the same entity (a customer, product, or transaction) exists in multiple systems, do the records match? Are there reconciliation processes?

- Accessibility: Can the data be accessed programmatically? Are there APIs, database connections, or file exports? What authentication and authorization controls exist?

For each data source, assign a quality score from 1 to 5. A score of 1 indicates data that is fragmented, inaccurate, and inaccessible - unsuitable for agent decision-making without significant remediation. A score of 5 indicates clean, accessible, well-governed data ready for autonomous use. Most legacy systems fall somewhere in the middle.
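To make the 1 to 5 scale concrete, here is a minimal Python sketch that profiles a sample of records from one source for two of the dimensions above, completeness and timeliness, and maps each ratio onto a score. The field names, the 24-hour freshness window, and the `SCORE_BANDS` thresholds are hypothetical placeholders, not an industry standard; accuracy and consistency usually need validation rules or cross-system checks that a single sample can't provide.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical bands: minimum share of passing checks for each score, highest first.
SCORE_BANDS = [(0.98, 5), (0.90, 4), (0.75, 3), (0.50, 2)]


def completeness(records, required_fields):
    """Share of required field slots that are populated (not None or empty string)."""
    if not records or not required_fields:
        return 0.0
    filled = sum(
        1 for r in records for f in required_fields if r.get(f) not in (None, "")
    )
    return filled / (len(records) * len(required_fields))


def timeliness(records, updated_field, max_age, now):
    """Share of records whose last update falls within max_age of `now`."""
    if not records:
        return 0.0
    fresh = sum(1 for r in records if now - r[updated_field] <= max_age)
    return fresh / len(records)


def to_score(ratio):
    """Map a 0-1 ratio onto the 1-5 quality scale using SCORE_BANDS."""
    for threshold, score in SCORE_BANDS:
        if ratio >= threshold:
            return score
    return 1


# Usage on a tiny illustrative sample pulled from one source.
now = datetime(2024, 1, 2, tzinfo=timezone.utc)
sample = [
    {"customer_id": "C1", "email": "a@example.com", "updated_at": now - timedelta(hours=2)},
    {"customer_id": "C2", "email": "", "updated_at": now - timedelta(days=3)},
]
c = completeness(sample, ["customer_id", "email"])              # 3 of 4 slots filled
t = timeliness(sample, "updated_at", timedelta(hours=24), now)  # 1 of 2 records fresh
print(to_score(c), to_score(t))  # 3 2
```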

Document remediation needs alongside scores. If a critical data source scores 2 or 3, what would it take to improve it to a 4? Is a data cleansing project feasible? Should the agent be designed to work around the data quality gap, or should data quality improvement be a prerequisite?

Pillar Two: System Architecture and Interfaces

The technical architecture of your legacy systems determines how easily agents can integrate with them. A monolithic mainframe application presents different challenges than a microservices-based platform, and understanding your current architecture is essential.

Begin by documenting the technology stack. What platforms, databases, and programming languages make up your legacy environment? Are systems tightly coupled or loosely integrated? Do APIs exist, and if so, are they REST, SOAP, gRPC, or proprietary protocols?

Evaluate the maturity of each interface:

- Native APIs: Well-documented, actively maintained, with clear versioning and deprecation policies. These are ideal for agent integration.

- Legacy APIs: Older interfaces that work but lack modern standards, security features, or comprehensive documentation. These require adapter layers.

- File-Based Integration: Systems that communicate through CSV, XML, or EDI files. These are slow and fragile but common in legacy environments.

- Database-Direct Access: Agents connect directly to database tables. This bypasses application logic and introduces risk but is sometimes necessary.

- Manual Interfaces: No programmatic access exists; integration requires human intermediaries or custom development.

For each critical system your agents must interact with, rate the interface maturity on a 1 to 5 scale. Interfaces rated 1 or 2 will require significant adapter development or redesign before agents can reliably use them. Interfaces rated 4 or 5 are ready for immediate integration.
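One way to keep interface ratings consistent across evaluators is to start from a baseline per interface category and adjust for specific gaps. The sketch below does that; the baseline numbers and the two one-point deductions are assumptions loosely derived from the descriptions above, so replace them with your own rubric.

```python
from enum import Enum


class InterfaceType(Enum):
    NATIVE_API = "native_api"
    LEGACY_API = "legacy_api"
    FILE_BASED = "file_based"
    DATABASE_DIRECT = "database_direct"
    MANUAL = "manual"


# Hypothetical starting scores per category; your rubric may set these differently.
BASELINE_SCORE = {
    InterfaceType.NATIVE_API: 5,
    InterfaceType.LEGACY_API: 3,
    InterfaceType.FILE_BASED: 2,
    InterfaceType.DATABASE_DIRECT: 2,
    InterfaceType.MANUAL: 1,
}


def interface_maturity(kind, documented=True, supports_auth=True):
    """Start from the category baseline, deduct one point each for missing
    documentation and missing authentication, and never go below 1."""
    score = BASELINE_SCORE[kind]
    if not documented:
        score -= 1
    if not supports_auth:
        score -= 1
    return max(1, score)


def needs_adapter(score):
    """Interfaces rated 1 or 2 need significant adapter work before agents use them."""
    return score <= 2


print(interface_maturity(InterfaceType.LEGACY_API, documented=False))  # 2
print(needs_adapter(2))  # True
```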

Also assess scalability. Can the interface handle the increased traffic that agentic AI will generate? Legacy APIs often weren't designed for thousands of agent requests per minute. Performance testing and capacity planning become critical prerequisites.
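Capacity planning can start with simple arithmetic before any load test. This sketch estimates the request rate an agent fleet would generate and compares it with the capacity you measure for the interface. The fleet size, task rates, and the 2x safety factor are illustrative assumptions, not benchmarks.

```python
def required_rpm(agent_count, tasks_per_agent_per_hour, calls_per_task):
    """Estimated peak requests per minute the agent fleet sends to one interface."""
    return agent_count * tasks_per_agent_per_hour * calls_per_task / 60


def headroom(required, sustained_capacity_rpm, safety_factor=2.0):
    """Measured capacity divided by required load after a safety margin.
    Below 1.0 means the interface likely can't absorb the load as-is."""
    return sustained_capacity_rpm / (required * safety_factor)


need = required_rpm(agent_count=50, tasks_per_agent_per_hour=120, calls_per_task=5)
print(need)                                        # 500.0
print(headroom(need, sustained_capacity_rpm=600))  # 0.6
```

A headroom below 1.0, as in this example, suggests adding caching, queuing, or rate limiting in an adapter layer before agents go live.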

Pillar Three: Integration Complexity and Risk

Not all integrations are created equal. Integrating an agent with a read-only reporting system carries far less risk than integrating with systems that control financial transactions or customer data. Your third assessment pillar quantifies integration complexity and identifies where risks concentrate.

For each system your agents will touch, evaluate:

- Scope of Integration: Will agents only read data, or will they also create, update, or delete records? Will they trigger workflows or transactions?

- Business Impact: If an agent makes an error in this system, what are the consequences? Could it cause financial loss, compliance violations, customer harm, or operational disruption?

- Data Sensitivity: Does the system handle personally identifiable information, financial data, health records, or other regulated content? What compliance frameworks apply?

- Interdependencies: Does this system depend on other systems to function correctly? Are there cascading effects if the agent causes an error?

- Audit and Compliance Requirements: What logging, monitoring, and approval workflows must be in place? Can the system provide the audit trail agentic governance requires?

Create a risk matrix with integration complexity on one axis and business impact on the other. Systems in the high-complexity, high-impact quadrant require the most careful planning and should typically be addressed in later phases of your rollout.
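The risk matrix can be encoded so that every system lands in a quadrant by the same rule. In this sketch, both axes use a 1 to 5 scale and anything above the midpoint of 3 counts as high; that cutoff, and the timing labels for the quadrants other than high-complexity/high-impact, are assumptions rather than part of the framework described above.

```python
def risk_quadrant(complexity, impact, midpoint=3):
    """Place a system on the complexity x business-impact matrix (both scored 1-5).
    Scores above `midpoint` count as high."""
    c = "high" if complexity > midpoint else "low"
    i = "high" if impact > midpoint else "low"
    return f"{c}-complexity/{i}-impact"


def suggested_timing(quadrant):
    """Timing hint per quadrant; only the high/high rule comes from the text."""
    if quadrant == "high-complexity/high-impact":
        return "later phase, most careful planning"
    if quadrant == "low-complexity/low-impact":
        return "early candidate"
    return "case by case"


# Hypothetical systems with (complexity, impact) ratings.
systems = {"Reporting DB": (2, 2), "Billing": (5, 5), "CRM": (2, 4)}
for name, (complexity, impact) in systems.items():
    q = risk_quadrant(complexity, impact)
    print(f"{name}: {q} -> {suggested_timing(q)}")
```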

Pillar Four: Organizational Readiness and Governance Maturity

Technical readiness is only half the story. Your organization's readiness to govern, monitor, and support agentic AI is equally important. Assess your organization across several dimensions:

- Governance Framework: Do you have policies and processes for AI system oversight? Are there clear approval workflows for agent actions? Who is accountable for agent decisions?

- Data Governance: Is there a data governance function? Are data owners and stewards identified? Are there controls over who can access what data?

- Monitoring and Observability: Can your operations teams see what your agents are doing in real-time? Are there alerting systems for anomalies or errors?

- Skills and Expertise: Do you have staff who can design, deploy, and maintain agentic AI systems? Will you need external support?

- Change Management: How does your organization handle process changes? Are there formal change management practices? How do employees respond to automation?

- Compliance and Risk Management: Is there a function responsible for compliance oversight? How do you manage regulatory risk?

Organizations with mature governance frameworks and strong data management practices are better positioned to deploy agentic AI safely and effectively. Those lacking these foundations should prioritize governance and capability-building alongside technical integration work.

Building Your Readiness Scoring Model

With the four pillars assessed, you need a structured way to synthesize findings into actionable insights. A readiness scoring model provides this framework, converting qualitative assessments into quantitative scores that guide prioritization and phasing decisions.

Establishing Scoring Dimensions

Within each pillar, establish 3 to 5 specific dimensions and score each on a 1 to 5 scale. For data quality, dimensions might include completeness, accuracy, timeliness, consistency, and accessibility. For architecture, dimensions might include API maturity, scalability, security capabilities, and documentation quality.

Define clear criteria for each score level. A score of 1 means the dimension is a major blocker - work is required before integration can proceed. A score of 5 means the dimension is excellent and ready for immediate use. Scores of 2, 3, and 4 represent varying degrees of readiness, with associated remediation effort and timeline.

Document these definitions in a scoring rubric that your assessment team can use consistently. This ensures that different evaluators reach similar conclusions and that your scoring is defensible to stakeholders.
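A rubric is easier to apply consistently when it lives in a shared, machine-checkable form. The sketch below stores level definitions for one illustrative dimension, rejects scores that don't map to a defined level, and flags dimensions where two evaluators disagree by more than one point, which is useful in the calibration session described in Step One. The level wording and the one-point tolerance are hypothetical.

```python
# Hypothetical rubric excerpt for one dimension; extend it for every dimension you score.
RUBRIC = {
    "completeness": {
        1: "Critical fields largely missing; blocker until remediated",
        2: "Systematic gaps in key fields; major remediation needed",
        3: "Usable with known gaps; moderate remediation",
        4: "Minor gaps; light remediation",
        5: "Required fields consistently populated; ready now",
    },
}


def validate_scores(scores, rubric=RUBRIC):
    """Return problems for scores on unknown dimensions or outside the defined levels."""
    errors = []
    for dim, score in scores.items():
        if dim not in rubric:
            errors.append(f"unknown dimension: {dim}")
        elif score not in rubric[dim]:
            errors.append(f"{dim}: score {score} not in rubric levels")
    return errors


def calibration_gaps(scores_a, scores_b, tolerance=1):
    """Dimensions both evaluators scored where they differ by more than `tolerance`."""
    shared = scores_a.keys() & scores_b.keys()
    return sorted(d for d in shared if abs(scores_a[d] - scores_b[d]) > tolerance)


print(validate_scores({"completeness": 6}))
print(calibration_gaps({"completeness": 2, "accuracy": 5}, {"completeness": 3, "accuracy": 2}))
```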

Weighting and Aggregation

Not all dimensions carry equal weight. A system with poor data quality but excellent APIs presents different challenges than a system with great data but no programmatic access. Establish weighting factors that reflect your organization's priorities and constraints.

For example, you might weight data quality at 40%, architecture and interfaces at 30%, integration risk at 20%, and organizational readiness at 10%. These weights should reflect your specific context - a highly regulated organization might weight governance more heavily, while a team optimizing for speed of integration might weight architecture and interfaces more heavily.

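Aggregate individual dimension scores into pillar scores (for example, by averaging them), then combine pillar scores into an overall readiness score using your weights. Score the integration-risk pillar so that a higher number means lower risk; otherwise it pulls the total in the wrong direction. A system with an overall score of 4 or higher is ready for near-term integration. A score from 3 up to 4 suggests moderate readiness with identified remediation work. A score below 3 indicates significant work is needed before integration should proceed.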
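The aggregation step can be sketched in a few lines. This example averages dimension scores within each pillar, applies the example weights from above, and maps the result onto the readiness bands. It assumes the integration-risk pillar is scored so that 5 means lowest risk; the dimension names are hypothetical.

```python
from statistics import mean

# Example weights from the text; adjust to your context. They must sum to 1.
PILLAR_WEIGHTS = {
    "data_quality": 0.40,
    "architecture": 0.30,
    "integration_risk": 0.20,  # assumed scored so that 5 = lowest risk
    "org_readiness": 0.10,
}


def pillar_scores(dimension_scores):
    """Average the 1-5 dimension scores within each pillar."""
    return {p: mean(list(dims.values())) for p, dims in dimension_scores.items()}


def overall_readiness(pillars, weights=PILLAR_WEIGHTS):
    """Weighted average of pillar scores; weights must sum to 1."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("pillar weights must sum to 1")
    return sum(weight * pillars[p] for p, weight in weights.items())


def readiness_band(score):
    """Bands from the text: 4+ near-term, 3 to under 4 moderate, under 3 significant work."""
    if score >= 4:
        return "near-term integration"
    if score >= 3:
        return "moderate readiness, remediation identified"
    return "significant work needed"


scores = {
    "data_quality": {"completeness": 4, "accuracy": 3, "timeliness": 2},
    "architecture": {"api_maturity": 4, "scalability": 3},
    "integration_risk": {"write_scope": 4, "data_sensitivity": 2},
    "org_readiness": {"governance": 3, "skills": 3},
}
overall = overall_readiness(pillar_scores(scores))
print(round(overall, 2), readiness_band(overall))  # 3.15 moderate readiness, remediation identified
```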

Your scoring model is not a one-time exercise. Revisit it quarterly as you progress through remediation work. As systems improve, their scores should reflect that progress, and your phasing roadmap should adjust accordingly.

Creating a Readiness Dashboard

Synthesize your assessment results into a visual dashboard that shows readiness across all systems. Plot each system on a grid with overall readiness score on one axis and integration priority on the other. This creates a visual prioritization framework that guides your phasing decisions.

Systems in the high-readiness, high-priority quadrant are your first targets - they offer the fastest path to value with minimal risk. Systems in the low-readiness, high-priority quadrant require remediation work before integration. Systems in the low-priority quadrants can be addressed later or may not need integration at all.
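Placing systems on the dashboard grid can follow the same rules every quarter. The sketch below assumes integration priority is also rated 1 to 5 and treats a priority of 3 or more as high; readiness uses the 4.0 near-term threshold from the scoring model. Both cutoffs are assumptions you can tune.

```python
def dashboard_quadrant(readiness, priority, readiness_cut=4.0, priority_cut=3):
    """Quadrant for one system: readiness is the overall 1-5 score,
    priority is an assumed 1-5 integration-priority rating."""
    ready = readiness >= readiness_cut
    important = priority >= priority_cut
    if ready and important:
        return "first target"
    if important:
        return "remediate before integrating"
    return "later or not at all"


def build_dashboard(systems):
    """Group system names by quadrant; `systems` maps name -> (readiness, priority)."""
    grid = {}
    for name, (readiness, priority) in systems.items():
        grid.setdefault(dashboard_quadrant(readiness, priority), []).append(name)
    return grid


print(build_dashboard({"CRM": (4.2, 5), "ERP": (2.8, 5), "Intranet": (4.5, 1)}))
```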

Share this dashboard with stakeholders. It provides clear visibility into your readiness assessment and helps align expectations about timeline and investment.

Diagnostic Playbook: Step-by-Step Assessment Process

Armed with the framework and scoring model, you're ready to conduct your assessment. Follow this step-by-step playbook to ensure comprehensive, consistent evaluation.

Step One: Assemble Your Assessment Team

Bring together representatives from IT, data management, operations, compliance, and the business units that will use agentic AI. Each perspective is essential. IT understands technical constraints; data teams understand data quality challenges; operations understands workflow requirements; compliance ensures regulatory considerations are addressed; business units understand value and urgency.

Assign a lead assessor responsible for coordinating the effort and ensuring consistency across evaluations. Provide team members with the scoring rubric and assessment guidelines in advance, and conduct a calibration session where the team evaluates a sample system together to ensure shared understanding of scoring criteria.

Step Two: Inventory Your Systems

Create a comprehensive inventory of every system that agentic AI might need to integrate with. Include ERP systems, CRM platforms, data warehouses, accounting systems, HR platforms, custom applications, and any other business-critical systems. For each system, document the owner, primary use case, technology platform, and approximate age.

Prioritize systems based on business impact and integration necessity. Which systems are essential to your agentic AI use cases? Which systems support critical business processes? Which systems are most frequently accessed or updated? This prioritization ensures your assessment effort focuses on high-impact systems first.
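If the inventory lives in a spreadsheet or CMDB export, a small script can turn the prioritization questions above into a repeatable ordering. The fields mirror what this step asks you to document; the three 1 to 5 ratings and the rule of counting integration necessity twice are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class SystemRecord:
    name: str
    owner: str
    use_case: str
    platform: str
    age_years: int
    business_impact: int        # hypothetical 1-5 rating
    integration_necessity: int  # 1-5: how essential to the agent use cases
    access_frequency: int       # 1-5: how often it is read or updated


def priority_score(s):
    """Hypothetical rule: necessity counts double, since use cases can't run without it."""
    return 2 * s.integration_necessity + s.business_impact + s.access_frequency


def assessment_order(inventory):
    """Highest priority first; ties broken by name so the order is stable."""
    return sorted(inventory, key=lambda s: (-priority_score(s), s.name))


inventory = [
    SystemRecord("Intranet", "IT", "internal news", "PHP", 12, 1, 1, 2),
    SystemRecord("ERP", "Finance", "orders and invoicing", "mainframe", 20, 5, 5, 5),
    SystemRecord("CRM", "Sales", "pipeline", "SaaS", 6, 4, 5, 4),
]
print([s.name for s in assessment_order(inventory)])  # ['ERP', 'CRM', 'Intranet']
```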

Step Three: Evaluate Data Quality

For each prioritized system, conduct a data quality assessment. Query the system to understand data completeness, accuracy, and timeliness. Identify data quality issues and their root causes. Are there known data entry problems? Are there systems that don't validate input? Are there reconciliation gaps between systems?
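Reconciliation gaps are one of the easier data quality issues to measure directly. This sketch compares records for the same entities held in two systems and reports missing IDs and field mismatches. The system names and fields are illustrative, and it uses exact string comparison; real data usually needs normalization (case, whitespace, formats) first.

```python
def reconcile(source_a, source_b, fields):
    """Compare records for the same entity held in two systems.
    source_a / source_b map entity ID -> dict of field values.
    Returns (IDs only in A, IDs only in B, {ID: mismatched fields})."""
    only_a = sorted(source_a.keys() - source_b.keys())
    only_b = sorted(source_b.keys() - source_a.keys())
    mismatches = {}
    for key in sorted(source_a.keys() & source_b.keys()):
        diff = [f for f in fields if source_a[key].get(f) != source_b[key].get(f)]
        if diff:
            mismatches[key] = diff
    return only_a, only_b, mismatches


def match_rate(source_a, source_b, fields):
    """Share of entities present in both systems whose listed fields agree exactly."""
    shared = source_a.keys() & source_b.keys()
    if not shared:
        return 0.0
    _, _, mismatches = reconcile(source_a, source_b, fields)
    return 1 - len(mismatches) / len(shared)


crm = {
    "C1": {"email": "a@example.com", "country": "DE"},
    "C2": {"email": "b@example.com", "country": "FR"},
    "C3": {"email": "c@example.com", "country": "US"},
}
erp = {
    "C1": {"email": "a@example.com", "country": "DE"},
    "C2": {"email": "b@example.com", "country": "BE"},
    "C4": {"email": "d@example.com", "country": "US"},
}
print(reconcile(crm, erp, ["email", "country"]))  # (['C3'], ['C4'], {'C2': ['country']})
print(match_rate(crm, erp, ["email", "country"]))  # 0.5
```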

Interview data stewards and system owners. They often have institutional knowledge about data quality issues that automated analysis won't reveal. Ask about historical data quality problems, ongoing remediation efforts, and data governance controls.

Score each system's data quality dimension using your rubric. Document specific issues and estimated remediation effort for any systems scoring below 4.

Step Four: Analyze System Architecture and Interfaces

Review technical documentation for each system. How is data stored? What interfaces exist for external access? Are APIs documented? What authentication and authorization mechanisms are in place?

Conduct technical interviews with system administrators and architects. Ask about system capacity, scalability constraints, and planned modernization efforts. Understand the technology roadmap - will the system be replaced, modernized, or maintained as-is?

Test interfaces where possible. Can you successfully authenticate and retrieve data? What is the response time? Are there rate limits? Score architecture and interface maturity using your rubric.
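A lightweight probe can answer the response-time question before formal performance testing. This sketch times repeated calls to any zero-argument function that wraps a single read request and reports median and 95th-percentile latency plus the error count. How you wrap the request (client library, credentials, endpoint) is up to you and is not shown; run it against a test environment or an agreed window rather than production.

```python
import time
from statistics import quantiles


def probe(call, attempts=20):
    """Invoke `call` repeatedly; return (p50_ms, p95_ms, error_count).
    Latency percentiles are None when fewer than two calls succeed."""
    latencies, errors = [], 0
    for _ in range(attempts):
        start = time.perf_counter()
        try:
            call()
        except Exception:
            errors += 1  # failed calls count as errors and are excluded from latency
            continue
        latencies.append((time.perf_counter() - start) * 1000)
    if len(latencies) < 2:
        return None, None, errors
    cuts = quantiles(latencies, n=100, method="inclusive")  # 99 percentile cut points
    return cuts[49], cuts[94], errors


# Stand-in for a real read request; replace with your own wrapper.
p50, p95, errs = probe(lambda: sum(range(1000)), attempts=10)
print(f"p50={p50:.3f} ms, p95={p95:.3f} ms, errors={errs}")
```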

Step Five: Map Integration Requirements and Risks

For each system, define the specific integration requirements. What data will agents read? What actions will they take? What workflows will they trigger? Document these requirements clearly.

Identify risks associated with each integration. What could go wrong? What are the consequences? What controls are needed to prevent errors? Score integration complexity and business impact using your risk matrix.

Step Six: Assess Organizational Readiness

Evaluate your organization's governance, compliance, monitoring, and skills maturity. Interview stakeholders from IT, compliance, operations, and business units. Assess the strength of your data governance function, your monitoring and observability capabilities, your change management practices, and your team's skills and expertise.

Identify gaps and prioritize capability-building. Do you need to hire staff? Invest in monitoring tools? Establish governance processes? These investments should be planned alongside technical integration work.

Step Seven: Synthesize and Score

Compile all assessment data into your scoring model. Calculate dimension scores for each system, aggregate into pillar scores, and calculate overall readiness scores. Create your readiness dashboard and prioritization matrix.

Document your findings in a comprehensive assessment report that includes:

- Executive summary with key findings and recommendations

- Detailed scoring for each system across all dimensions

- Readiness dashboard and prioritization matrix

- Specific remediation recommendations for each system

- Phased integration roadmap with timeline and resource requirements

- Governance and capability-building recommendations

- Risk assessment and mitigation strategies

Share this report with stakeholders and use it to drive decision-making about your agentic AI adoption strategy.

Phased Integration Roadmap and Implementation Strategy

Your assessment findings should directly inform your integration roadmap. Rather than attempting wholesale integration of all systems simultaneously, a phased approach reduces risk and allows your organization to build capabilities progressively.

Phase One: Quick Wins

Begin with systems that score highest on readiness and offer clear business value. These quick wins build momentum, generate ROI quickly, and allow your team to develop expertise with lower risk. Typical Phase One candidates include read-only integrations with well-documented APIs, integrations with high-quality data, and systems with low business impact if errors occur.

Phase One typically spans 2 to 4 months. Success criteria include agents running reliably in production, demonstrated ROI, and team capability development. Use Phase One to validate your assessment framework, refine your processes, and build internal confidence in agentic AI.

Phase Two: Strategic Integrations

With Phase One success established, move to more strategically important systems. These might include core operational systems, high-impact business processes, or systems with moderate remediation needs that you've now addressed. Phase Two typically addresses 30 to 40% of your integration targets.

Phase Two spans 4 to 6 months and requires more governance rigor, more comprehensive testing, and more stakeholder coordination. Establish monitoring and alerting for agent activities. Implement approval workflows for high-impact actions. Conduct extensive testing in pre-production environments before production deployment.

Phase Three: Enterprise-Wide Expansion

Once your organization has demonstrated competency with agentic AI across multiple systems, expand to remaining systems. Phase Three includes complex integrations, highly regulated systems, and systems requiring significant data quality remediation. By Phase Three, your team should have deep expertise and well-established processes.

Phase Three spans 6 to 12 months or longer, depending on the complexity of remaining systems. Focus on continuous optimization, advanced agent capabilities, and expansion into new use cases.
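The phase descriptions above can be turned into a first-pass assignment rule, which the team then reviews by hand. This is a hypothetical mapping: the thresholds reuse the readiness bands from the scoring model, while the read-only, impact, and regulation inputs are assumptions about how you record assessment results.

```python
def assign_phase(readiness, read_only, impact, highly_regulated):
    """First-pass phase for one system.
    readiness: overall score (1-5); impact: business impact if the agent errs (1-5)."""
    if readiness < 3 or highly_regulated:
        return 3  # significant remediation or heavy compliance burden
    if readiness >= 4 and read_only and impact <= 2:
        return 1  # quick win: ready, read-only, low impact if something goes wrong
    return 2      # strategic: write access, higher impact, or moderate remediation


def roadmap(systems):
    """Group system names by phase; `systems` maps name -> assign_phase arguments."""
    phases = {1: [], 2: [], 3: []}
    for name, args in systems.items():
        phases[assign_phase(*args)].append(name)
    return phases


example = {
    "Reporting DB": (4.4, True, 1, False),
    "Order management": (3.5, False, 4, False),
    "Claims system": (3.8, False, 5, True),
    "Legacy HR": (2.6, True, 2, False),
}
print(roadmap(example))
```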

Resource Planning and Timeline

Your assessment should quantify the resource requirements for each phase. How many engineers will you need? What external expertise should you engage? What tools and infrastructure investments are required? What is the timeline for remediation work?

For systems requiring data quality improvements, determine whether remediation should happen before agent integration or in parallel. For systems requiring API development, plan development timelines. For systems requiring governance capability-building, plan training and process establishment.

Create a detailed roadmap that shows system integration timelines, resource allocation, investment requirements, and expected ROI for each phase. This roadmap becomes your guide for execution and your communication tool for stakeholders.

Governance and Risk Mitigation in Legacy Integration

Integrating agentic AI with legacy systems introduces governance challenges that must be proactively addressed. Your assessment should identify these challenges, and your implementation strategy should include comprehensive mitigation.

Data Governance and Access Control

Establish clear policies about what data agents can access and what actions they can take. Implement role-based access control that restricts agents to the minimum data and permissions required for their function. Use attribute-based access control to enforce business rules - for example, agents might be restricted from accessing customer data for certain segments or regions.
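Here is a minimal sketch of the two layers working together: role-based grants decide which resources and actions an agent may use at all (deny by default), and an attribute rule can still block individual records, here by region. The role names, permissions, and region rule are hypothetical; in practice these checks usually live in your identity provider or policy engine rather than in agent code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    name: str
    roles: frozenset


# Hypothetical role grants as (resource, action) pairs; anything not listed is denied.
ROLE_PERMISSIONS = {
    "support_reader": {("tickets", "read"), ("customers", "read")},
    "support_writer": {("tickets", "update")},
}

# Hypothetical attribute rule: regions whose records a given agent must not touch.
BLOCKED_REGIONS = {"support-agent": {"EU"}}


def is_allowed(agent, resource, action, record_region=None):
    # RBAC layer: at least one of the agent's roles must grant this exact pair.
    granted = any(
        (resource, action) in ROLE_PERMISSIONS.get(role, set()) for role in agent.roles
    )
    if not granted:
        return False
    # ABAC layer: a business rule on the record's attributes can still deny access.
    return record_region not in BLOCKED_REGIONS.get(agent.name, set())


agent = AgentIdentity("support-agent", frozenset({"support_reader"}))
print(is_allowed(agent, "customers", "read", record_region="US"))  # True
print(is_allowed(agent, "customers", "read", record_region="EU"))  # False
print(is_allowed(agent, "customers", "delete"))                    # False
```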

Implement data masking and tokenization where appropriate. If agents don't need to see actual customer names or account numbers, mask this information. This reduces risk if agents are compromised or if logs are exposed.
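As a sketch of the idea, the functions below mask an account number for display and replace an identifier with a keyed HMAC-SHA256 value, a form of pseudonymization that stands in for a full tokenization service here. Identical inputs produce identical tokens, so agents can still join records. The key handling and 16-character truncation are assumptions; keep the key outside the agent's reach, since a leaked key makes low-entropy values easy to guess.

```python
import hashlib
import hmac


def mask_account(number, visible=4):
    """Replace all but the last `visible` characters with asterisks."""
    digits = str(number)
    keep = digits[-visible:] if visible > 0 else ""
    return "*" * (len(digits) - len(keep)) + keep


def tokenize(value, key):
    """Deterministic keyed token (HMAC-SHA256, truncated). One-way: if the original
    must be recoverable, keep a token vault outside the agent's access."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


print(mask_account("1234567890"))             # ******7890
print(tokenize("C-1001", b"example-key-only"))  # same input and key -> same token
```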

Audit, Logging, and Monitoring

Every action an agent takes must be logged and auditable. Implement comprehensive logging that captures what data the agent accessed, what decisions it made, what actions it took, and what the outcomes were. Store logs in a centralized, append-only (write-once) store so entries can't be altered retroactively.
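Immutability is ultimately a property of the storage layer, but hash chaining is a common way to make after-the-fact edits detectable. In this sketch each entry records what the agent accessed, decided, did, and the outcome, plus the hash of the previous entry; changing or deleting any earlier entry makes `verify()` fail. The class and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """In-memory, hash-chained audit log. Tampering becomes detectable;
    preventing it still requires write-once storage underneath."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, agent, data_accessed, decision, action, outcome):
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "data_accessed": data_accessed,
            "decision": decision,
            "action": action,
            "outcome": outcome,
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """True only if every entry is unmodified and correctly linked to its predecessor."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("refund-agent", ["orders/1001"], "refund within policy", "issue_refund", "success")
log.append("refund-agent", ["orders/1002"], "amount over threshold", "escalate", "pending")
print(log.verify())  # True
log.entries[0]["outcome"] = "failure"  # simulate a retroactive edit
print(log.verify())  # False
```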

Implement real-time monitoring and alerting. Alert operations teams to unusual agent behavior - for example, agents accessing data they don't normally access, or agents taking actions that exceed normal patterns. Alert compliance teams to potential violations - for example, agents accessing sensitive data they shouldn't access.
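The two alert types above can be expressed as simple rules over one agent's events in a time window. This sketch assumes you already have a per-agent baseline of normally accessed resources and a list of sensitive resources; the window size and action threshold are placeholders, and production systems often use statistical baselines instead of fixed limits.

```python
from collections import Counter


def anomalies(events, baseline_resources, sensitive_resources, max_actions_per_window):
    """Alert messages for one agent's events in one time window.
    events: list of (resource, action) tuples."""
    alerts = []
    # Resources outside the agent's normal baseline; sensitive ones go to compliance.
    for resource in sorted({r for r, _ in events} - baseline_resources):
        team = "compliance" if resource in sensitive_resources else "operations"
        alerts.append(f"{team}: unusual access to {resource}")
    # Action volumes above the allowed count for this window go to operations.
    for action, count in sorted(Counter(a for _, a in events).items()):
        if count > max_actions_per_window:
            alerts.append(f"operations: {action} x{count} exceeds {max_actions_per_window}")
    return alerts


events = [("tickets", "read")] * 3 + [("payroll", "read")] + [("tickets", "update")] * 12
print(anomalies(events, {"tickets"}, {"payroll"}, max_actions_per_window=10))
```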

Establish dashboards that show agent activity in real-time. Operations teams should be able to see what agents are doing, drill down into specific actions, and understand decision logic.

Approval Workflows and Human Oversight

For high-impact actions, implement approval workflows that require human review before the agent can proceed. An agent might autonomously handle routine customer service inquiries but require human approval before issuing refunds above a certain threshold. An agent might autonomously route operational alerts but require human approval before taking corrective actions that affect production systems.
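A minimal routing rule for the refund and production-change examples might look like this. The threshold value and the set of production-affecting actions are hypothetical; the point is that the agent checks the rule before acting and hands off to a reviewer instead of proceeding.

```python
# Hypothetical policy values; agree on them with finance, operations, and compliance.
REFUND_APPROVAL_THRESHOLD = 250.00
ALWAYS_REVIEW = {"restart_service", "change_production_config"}


def route_action(action, amount=0.0):
    """Return 'auto_approved' or 'pending_human_review' before the agent acts."""
    if action in ALWAYS_REVIEW:
        return "pending_human_review"  # corrective actions on production systems
    if action == "issue_refund" and amount > REFUND_APPROVAL_THRESHOLD:
        return "pending_human_review"  # refunds above the threshold
    return "auto_approved"             # routine actions proceed autonomously


print(route_action("issue_refund", 80.0))   # auto_approved
print(route_action("issue_refund", 900.0))  # pending_human_review
print(route_action("restart_service"))      # pending_human_review
```

In a real deployment the pending branch would create a review task with the agent's context attached, so the reviewer sees why the action was proposed.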

Define clear escalation paths. If an agent encounters a situation it can't handle autonomously, it should escalate to a human with appropriate expertise. Ensure that escalations are timely and that humans have the context they need to make good decisions.

Compliance and Regulatory Considerations

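Understand your regulatory environment. If you handle protected health information as a US healthcare provider, health plan, or one of their business associates, you must comply with HIPAA and related healthcare regulations. If you handle financial data, you must comply with the financial services regulations that apply to you, and payment card data brings PCI DSS obligations. If you process personal data of people in the EU, you must comply with GDPR and other privacy regulations; GDPR can apply even if your organization is based outside the EU.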

Assess how agentic AI impacts your compliance obligations. Can agents make decisions that affect regulated processes? Do agents have access to regulated data? What audit trails and controls must be in place? Work with your compliance and legal teams to ensure your agentic AI implementation meets all regulatory requirements.

Document your governance framework clearly. Create policies and procedures that explain how agents are governed, how decisions are made, how data is protected, and how compliance is maintained. Train your teams on these policies and ensure they understand their roles and responsibilities.

Overcoming Common Assessment Challenges

As you conduct your assessment, you'll likely encounter challenges. Understanding these challenges in advance and having strategies to address them will make your assessment more effective.

Challenge One: Incomplete or Unavailable Documentation

Legacy systems often lack comprehensive documentation. No one may remember how data flows through the system, what the database schema is, or what interfaces exist. When documentation is unavailable, rely on interviews with system owners and long-time users. They often have detailed knowledge of system behavior even if it's not formally documented.

Consider this an opportunity to create documentation as a byproduct of your assessment. As you learn how systems work, document your findings. This documentation will be valuable for your integration team and for future maintenance.

Challenge Two: Competing Priorities and Stakeholder Alignment

Different stakeholders have different priorities. Operations wants to minimize disruption. Compliance wants to maximize control. Business units want fast results. IT wants sustainable, maintainable solutions. When priorities conflict, escalate to executive leadership for resolution.

Use data from your assessment to frame discussions. Show stakeholders the readiness scores, the risks, and the resource requirements. Help them understand the tradeoffs between speed, risk, and investment. Often, stakeholder alignment improves when everyone has the same data.

Challenge Three: Scope Creep and Assessment Paralysis

Your assessment can easily become all-consuming. There's always another system to evaluate, another data source to analyze, another risk to consider. Set clear scope boundaries at the outset. Decide which systems are in scope and which are out of scope. Decide which assessment dimensions are essential and which are nice-to-have.

Establish a timeline for your assessment. Allocate a fixed amount of time for each phase of the assessment. When the time is up, synthesize what you've learned and move to implementation. You can always refine your understanding as you execute.

Challenge Four: Resistance to Change and Legacy System Attachment

Legacy systems often have passionate defenders - people who have built their careers around these systems and who fear automation will make them obsolete. Address this resistance head-on. Involve these stakeholders in your assessment. Help them understand that agentic AI is not about eliminating their roles, but about freeing them from routine work so they can focus on higher-value activities.

Communicate clearly about what will change and what won't. Be honest about where automation will reduce headcount and where it will augment human work. Provide retraining and career development opportunities for people whose roles will change.

Measuring Success and Continuous Improvement

Your assessment is not a one-time event. As you implement agentic AI, continuously measure success against your assessment findings and refine your approach.

Define clear success metrics for your agentic AI implementation. These might include process cycle time reduction, cost savings, error rate reduction, customer satisfaction improvement, or employee productivity gains. Track these metrics throughout your phased rollout and compare actual results to your projections.

Establish a regular cadence for reassessing system readiness. As you complete remediation work and implement integrations, system readiness scores should improve. Quarterly readiness reviews keep your roadmap current and help you identify new integration opportunities.

Solicit feedback from operations teams, business users, and compliance stakeholders. Are agents performing as expected? Are there unexpected challenges? What improvements would make agents more effective? Use this feedback to refine your agent designs and your integration approach.

Build a culture of continuous improvement around agentic AI. Celebrate successes and learn from challenges. Share lessons learned across your organization. As your expertise grows, tackle more complex integrations and more ambitious use cases.

Conclusion: From Assessment to Transformation

Assessing your legacy systems for agentic AI integration is the essential first step toward digital transformation. Without this assessment, you risk expensive false starts, security vulnerabilities, and missed opportunities. With a structured assessment framework, clear scoring model, and phased implementation roadmap, you can navigate legacy system integration with confidence and minimize business disruption.

The assessment process itself builds organizational alignment and capability. It forces important conversations about data quality, system architecture, governance, and compliance. It surfaces hidden risks and dependencies. It creates a shared understanding of your current state and your desired future state.

Your assessment findings should drive a realistic, phased roadmap that begins with quick wins, builds organizational capability, and progressively expands agentic AI to more complex systems. This approach balances speed with risk management, allowing you to generate early ROI while building the governance and technical foundations for enterprise-wide transformation.

At A.I. PRIME, we specialize in helping founder-led B2B teams and small operators rapidly assess and integrate agentic AI into their existing workflows. Our Workflow Audit service provides a ranked assessment of your operational processes and system readiness. Our fixed 14-day engagement model delivers concrete, deployable automation without lengthy consulting cycles. Our approach focuses on measurable operational efficiency - reducing support response times, automating sales follow-up, and eliminating repetitive tasks in operations.

Whether you're a small team looking to automate customer support or a growing operation seeking to scale your sales processes, we can help you identify integration opportunities, assess your system readiness, and deploy working AI agents quickly. Our proven framework has helped dozens of founder-led teams achieve measurable operational improvements in just 14 days.

Ready to assess your systems and unlock the potential of agentic AI? Schedule a discovery conversation with our team today. We'll help you understand your current readiness, identify your highest-value automation opportunities, and develop a realistic deployment plan. Your competitive advantage in the AI era starts with a clear-eyed assessment of where you are today and a strategic plan for rapid, measurable improvement.
