Security and Compliance Checklist for Deploying Autonomous Workflows

Autonomous workflows promise significant efficiency gains - but only if they can be deployed with confidence. For decision-makers in mid-size to large enterprises, the challenge isn't whether AI-driven automation delivers value; it's how to validate that autonomous systems meet your security and compliance requirements before they touch production data. The stakes are high: a single misconfigured access control or unaudited transaction can expose sensitive information, violate regulatory mandates, and undermine stakeholder trust. This checklist gives InfoSec and compliance teams a structured framework for assessing autonomous workflow deployments across data handling, access controls, encryption, audit logging, system hardening, and compliance validation. Whether you're deploying sales journey automation, operational process orchestration, or data-driven agent networks, it is meant to help you confirm that your autonomous systems are production-ready and governance-aligned before launch.

Understanding the Autonomous Workflow Security Landscape

Autonomous workflows operate differently from traditional applications, and that difference matters profoundly for security. Unlike static software that executes predefined code paths, autonomous systems make decisions, route data, and trigger actions based on real-time intelligence and adaptive logic. This dynamism creates both opportunity and risk. Your compliance framework must account for systems that evolve their behavior, delegate tasks across multiple endpoints, and interact with data in ways that may not be fully predictable at deployment time. Learn more in our post on Security and Compliance for Agentic AI Automations.

The regulatory environment has shifted in response to this reality. Healthcare organizations face HIPAA Security Rule safeguards covering access control, audit controls, and encryption of electronic protected health information. Financial institutions contend with evolving standards around algorithmic transparency and data lineage. Manufacturing and logistics enterprises must demonstrate that autonomous agents comply with industry-specific data governance mandates. Across all sectors, the expectation is clear: autonomous systems must be as auditable, controllable, and compliant as the legacy workflows they replace.

The core challenge is that autonomous workflows introduce new attack surfaces. Traditional workflows have defined entry points, documented data flows, and static access patterns. Autonomous systems, by contrast, may spawn new workflows dynamically, route data through multiple agent nodes, and make real-time decisions about which systems to interact with. This means your security posture must evolve to address agent-to-agent communication, dynamic permission escalation, and the audit trail requirements of self-healing or adaptive workflows.

The key point is that autonomous systems require not just encryption and access controls, but a rethinking of how you monitor, audit, and validate system behavior. Point-in-time compliance checks are no longer sufficient - your framework must support continuous validation of autonomous decision-making.

This checklist is designed for the realities of modern autonomous deployment. It moves beyond generic security frameworks to address the specific challenges of systems that learn, adapt, and operate with minimal human intervention. By working through each section with your InfoSec and compliance teams, you'll establish a validation process that gives executives confidence in deployment while ensuring that autonomous systems remain under meaningful governance control.

Data Handling and Classification Framework

Before autonomous workflows can be secured, you must establish clarity on what data they will access and how that data should be treated. Data classification is the foundation of every compliance program, yet it's often overlooked in the rush to deploy automation. Autonomous systems amplify the importance of this discipline because they operate across multiple data sources, may combine datasets in novel ways, and can propagate data through systems without human oversight. Learn more in our post on Balancing Autonomy and Compliance: Best Practices for Enterprise AI Governance.

Establishing Data Inventory and Classification

Your first step is to document every data source that autonomous workflows will interact with. This includes operational databases, customer relationship systems, enterprise resource planning platforms, third-party APIs, and any data lakes or warehouses that feed your agents. For each source, classify the data according to sensitivity levels: public, internal, confidential, and restricted. Restricted data typically includes personally identifiable information, payment card data, health information, or any data subject to regulatory protection.

The classification exercise serves multiple purposes. It forces your team to understand data lineage - where information originates, how it transforms as it flows through systems, and where it ultimately resides. It also creates the foundation for access control policies, encryption requirements, and audit logging specifications. When you later define which autonomous agents can access which data, you'll reference these classifications to ensure agents never escalate their permissions beyond what their function requires.

Document your classification scheme in a data governance policy that all stakeholders can reference. Include examples of each classification level specific to your industry. For healthcare organizations, this might distinguish between de-identified patient data and protected health information. For financial services, it might separate public market data from customer account information. Make the policy explicit enough that any team member can classify new data sources consistently.
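As a minimal sketch of how such a scheme might be encoded so that tooling can reference it, the following Python uses the four sensitivity levels named above. The source names in the inventory are hypothetical, and treating unknown sources as restricted is an assumed default rather than something the policy itself requires.

```python
from enum import IntEnum


class Classification(IntEnum):
    """Sensitivity levels from the classification scheme, ordered low to high."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical inventory: data source name -> classification.
DATA_INVENTORY = {
    "marketing.site_content": Classification.PUBLIC,
    "ops.ticket_metrics": Classification.INTERNAL,
    "crm.sales_pipeline": Classification.CONFIDENTIAL,
    "billing.payment_cards": Classification.RESTRICTED,
}


def classify(source: str) -> Classification:
    """Look up a source; unknown sources are treated as RESTRICTED (assumed default)."""
    return DATA_INVENTORY.get(source, Classification.RESTRICTED)


def agent_may_read(clearance: Classification, source: str) -> bool:
    """An agent may read a source only if its clearance covers the source's level."""
    return clearance >= classify(source)
```

Defaulting unknown sources to the highest level means a newly added data source stays locked down until someone classifies it explicitly.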

Data Residency and Geographic Compliance

Autonomous workflows often route data across systems and, in cloud-native deployments, across geographic regions. Regulatory requirements frequently constrain where data can reside or be transferred. The European Union's General Data Protection Regulation restricts transfers of personal data outside the European Economic Area unless an adequacy decision or appropriate safeguards, such as standard contractual clauses, are in place. Healthcare data in the United States is often required to remain within U.S. borders by contract or organizational policy. Financial data may be subject to residency requirements in multiple jurisdictions.

When designing autonomous workflows, establish clear policies on data residency. Configure your workflow orchestration platform to enforce geographic boundaries - ensuring that agents processing sensitive data never route that information to restricted regions. This is particularly important for multi-tenant cloud environments where data from different customers may be processed by shared infrastructure. Your autonomous system must have built-in guardrails that prevent data residency violations, rather than relying on manual oversight.

Document these constraints in your workflow design specifications. If an autonomous agent is designed to perform sales journey automation, for instance, it should not be capable of routing customer contact information to regions where data residency rules would be violated. This requires coordination between your compliance team (which defines the rules) and your automation architects (who encode those rules into agent permissions and workflow logic).
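One way a residency guardrail could be encoded in workflow logic is sketched below. The region names, the rule table, and the `ResidencyViolation` exception are all hypothetical; a real orchestration platform would expose its own policy mechanism.

```python
# Hypothetical residency rules: classification label -> regions where processing is allowed.
ALLOWED_REGIONS = {
    "restricted": {"eu-west"},
    "confidential": {"eu-west", "eu-central"},
    "internal": {"eu-west", "eu-central", "us-east"},
}


class ResidencyViolation(Exception):
    """Raised when a routing step would move data to a disallowed region."""


def check_route(classification: str, target_region: str) -> None:
    """Refuse the route unless the target region is allowed for this classification."""
    allowed = ALLOWED_REGIONS.get(classification)
    if allowed is None:
        # Fail closed: a classification with no rule has no approved regions.
        raise ResidencyViolation(f"no residency rule for {classification!r}")
    if target_region not in allowed:
        raise ResidencyViolation(
            f"{classification} data may not be routed to {target_region}"
        )
```

Calling `check_route` before every cross-system transfer turns the compliance team's rule table into a hard stop instead of a review item.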

Data Retention and Deletion Protocols

Autonomous workflows generate data - logs, decision records, intermediate processing results, and audit trails. Your compliance framework must specify how long each category of data should be retained and what triggers deletion. Many regulations require that personal data be deleted upon request or after a specified period. Autonomous systems that retain data beyond these windows create compliance violations.

Establish explicit data retention policies for each data classification level. Restricted data might be retained for seven years for regulatory audit purposes, then securely deleted. Confidential data might be retained for three years. Internal and public data may have different retention windows. Document these policies clearly and ensure your autonomous workflow platform enforces them automatically through scheduled deletion jobs or event-triggered purging.
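A scheduled deletion job needs a rule for deciding when a record is due. The sketch below uses the seven-year and three-year examples from the paragraph above; the windows for internal and public data are placeholder assumptions, and a year is approximated as 365 days.

```python
from datetime import date, timedelta

# Retention windows in days. The seven- and three-year figures follow the
# examples above; the internal and public values are placeholders (assumptions).
RETENTION_DAYS = {
    "restricted": 7 * 365,
    "confidential": 3 * 365,
    "internal": 365,
    "public": 90,
}


def deletion_due(classification: str, created: date) -> date:
    """Return the date on which a record becomes eligible for secure deletion."""
    return created + timedelta(days=RETENTION_DAYS[classification])


def is_expired(classification: str, created: date, today: date) -> bool:
    """True once the retention window for the record has elapsed."""
    return today >= deletion_due(classification, created)
```

A purge job could call `is_expired` for each record and hand the matches to whatever secure-deletion routine your platform provides.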

Include in your retention policy a mechanism for handling data subject access requests - the requirement in many regulations that individuals can request access to personal data held about them. Your autonomous system should be capable of identifying and retrieving all data associated with a specific individual across all systems the workflow touches, then providing that data to the requestor or deleting it as requested. This requires careful logging and data tagging throughout your autonomous system architecture.

Access Control Architecture and Agent Permissions

Access control for autonomous workflows requires a fundamental shift in how you think about permissions. Traditional access control assigns permissions to human users - a database administrator might have read/write access to certain tables, a customer service representative might have read access to customer records. Autonomous agents, by contrast, operate continuously without human supervision, making decisions about which systems to interact with based on workflow logic and real-time data. Learn more in our post on Unified Command Center: Centralized Control for Distributed Agent Networks.

Principle of Least Privilege for Autonomous Agents

The principle of least privilege states that every user, process, or system should have only the minimum permissions necessary to perform its function. For autonomous agents, this principle becomes critical because agents act continuously and without human review of individual decisions. An agent that has been granted excessive permissions can cause damage at scale - potentially accessing, modifying, or deleting data across entire systems without any human intervention point.

When designing agent permissions, start by defining the specific tasks the agent must perform. A sales journey automation agent might need to read customer contact information, read sales pipeline data, create calendar events, and send email notifications. It does not need to modify customer account settings, access payment information, or delete records. Define these permissions explicitly in your agent configuration, then implement them in your identity and access management system.

Use role-based access control (RBAC) to organize agent permissions logically. Define roles such as "sales-automation-agent," "process-monitoring-agent," and "data-orchestration-agent," each with a specific set of permissions. Assign agents to roles rather than granting permissions directly to individual agents. This makes it easier to audit permissions, update them consistently across multiple agents, and understand the blast radius if an agent's credentials are compromised.
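The role-to-permission indirection described above can be sketched in a few lines. The agent IDs and the permission strings (such as `contacts:read`) are hypothetical; the role names follow the examples in the text.

```python
# Hypothetical role definitions: role name -> set of permitted actions.
ROLE_PERMISSIONS = {
    "sales-automation-agent": {
        "contacts:read", "pipeline:read", "calendar:create", "email:send",
    },
    "process-monitoring-agent": {"metrics:read", "alerts:create"},
}

# Agents are bound to roles, never to permissions directly.
AGENT_ROLES = {
    "agent-017": "sales-automation-agent",
    "agent-042": "process-monitoring-agent",
}


def is_allowed(agent_id: str, action: str) -> bool:
    """Deny by default: unknown agents, roles, or actions are all refused."""
    role = AGENT_ROLES.get(agent_id)
    return action in ROLE_PERMISSIONS.get(role, set())


def agents_with(action: str) -> list[str]:
    """List agents holding an action, useful for estimating blast radius."""
    return sorted(a for a in AGENT_ROLES if is_allowed(a, action))
```

Because permissions live only on roles, changing what every sales agent may do is a one-line edit, and `agents_with` answers the blast-radius question directly.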

Implement attribute-based access control (ABAC) to add contextual constraints. An agent might have permission to read customer records, but only for customers in a specific region, or only during specific hours, or only after passing additional authentication checks. ABAC allows you to express complex permission rules that account for context, reducing the risk that an agent will access data outside its intended scope.
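To illustrate, here is a minimal attribute check combining two of the contextual constraints mentioned above: region match and permitted hours. The `AccessRequest` fields and the default 08:00 to 18:00 window are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class AccessRequest:
    agent_region: str      # region the agent is assigned to
    record_region: str     # region of the customer record
    local_time: time       # time of the request in the agent's region


def abac_permits(req: AccessRequest,
                 start: time = time(8, 0),
                 end: time = time(18, 0)) -> bool:
    """Permit reads only for same-region records inside the allowed window."""
    same_region = req.agent_region == req.record_region
    in_hours = start <= req.local_time < end
    return same_region and in_hours
```

In practice this check would run after the role check, so an agent needs both the right role and the right context before a read goes through.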

Service Accounts and Credential Management

Autonomous agents require credentials to access systems - database connection strings, API keys, cloud service credentials, and similar authentication material. Managing these credentials securely is essential because compromised agent credentials provide attackers with the same permissions the agent holds, potentially across multiple systems.

Never embed credentials directly in agent code or configuration files. Use a secrets management system - a dedicated service that stores credentials securely, controls access to them, and logs all access attempts. Cloud providers offer managed secrets services; enterprises can deploy dedicated secrets management platforms. Your agents retrieve credentials from the secrets manager at runtime, using their own identity to authenticate to the secrets service.
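The runtime-retrieval pattern might look like the sketch below. `SecretsClient` is a hypothetical interface, not the API of any particular product, and `InMemorySecrets` is a stand-in for local testing only. The secret name `crm/api-key` and the `crm_headers` helper are likewise invented for illustration.

```python
from typing import Protocol


class SecretsClient(Protocol):
    """Hypothetical interface; real secrets managers expose their own APIs."""
    def get_secret(self, name: str, requester: str) -> str: ...


class InMemorySecrets:
    """Stand-in for a real secrets service, for local testing only."""

    def __init__(self, secrets: dict[str, str], acl: dict[str, set[str]]):
        self._secrets = secrets
        self._acl = acl                      # secret name -> allowed agent identities
        self.access_log: list[tuple[str, str, bool]] = []

    def get_secret(self, name: str, requester: str) -> str:
        granted = requester in self._acl.get(name, set())
        self.access_log.append((requester, name, granted))  # log every attempt
        if not granted:
            raise PermissionError(f"{requester} may not read {name}")
        return self._secrets[name]


def crm_headers(secrets: SecretsClient, agent_id: str) -> dict[str, str]:
    """Fetch the credential at call time instead of storing it in code or config."""
    api_key = secrets.get_secret("crm/api-key", requester=agent_id)
    return {"Authorization": f"Bearer {api_key}"}
```

The agent code never contains the key itself; it holds only its own identity and the name of the secret it is entitled to request.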

Implement credential rotation policies. Agent credentials should be changed on a regular schedule - monthly, quarterly, or more frequently depending on risk assessment. Automate this rotation process so that credentials are updated without requiring manual intervention or workflow downtime. Your secrets management system should support automatic rotation, updating credentials in the secrets store and notifying agents to refresh their cached credentials.
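A rotation job first has to decide which credentials are due. The following sketch assumes two illustrative risk tiers with 30-day and 90-day periods; both the tiers and the periods are assumptions to be replaced by your own risk assessment.

```python
from datetime import datetime, timedelta

# Assumed rotation periods per risk tier; the tiers themselves are illustrative.
ROTATION_PERIOD = {
    "high": timedelta(days=30),
    "standard": timedelta(days=90),
}


def needs_rotation(issued_at: datetime, risk_tier: str, now: datetime) -> bool:
    """True when the credential is at least as old as its rotation period."""
    return now - issued_at >= ROTATION_PERIOD[risk_tier]


def due_for_rotation(credentials: dict[str, tuple[datetime, str]],
                     now: datetime) -> list[str]:
    """Return the names of credentials a rotation job should replace."""
    return sorted(
        name for name, (issued_at, tier) in credentials.items()
        if needs_rotation(issued_at, tier, now)
    )
```

The returned list can be fed to whatever rotation mechanism your secrets manager offers; the selection logic stays independent of the vendor.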

Audit all credential access. Your secrets management system should log every request for credentials, including which agent requested them, when, and whether the request was granted or denied. These logs become part of your audit trail and help you detect unauthorized access attempts or suspicious patterns.

Multi-Factor Authentication for Agent Systems

Multi-factor authentication (MFA) requires that access be granted only after two or more independent verification factors are presented. For human users, this typically means something you know (a password) and something you have (a phone or hardware token). For autonomous agents, MFA takes a different form but serves the same purpose - adding a second verification step that makes it harder for attackers to gain unauthorized access.

Implement MFA at critical points in your autonomous workflow architecture. When agents authenticate to core systems - particularly those holding sensitive data - require additional verification beyond the agent's primary credential. This might involve a second API key, a time-based token from a hardware security module, or verification through a separate identity service. The specific mechanism depends on your infrastructure, but the principle is consistent: accessing sensitive systems requires multiple independent proofs of authorization.

For workflows that make high-risk decisions or access highly sensitive data, consider requiring human approval as a second factor. An autonomous agent might identify a potential customer issue and propose a solution, but human approval might be required before the agent can execute that solution. This approach combines automated efficiency with human oversight, reducing the risk of autonomous systems causing unintended harm.

Encryption Strategy and Data Protection

Encryption is a cornerstone of data protection. It ensures that data remains confidential even if systems are compromised, and it provides assurance to regulators and customers that your organization takes data security seriously. For autonomous workflows, encryption must be applied consistently to data in transit and data at rest, and, where feasible, to data in use.

Encryption in Transit

Data in transit is data being transmitted across networks - between autonomous agents and databases, between agents and external APIs, between cloud services and on-premises systems. This data is vulnerable to interception if transmitted in plaintext. Implement encryption for all data in transit using TLS (Transport Layer Security) version 1.2 or higher.

Ensure that TLS is configured securely. Use strong cipher suites that support forward secrecy - a property ensuring that even if a server's long-term private key is compromised in the future, previously recorded sessions cannot be decrypted. Disable older cipher suites and protocols that have known vulnerabilities, including SSL 3.0, TLS 1.0, and TLS 1.1. Validate TLS configuration using automated tools that check for weaknesses and misconfigurations.
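For agents written in Python, a client-side configuration along these lines could be built with the standard library `ssl` module. The cipher string is one reasonable choice rather than a mandated list, and `make_client_context` is a name chosen for this example.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Client-side TLS context that refuses anything older than TLS 1.2."""
    ctx = ssl.create_default_context()            # verifies certificates and hostnames
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # reject SSL 3.0, TLS 1.0, TLS 1.1
    # Restrict TLS 1.2 suites to ECDHE key exchange (forward secrecy) with AEAD ciphers.
    # TLS 1.3 suites are configured separately by OpenSSL and already provide forward secrecy.
    ctx.set_ciphers("ECDHE+AESGCM:ECDHE+CHACHA20")
    return ctx
```

The returned context can be passed to standard library clients, for example via the `context` argument of `urllib.request.urlopen`.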

For agent-to-agent communication, implement mutual TLS (mTLS) where both parties authenticate to each other using certificates. This prevents man-in-the-middle attacks where an attacker intercepts communication between agents and impersonates one of them. Each agent should have a unique certificate signed by your internal certificate authority, and agents should verify the certificate of any peer before accepting communication.

Manage TLS certificates carefully. Establish a certificate lifecycle management process that issues certificates with appropriate validity periods, rotates expiring certificates automatically, and revokes compromised certificates immediately. Use a certificate management platform that integrates with your agent infrastructure, automating certificate provisioning and renewal without requiring manual intervention.

Encryption at Rest

Data at rest is data stored in databases, file systems, backups, or any persistent storage. Encrypt all data at rest using strong encryption algorithms - AES-256 is the current standard for sensitive data. Encryption can be implemented at multiple layers: database-level encryption, file-system encryption, or storage-device encryption. Use layered encryption where sensitive data is encrypted at the application layer before being stored in the database, which is itself encrypted at the storage layer.

Manage encryption keys separately from the data they protect. Store encryption keys in a dedicated key management system rather than in the same location as encrypted data. This ensures that even if an attacker gains access to your database, they cannot decrypt the data without also compromising your key management system - a much harder target.

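Implement key rotation policies. Encryption keys should be rotated periodically - annually for most purposes, more frequently for highly sensitive data. Rotation can work in one of two ways: data encrypted with the old key is re-encrypted with the new key, or the old key version is retained for decryption only while all new writes use the new key. With envelope encryption, only the data-encryption keys need to be re-wrapped rather than the data itself. Your key management system should automate this process, rotating keys on schedule and handling any re-encryption transparently to your autonomous workflows.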

For cloud-based autonomous workflows, leverage cloud provider key management services that offer hardware security modules (HSMs) for key storage. HSMs are specialized devices that generate, store, and manage cryptographic keys in a tamper-resistant environment. Keys never leave the HSM in plaintext form, and the HSM enforces strict controls on how keys can be used.

Encryption Key Management and Compliance

Key management is often the weakest link in encryption implementations. Organizations encrypt data carefully but then store encryption keys insecurely, defeating the purpose of encryption. Establish a comprehensive key management policy that covers key generation, storage, rotation, revocation, and destruction.

Define key management procedures for different data classifications. Restricted data might require keys stored in hardware security modules with multi-person control - meaning that multiple administrators must authorize key operations. Confidential data might use cloud-managed keys with automatic rotation. Internal and public data might use simpler key management approaches. Document these procedures clearly so that all teams understand how keys are managed for different data types.

Implement separation of duties in key management. No single person should have access to all encryption keys. Require that sensitive key operations - such as using a key to decrypt highly sensitive data - require authorization from multiple people. This reduces the risk that a compromised administrator can decrypt data without detection.
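A multi-person authorization rule can be reduced to a small quorum check, sketched here. The two-approver default and the rule that requesters cannot approve their own operations are assumptions for the example; your key management policy sets the real values.

```python
def quorum_met(approvers: list[str], authorized: set[str],
               requester: str, required: int = 2) -> bool:
    """True when enough distinct authorized people, excluding the requester, approve."""
    valid = {a for a in approvers if a in authorized and a != requester}
    return len(valid) >= required
```

Collecting approvers into a set means a single administrator approving twice still counts once, which is the property separation of duties depends on.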

Maintain detailed logs of all key management activities. Every time a key is generated, rotated, used, or destroyed, log the event with details about who initiated it, when it occurred, and what data was affected. These logs become critical evidence in compliance audits and security investigations.

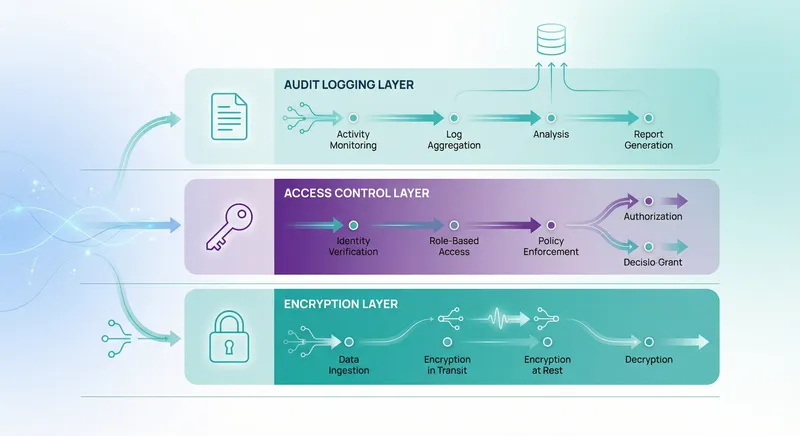

Audit Logging and Real-Time Monitoring

Audit logging is your evidence that autonomous workflows are operating as intended and complying with security policies. Comprehensive audit logs answer critical questions: Who accessed what data? When did they access it? What did they do with it? What decisions did autonomous agents make? Why did they make those decisions? Were any security policies violated? Without detailed audit logs, you cannot prove compliance, investigate security incidents, or detect unauthorized behavior.

Comprehensive Audit Logging Framework

Design your audit logging system to capture events across the entire autonomous workflow lifecycle. Log authentication events - whenever an agent or user authenticates to a system. Log authorization events - whenever an access control decision is made, whether granting or denying access. Log data access events - whenever data is read, modified, or deleted. Log system events - whenever configurations change, keys are rotated, or security policies are updated.

For autonomous workflows specifically, log decision events - whenever an agent makes a significant decision, capture what data it considered, what decision rules applied, and what action it took. Log workflow execution events - whenever a workflow starts, completes, or encounters an error. Log agent-to-agent communication events - whenever agents interact with each other, capture the communication and its outcome.

Ensure that audit logs capture sufficient detail for forensic investigation. Rather than logging only that "data was accessed," log which specific records were accessed, what fields were read, and by which agent. Rather than logging only that "a decision was made," log the decision logic that applied, the input data that influenced the decision, and the output action that resulted.

Implement immutable audit logging. Once an event is logged, it should not be modifiable or deletable. Use append-only log storage where new events are added but old events cannot be changed. Some organizations implement this using blockchain-based logging systems; others use write-once storage devices or cloud services with immutability guarantees. The goal is to ensure that audit logs cannot be tampered with to cover up unauthorized activities.
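Where write-once storage is not available, a hash chain is one lightweight way to make tampering detectable. The sketch below is an illustration of that idea, not a replacement for immutable storage: it detects edits but cannot prevent them, and the `HashChainedLog` class is hypothetical.

```python
import hashlib
import json


class HashChainedLog:
    """Append-only log in which each entry commits to the hash of the previous one."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, event: dict) -> str:
        prev = self._entries[-1]["hash"] if self._entries else self.GENESIS
        payload = json.dumps(event, sort_keys=True)
        digest = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
        self._entries.append({"prev": prev, "payload": payload, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; an edited, reordered, or mid-chain deleted entry fails."""
        prev = self.GENESIS
        for entry in self._entries:
            expected = hashlib.sha256((prev + entry["payload"]).encode("utf-8")).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True
```

Note that truncating entries from the end of the chain is not detected by `verify` alone; publishing the latest hash to a separate system at intervals closes that gap.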

Log Aggregation and Centralization

Autonomous workflows generate logs across multiple systems - agents, databases, APIs, cloud services, and network infrastructure. Centralizing these logs in a single location makes it possible to correlate events, detect patterns, and investigate incidents comprehensively. Implement a log aggregation system that collects logs from all sources and stores them in a central repository.

Use a standard log format across all sources. Common log formats include syslog, JSON, and structured logging formats. Standardization makes it easier to parse, search, and analyze logs. Include consistent fields in every log entry - timestamp, source system, event type, user or agent identity, data affected, and outcome.
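A shared JSON schema with the fields listed above might be produced and checked as follows. The field names (`actor`, `resource`, and so on) are one possible naming, chosen for this example rather than taken from any standard.

```python
import json
from datetime import datetime, timezone
from typing import Optional

REQUIRED_FIELDS = ("timestamp", "source", "event_type", "actor", "resource", "outcome")


def make_log_entry(source: str, event_type: str, actor: str, resource: str,
                   outcome: str, timestamp: Optional[datetime] = None) -> str:
    """Serialize one event as a JSON line with the same fields in every entry."""
    ts = timestamp or datetime.now(timezone.utc)
    entry = {
        "timestamp": ts.isoformat(),
        "source": source,
        "event_type": event_type,
        "actor": actor,
        "resource": resource,
        "outcome": outcome,
    }
    return json.dumps(entry, sort_keys=True)


def missing_fields(line: str) -> list[str]:
    """Return required fields absent from a JSON log line (empty list means valid)."""
    entry = json.loads(line)
    return [f for f in REQUIRED_FIELDS if f not in entry]
```

Running `missing_fields` at the aggregation point lets you reject or quarantine entries from sources that have drifted from the agreed format.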

Implement log retention policies that balance compliance requirements with storage costs. Logs for restricted data might be retained for seven years. Logs for confidential data might be retained for three years. Logs for routine system events might be retained for 90 days. Ensure that logs are retained in tamper-proof storage for the full retention period, then securely deleted when the retention period expires.

Encrypt logs both in transit and at rest. Logs contain sensitive information - they reveal which users accessed which data, which systems failed, and which security events occurred. Protect logs with the same encryption standards you apply to the data they document.

Real-Time Alerting and Anomaly Detection

Comprehensive audit logs are valuable for investigation after an incident occurs, but real-time monitoring allows you to detect and respond to security issues as they happen. Implement real-time alerting that notifies your security team immediately when suspicious activities are detected.

Define alert rules that identify suspicious patterns. Alert when an agent attempts to access data outside its assigned permissions. Alert when an agent accesses an unusually large volume of data compared to historical patterns. Alert when authentication failures exceed a threshold, suggesting a brute-force attack. Alert when a system configuration is modified unexpectedly. Alert when encryption keys are accessed outside normal usage patterns.
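Two of these rules, repeated authentication failures and out-of-scope access attempts, can be expressed over normalized log events as in the sketch below. The event field names and the threshold of five failures are assumptions for the example.

```python
from collections import Counter

AUTH_FAILURE_THRESHOLD = 5  # assumed value; tune to your environment


def evaluate_alerts(events: list[dict]) -> list[str]:
    """Apply two of the rules above to a batch of normalized events."""
    alerts = []
    failures = Counter(
        e["actor"] for e in events
        if e["event_type"] == "authentication" and e["outcome"] == "failure"
    )
    for actor, count in sorted(failures.items()):
        if count > AUTH_FAILURE_THRESHOLD:
            alerts.append(f"possible brute force: {actor} ({count} failed logins)")
    for e in events:
        if e["event_type"] == "authorization" and e["outcome"] == "denied":
            alerts.append(f"out-of-scope access attempt: {e['actor']} -> {e['resource']}")
    return alerts
```

A production rule engine would evaluate events over a sliding time window; this batch version only shows the shape of the rules.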

Use anomaly detection to identify behaviors that deviate from normal patterns even if they don't match predefined rules. Machine learning algorithms can learn the normal behavior of each agent - what data it typically accesses, what operations it typically performs, what times it typically operates - then flag activities that deviate significantly from those patterns. This helps catch sophisticated attacks that might evade rule-based detection.
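As a much simpler stand-in for a learned model, a z-score test against an agent's own history already catches gross deviations such as a sudden jump in data volume. The threshold of three standard deviations is a common convention, used here as an assumption.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag a value more than `threshold` standard deviations from the historical mean."""
    if len(history) < 2:
        return False              # not enough data to estimate spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return observed != mu     # any change from a perfectly constant baseline
    return abs(observed - mu) / sigma > threshold
```

For example, an agent that normally reads around 100 records per hour and suddenly reads 5,000 would be flagged, while ordinary hour-to-hour variation would not.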

Implement escalation procedures that ensure alerts are handled promptly. Assign alert severity levels - critical alerts require immediate investigation, high alerts require investigation within hours, medium alerts require investigation within a day. Ensure that your security team has clear procedures for responding to each alert level, including investigation steps, escalation paths, and decision criteria for taking corrective action.

Test your alerting system regularly. Conduct security drills where you simulate unauthorized access attempts and verify that your alerting system detects them and notifies your team. Test your incident response procedures to ensure that your team can investigate alerts efficiently and take corrective action quickly.

System Hardening and Vulnerability Management

System hardening is the process of reducing your attack surface by disabling unnecessary services, applying security patches, configuring systems securely, and removing unnecessary software. For autonomous workflows, hardening applies to the agents themselves, the platforms they run on, and the systems they interact with.

Agent Infrastructure Hardening

Autonomous agents run on infrastructure - whether that's containers, virtual machines, or serverless functions in a cloud environment. Harden this infrastructure by applying the principle of least privilege. Disable all services and ports that agents don't require. Remove all software and libraries that agents don't use. Configure the operating system and runtime environment with the minimum permissions agents need to function.

Implement container security if your agents run in containers. Use minimal base images that contain only essential operating system components. Scan container images for known vulnerabilities before deploying them. Implement image signing so that agents can verify they're running authorized container images. Use container runtime security tools that monitor container behavior and prevent containers from performing unauthorized actions.

Apply security patches promptly. When vulnerabilities are discovered in operating systems, runtimes, or libraries that your agents depend on, apply patches as soon as they're available. Automate patching where possible, but ensure that patches are tested in non-production environments before being deployed to production agents.

Implement network segmentation. Autonomous agents should run in isolated network segments with restricted connectivity to other systems. An agent should only be able to communicate with systems it needs to interact with - its database, specific APIs, the workflow orchestration platform. Implement network policies and firewalls that enforce these restrictions, preventing agents from being used as pivot points to attack other systems.

Vulnerability Scanning and Penetration Testing

Proactively identify vulnerabilities before attackers do. Implement automated vulnerability scanning that regularly scans your agents, infrastructure, and connected systems for known vulnerabilities. Use vulnerability scanning tools that check for outdated software versions, missing security patches, misconfigurations, and weak credentials.

Conduct regular penetration testing - simulated attacks where security professionals attempt to compromise your autonomous workflow systems to identify vulnerabilities. Penetration testing should include attempts to compromise agents, escalate privileges, access unauthorized data, and disrupt workflow operations. Use the results to prioritize remediation efforts and improve your security posture.

Implement a vulnerability management program that tracks discovered vulnerabilities, prioritizes remediation based on severity and exploitability, and verifies that fixes have been applied successfully. Assign ownership for each vulnerability to ensure that someone is responsible for remediating it. Set target remediation timelines based on severity - critical vulnerabilities might require remediation within 24 hours, high severity within one week, medium severity within one month.
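The remediation timelines above can be tracked with a small deadline calculation. In this sketch "one month" is approximated as 30 days, and the finding fields (`id`, `severity`, `discovered`, `fixed`) are hypothetical.

```python
from datetime import datetime, timedelta

# Target windows from the examples above; "one month" is approximated as 30 days.
REMEDIATION_SLA = {
    "critical": timedelta(hours=24),
    "high": timedelta(weeks=1),
    "medium": timedelta(days=30),
}


def remediation_deadline(severity: str, discovered: datetime) -> datetime:
    """Return the latest acceptable fix time for a finding of this severity."""
    return discovered + REMEDIATION_SLA[severity]


def overdue(findings: list[dict], now: datetime) -> list[str]:
    """Return IDs of open findings past their deadline, most severe first."""
    order = list(REMEDIATION_SLA)  # dict order: critical, high, medium
    late = [
        f for f in findings
        if not f["fixed"] and now > remediation_deadline(f["severity"], f["discovered"])
    ]
    return [f["id"] for f in sorted(late, key=lambda f: order.index(f["severity"]))]
```

The output of `overdue` is a natural input to the ownership and escalation steps of the vulnerability management program.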

Compliance Validation and Audit Readiness

Security controls are only effective if they're documented, consistently applied, and validated regularly. Compliance validation is the process of verifying that your autonomous workflow systems meet regulatory requirements and internal security policies.

Compliance Framework Selection and Mapping

Identify which compliance frameworks apply to your organization and autonomous workflows. Healthcare organizations must comply with HIPAA Security Rule requirements. Organizations handling EU resident data must comply with GDPR. Organizations that store, process, or transmit payment card data, including financial institutions, must comply with PCI DSS. Manufacturing organizations in regulated industries must comply with industry-specific frameworks. Many organizations must comply with multiple frameworks simultaneously.

Map your security controls to compliance requirements. For each requirement in your applicable frameworks, document which security controls address it. For example, the HIPAA Security Rule lists encryption of electronic protected health information, in transit and at rest, as an addressable safeguard that you must either implement or replace with a documented, justified alternative - map this to your TLS implementation and database encryption controls. GDPR requires data subject access and deletion capabilities - map this to your data inventory and retention policies. This mapping ensures that every compliance requirement is addressed by at least one control, and that you can demonstrate compliance to auditors.

Maintain a compliance matrix that documents your compliance status for each requirement. Include the control that addresses each requirement, the evidence that the control is implemented, the frequency of control validation, and the date of most recent validation. This matrix becomes your primary compliance documentation artifact and significantly speeds up audit processes.
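A compliance matrix with the four elements listed above can be kept as structured data and checked automatically. The sketch below is one possible shape; the `MatrixRow` fields and the three gap categories are assumptions for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class MatrixRow:
    requirement: str                 # e.g. a framework clause identifier
    control: Optional[str]           # control that addresses it, if any
    evidence: Optional[str]          # where the proof of implementation lives
    validation_interval_days: int    # how often the control must be re-validated
    last_validated: Optional[date]   # None if never validated


def gaps(matrix: list[MatrixRow], today: date) -> dict[str, list[str]]:
    """Group requirements by the kind of gap an auditor would flag."""
    report = {"no_control": [], "no_evidence": [], "stale_validation": []}
    for row in matrix:
        if row.control is None:
            report["no_control"].append(row.requirement)
            continue
        if row.evidence is None:
            report["no_evidence"].append(row.requirement)
        due = (row.last_validated or date.min) + timedelta(days=row.validation_interval_days)
        if today > due:
            report["stale_validation"].append(row.requirement)
    return report
```

Running `gaps` on a schedule surfaces missing controls and overdue validations well before an auditor asks for them.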

Internal Audit Procedures

Conduct regular internal audits of your autonomous workflow security controls. Internal audits verify that controls are implemented as designed, are operating effectively, and are achieving their intended security objectives. Conduct internal audits at least annually, and more frequently for high-risk systems or after significant changes.

Develop audit procedures for each security control. For access control, the audit procedure might involve selecting a sample of agents, verifying that their permissions match documented role assignments, and confirming that permissions are appropriate for their functions. For encryption, the audit procedure might involve verifying that encryption is enabled for all data classifications that require it, and spot-checking that encryption keys are managed according to policy. For audit logging, the audit procedure might involve verifying that logs are being generated for all required events and that logs are being retained according to policy.
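The access control audit step, comparing what agents actually hold against what is documented, lends itself to automation. The following sketch assumes both sides can be exported as a mapping from agent ID to a set of permission strings; the function name and report shape are hypothetical.

```python
def permission_drift(documented: dict[str, set[str]],
                     actual: dict[str, set[str]]) -> dict[str, dict[str, set[str]]]:
    """Report, per agent, permissions granted but undocumented and vice versa."""
    report = {}
    for agent in sorted(set(documented) | set(actual)):
        expected = documented.get(agent, set())
        granted = actual.get(agent, set())
        if expected != granted:
            report[agent] = {
                "excess": granted - expected,    # held but not documented
                "missing": expected - granted,   # documented but not held
            }
    return report
```

An empty report means the sampled agents match their documented role assignments; any `excess` entry is a least-privilege finding worth recording in the audit report.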

Document audit findings in a formal audit report that identifies control gaps, recommends remediation, and assigns responsibility for addressing findings. Prioritize findings based on risk - control failures that could lead to significant data breaches should be remediated immediately, while minor gaps in documentation might be addressed within a longer timeframe.

Implement a tracking system for audit findings and remediation activities. Ensure that findings are remediated, that remediation is verified through follow-up testing, and that evidence of remediation is maintained for auditors. This demonstrates that your organization takes audit findings seriously and is committed to continuous improvement.
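A finding tracker does not need to be elaborate. The following sketch assumes a three-stage lifecycle (open, remediated, verified) and a four-level severity scale; both are illustrative choices rather than a standard.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    OPEN = "open"
    REMEDIATED = "remediated"  # fix applied, not yet retested
    VERIFIED = "verified"      # fix confirmed by follow-up testing


# Lower number means higher priority; the scale itself is an assumption.
SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Finding:
    title: str
    severity: str
    owner: str
    status: Status = Status.OPEN
    evidence: str = ""  # reference to proof of remediation


def outstanding(findings):
    """Findings that still need work, most severe first."""
    pending = [f for f in findings if f.status is not Status.VERIFIED]
    return sorted(pending, key=lambda f: SEVERITY_ORDER[f.severity])


findings = [
    Finding("Policy document out of date", "low", "compliance"),
    Finding("Agent credentials not rotated", "critical", "security"),
    Finding("Log retention too short", "medium", "operations",
            status=Status.VERIFIED, evidence="retest-report"),
]

for f in outstanding(findings):
    print(f"{f.severity}: {f.title} (owner: {f.owner}, status: {f.status.value})")
```

Treating "remediated" and "verified" as separate states keeps the follow-up testing step visible instead of letting a finding close as soon as a fix is claimed.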

External Audit and Certification Preparation

Many organizations must undergo external audits by third-party auditors or achieve formal certifications. Healthcare organizations might undergo HIPAA compliance audits. Organizations handling payment card data must validate their compliance with PCI DSS. Service providers are often asked by their customers for a SOC 2 report. Prepare for external audits by ensuring that your internal controls are robust and that your documentation is complete.

Before an external audit, conduct a pre-audit self-assessment. Have your internal audit team conduct an audit using the same procedures that external auditors will use, then remediate any findings before the external audit. This significantly improves your audit results and demonstrates to external auditors that you maintain effective internal controls.

Maintain complete documentation of your security controls and compliance efforts. External auditors will request evidence of control implementation - documentation of policies, evidence of control testing, logs demonstrating control operation, and evidence of remediation for identified issues. Organize this documentation in a way that makes it easy for auditors to locate and review.

Prepare your team for auditor interviews. Auditors will interview key personnel to understand how controls are implemented and operated. Ensure that team members understand the control procedures, can explain how they work, and can provide evidence of their operation. Conduct mock interviews to prepare team members for auditor questions.

Governance Integration and Policy Enforcement

Security and compliance controls are only effective if they're integrated into your autonomous workflow governance framework and enforced consistently. Governance integration ensures that security policies are not just documented but actively enforced by your workflow systems.

Policy-Driven Workflow Design

Design your autonomous workflows to enforce security and compliance policies automatically. Rather than relying on post-deployment validation, build policy enforcement into workflow logic. If a compliance policy requires that certain data can only be accessed during business hours, configure your workflow orchestration platform to prevent access to that data outside business hours. If a policy requires that high-risk decisions be approved by a human, build approval gates into your workflow logic.
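The two examples in this paragraph, a business-hours restriction and a human approval gate, might look roughly like this when built into a workflow step. The function names, the 09:00 to 17:00 weekday window, and the `risk` labels are hypothetical; a real orchestration platform will have its own hooks for these checks.

```python
from datetime import datetime, time

# Assumed business hours; adjust to your own policy and time zone handling.
BUSINESS_START = time(9, 0)
BUSINESS_END = time(17, 0)


class PolicyViolation(Exception):
    """Raised when a workflow step would break a policy."""


def check_business_hours(now: datetime) -> None:
    is_weekend = now.weekday() >= 5  # Saturday is 5, Sunday is 6
    in_hours = BUSINESS_START <= now.time() < BUSINESS_END
    if is_weekend or not in_hours:
        raise PolicyViolation("restricted data is only accessible during business hours")


def run_step(action, risk, now, approved_by=None):
    """Run a workflow action only if the policy checks pass."""
    check_business_hours(now)
    if risk == "high" and approved_by is None:
        raise PolicyViolation("high-risk action requires human approval")
    return action()


# Usage: a high-risk step without approval is blocked before it runs.
try:
    run_step(lambda: "refund issued", "high", datetime(2024, 4, 15, 10, 0))
except PolicyViolation as exc:
    print(f"Blocked: {exc}")
```

Because the checks run before the action is invoked, a violation stops the step rather than being discovered afterwards in a log review.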

Use policy-as-code approaches where security and compliance policies are expressed in machine-readable formats that your workflow platform can enforce automatically. Policy-as-code makes policies auditable, testable, and versionable. You can review policy changes before they're deployed, test policies in non-production environments, and maintain a complete history of policy evolution.

Implement guardrails that prevent agents from violating policies even if they encounter unexpected situations. An agent might be designed to access customer data, but guardrails should prevent it from accessing data outside its assigned scope even if it receives a request to do so. Guardrails act as a safety net that catches policy violations and prevents them from causing harm.
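To make the policy-as-code and guardrail ideas concrete, here is a minimal sketch in which the policy is a JSON document and the guardrail is a default-deny lookup. The policy schema, agent name, and resource names are invented for illustration; dedicated policy engines offer far richer languages than this.

```python
import json

# A hypothetical policy document. In practice this would live in version
# control so that changes can be reviewed and tested before deployment.
POLICY_JSON = """
{
  "version": "1",
  "agents": {
    "support-agent": {
      "allowed_actions": ["read"],
      "allowed_resources": ["tickets", "customer_profile"]
    }
  }
}
"""


def load_policy(text):
    return json.loads(text)


def is_allowed(policy, agent, action, resource):
    """Guardrail check: deny unless the policy explicitly allows the request."""
    rules = policy["agents"].get(agent)
    if rules is None:
        return False  # unknown agents are denied by default
    return action in rules["allowed_actions"] and resource in rules["allowed_resources"]


policy = load_policy(POLICY_JSON)
print(is_allowed(policy, "support-agent", "read", "tickets"))
print(is_allowed(policy, "support-agent", "read", "payment_cards"))
```

The default-deny rule is the important design choice here: a request the policy author never anticipated is refused rather than allowed.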

Continuous Compliance Monitoring

Rather than validating compliance only during annual audits, implement continuous compliance monitoring that checks compliance as workflows operate. Continuous compliance monitoring uses automated tools to verify that systems are operating in line with policies, and it alerts you as soon as a violation is detected.

Implement configuration management that tracks all changes to your autonomous workflow systems. When an agent's permissions change, when encryption settings are modified, or when access control policies are updated, log these changes and validate that they comply with your change management procedures. Prevent unauthorized changes by requiring approvals before changes are deployed.
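One simple way to detect unapproved changes is to fingerprint each approved configuration and compare the live configuration against that set. The sketch below assumes configurations can be represented as JSON-serializable dictionaries; the field names are hypothetical.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    """Stable SHA-256 fingerprint of a configuration dictionary."""
    # Sorting keys makes the fingerprint independent of key order.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def is_unapproved(current_config: dict, approved_fingerprints: set) -> bool:
    """True when the live configuration matches no approved version."""
    return fingerprint(current_config) not in approved_fingerprints


# The baseline is recorded when a change request is approved.
baseline = {"agent": "invoice-agent", "permissions": ["read:invoices"], "encryption": True}
approved = {fingerprint(baseline)}

live = {"agent": "invoice-agent", "permissions": ["read:invoices", "write:payments"], "encryption": True}
if is_unapproved(live, approved):
    print("Configuration drift detected: change has no matching approval")
```

A check like this only detects drift; preventing it still depends on the approval workflow in your deployment pipeline.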

Use automated compliance validation tools that periodically verify that your systems are compliant with policies. These tools might check that all databases have encryption enabled, that all agents have been assigned appropriate permissions, that all audit logging is functioning correctly, or that all security patches have been applied. Schedule these validations to run regularly - daily for high-risk checks, weekly for routine checks - and alert your team to any compliance violations.
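A scheduled validation run could be organized as a small registry of checks, each tagged with a frequency. In this sketch the `inventory` structure and the two checks are assumptions; a real implementation would query your cloud provider, database, or workflow platform for the same facts.

```python
def check_database_encryption(inventory):
    return [f"database {db['name']} is not encrypted"
            for db in inventory["databases"] if not db["encrypted"]]


def check_audit_logging(inventory):
    return [f"agent {agent['name']} has audit logging disabled"
            for agent in inventory["agents"] if not agent["audit_logging"]]


# Each check is registered with the frequency at which it should run.
CHECKS = [
    ("daily", check_database_encryption),
    ("weekly", check_audit_logging),
]


def run_checks(inventory, frequency):
    """Run every check registered for the given frequency and collect violations."""
    violations = []
    for check_frequency, check in CHECKS:
        if check_frequency == frequency:
            violations.extend(check(inventory))
    return violations


# Hypothetical inventory snapshot.
inventory = {
    "databases": [{"name": "orders", "encrypted": True},
                  {"name": "scratch", "encrypted": False}],
    "agents": [{"name": "crm-agent", "audit_logging": True}],
}

for violation in run_checks(inventory, "daily"):
    print(f"ALERT: {violation}")
```

The scheduler itself (cron, a workflow trigger, or a CI job) is left out; the point is that each check returns a list of violations that can be routed to your alerting channel.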

Incident Response and Breach Notification Procedures

Despite your best efforts, security incidents may occur. Autonomous workflows can amplify the impact of incidents because they operate at scale and often without a human reviewing each action. A compromised agent might perform unauthorized actions across thousands of records before being detected. Prepare for this possibility by establishing incident response and breach notification procedures.

Incident Response Plan for Autonomous Systems

Develop an incident response plan that specifically addresses autonomous workflow systems. The plan should define roles and responsibilities - who leads the incident response, who investigates, who communicates with affected parties. It should define escalation procedures - when to involve executive leadership, when to involve law enforcement, when to involve regulators. It should define communication procedures - how to communicate with affected customers, how to communicate internally, how to communicate with regulators and the public.

Include in your incident response plan procedures specific to autonomous systems. If an agent is compromised, how do you isolate it without disrupting other workflows? How do you determine what unauthorized actions the agent performed? How do you restore systems to a known-good state? How do you verify that the compromise has been fully remediated before resuming normal operations?
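Answering the question of what a compromised agent did usually starts with the audit log. The sketch below assumes log entries are dictionaries with `agent_id`, `timestamp`, `action`, and `record_id` fields; those names are illustrative and will differ from your logging schema.

```python
from datetime import datetime


def actions_since(log_entries, agent_id, compromised_at):
    """All actions by one agent at or after the suspected compromise time, oldest first."""
    matching = [e for e in log_entries
                if e["agent_id"] == agent_id and e["timestamp"] >= compromised_at]
    return sorted(matching, key=lambda e: e["timestamp"])


def affected_records(entries):
    """Distinct record identifiers touched by the given entries."""
    return sorted({e["record_id"] for e in entries})


# Hypothetical audit log extract.
log_entries = [
    {"agent_id": "crm-agent", "timestamp": datetime(2024, 4, 15, 8, 0), "action": "read", "record_id": "c-100"},
    {"agent_id": "crm-agent", "timestamp": datetime(2024, 4, 15, 12, 30), "action": "export", "record_id": "c-205"},
    {"agent_id": "crm-agent", "timestamp": datetime(2024, 4, 15, 12, 5), "action": "read", "record_id": "c-101"},
    {"agent_id": "billing-agent", "timestamp": datetime(2024, 4, 15, 12, 10), "action": "read", "record_id": "c-101"},
]

suspect = actions_since(log_entries, "crm-agent", datetime(2024, 4, 15, 12, 0))
print(affected_records(suspect))
```

This kind of reconstruction is only as trustworthy as the logs, which is why immutable, centralized audit logging matters before an incident occurs.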

Establish incident response team composition and conduct regular training. Your incident response team should include representatives from security, operations, legal, and communications. Conduct tabletop exercises where you simulate security incidents and walk through your incident response procedures. Use these exercises to identify gaps in your procedures and improve your team's ability to respond effectively.

Breach Notification and Regulatory Reporting

If a security incident results in unauthorized access to sensitive data, you may be required to notify affected individuals and regulatory authorities. Breach notification requirements vary by jurisdiction and data type - HIPAA requires notification of breaches of unsecured protected health information, GDPR requires notification of personal data breaches affecting individuals in the EU, and U.S. state breach notification laws require notification of breaches affecting state residents.

Understand your breach notification obligations. Determine which breaches trigger notification requirements, what information must be included in notifications, how quickly notifications must be sent, and which regulatory authorities must be notified. Document these requirements in your incident response plan so that your team understands their obligations when an incident occurs.
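Once the obligations are documented, the deadlines can be computed automatically when an incident is logged. The sketch below uses two widely cited outer limits as examples: 72 hours to notify the supervisory authority under GDPR, and 60 calendar days to notify affected individuals under the HIPAA Breach Notification Rule. Treat these values, and the obligation names, as assumptions to confirm with legal counsel, since both rules also require acting without undue delay and other regimes may apply to you.

```python
from datetime import datetime, timedelta

# Outer time limits measured from discovery; confirm each with legal counsel.
NOTIFICATION_WINDOWS = {
    "gdpr_supervisory_authority": timedelta(hours=72),
    "hipaa_affected_individuals": timedelta(days=60),
}


def notification_deadlines(discovered_at, obligations):
    """Map each applicable obligation to its latest permissible notification time."""
    return {name: discovered_at + NOTIFICATION_WINDOWS[name] for name in obligations}


discovered = datetime(2024, 4, 15, 9, 0)
deadlines = notification_deadlines(
    discovered, ["gdpr_supervisory_authority", "hipaa_affected_individuals"]
)
for name, deadline in deadlines.items():
    print(f"{name}: notify by {deadline.isoformat()}")
```

These are latest-possible dates, not targets; the incident response plan should aim to notify well before them.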

Prepare breach notification templates and procedures. When a breach occurs, you must act quickly to notify affected parties. Pre-prepared templates and procedures allow you to respond rapidly without sacrificing quality. Include in your templates the specific information required by regulations - a description of the breach, the types of data affected, steps affected individuals should take to protect themselves, and a point of contact for further questions.

Maintain breach notification documentation. Document every breach notification sent, including who was notified, when they were notified, what information was provided, and any responses received. This documentation becomes evidence of your compliance with breach notification requirements and helps you learn from incidents to prevent future breaches.

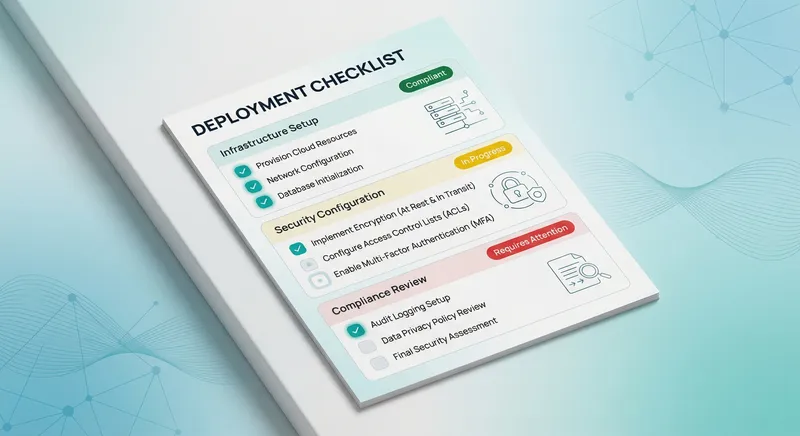

Deployment Validation Checklist

Before deploying autonomous workflows to production, conduct a comprehensive validation that verifies all security and compliance controls are implemented and functioning correctly. Use this checklist to ensure that nothing is overlooked.

- Data Classification: All data sources accessed by the workflow have been classified according to sensitivity level, and classification has been documented in your data governance policy.

- Data Residency: Data residency requirements have been identified for all restricted and confidential data, and workflow configurations enforce these requirements.

- Data Retention: Retention policies have been defined for all data classifications, and the workflow platform enforces automatic deletion when retention periods expire.

- Access Control: Agent permissions have been defined according to least privilege, implemented in your identity and access management system, and validated through testing.

- Service Accounts: All agent credentials are stored in a secrets management system, rotated on a defined schedule, and never embedded in code or configuration.

- Multi-Factor Authentication: MFA has been implemented for agent access to sensitive systems and for human access to critical workflow management functions.

- Encryption in Transit: All data transmitted by the workflow is encrypted using TLS 1.2 or higher, and TLS configuration has been validated against security standards.

- Encryption at Rest: All data stored by the workflow is encrypted using AES-256 or equivalent, and encryption is applied at multiple layers.

- Key Management: Encryption keys are stored in a dedicated key management system, rotated on a defined schedule, and access to keys is logged and audited.

- Audit Logging: All required events are logged with sufficient detail for forensic investigation, logs are immutable, and logs are retained according to policy.

- Log Centralization: Logs from all systems are aggregated in a central repository, standardized to a common format, and protected with encryption.

- Real-Time Alerting: Alerts have been configured for suspicious activities, alert rules have been tested, and your security team has procedures for responding to alerts.

- Agent Infrastructure: Agent infrastructure has been hardened by disabling unnecessary services, applying security patches, and implementing network segmentation.

- Vulnerability Scanning: Automated vulnerability scanning has been implemented and is running regularly, with findings being tracked and remediated.

- Penetration Testing: Penetration testing has been conducted on your autonomous workflow systems, findings have been remediated, and evidence of remediation has been documented.

- Compliance Mapping: Applicable compliance frameworks have been identified, security controls have been mapped to compliance requirements, and a compliance matrix has been created.

- Internal Audit: Internal audit procedures have been developed for each security control, internal audits have been conducted, and findings have been remediated.

- Policy Enforcement: Security and compliance policies have been integrated into workflow logic, policy-as-code has been implemented where possible, and guardrails prevent policy violations.

- Continuous Compliance: Automated compliance validation tools have been implemented and are running regularly, alerting your team to compliance violations.

- Incident Response: An incident response plan has been developed, the incident response team has been trained, and tabletop exercises have been conducted.

- Breach Notification: Breach notification obligations have been identified, notification templates have been prepared, and procedures for rapid notification have been established.

- Documentation: All security controls, policies, procedures, and compliance efforts have been documented and organized for easy access by auditors.

- Approval: Security and compliance teams have reviewed the deployment, confirmed that all security requirements have been met, and approved the deployment to production.

Work through this checklist with your InfoSec and compliance teams before deploying any autonomous workflow to production. Ensure that every item has been addressed and that evidence of compliance has been documented. This checklist transforms security and compliance validation from an abstract concept into a concrete, verifiable process that gives you confidence in your autonomous workflow deployments.
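The checklist can also be enforced as a deployment gate. In this minimal sketch, an item only counts as done when it is marked complete and has evidence attached; the `ChecklistItem` structure and the sample evidence references are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ChecklistItem:
    name: str
    complete: bool
    evidence: str = ""  # link or reference to supporting documentation


def deployment_blockers(items):
    """Names of items that are incomplete or lack documented evidence."""
    return [item.name for item in items
            if not item.complete or not item.evidence.strip()]


def approve_deployment(items):
    """Approve only when every item is complete and evidenced."""
    return not deployment_blockers(items)


# Illustrative subset of the checklist above.
checklist = [
    ChecklistItem("Data Classification", True, "governance-policy-section-3"),
    ChecklistItem("Encryption in Transit", True, ""),  # done, but no evidence recorded
    ChecklistItem("Penetration Testing", False),
]

if not approve_deployment(checklist):
    print("Deployment blocked by:", ", ".join(deployment_blockers(checklist)))
```

Requiring evidence, not just a ticked box, mirrors what auditors will ask for later and keeps the approval step honest.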

Continuous Improvement and Ongoing Validation

Security and compliance are not one-time achievements but ongoing processes. After deploying autonomous workflows, establish a continuous improvement program that regularly reviews and enhances your security posture.

Regular Security Reviews and Updates

Conduct regular security reviews at least quarterly, and more frequently after significant changes to your workflows or infrastructure. During security reviews, examine recent security incidents and near-misses, assess whether your controls would have prevented them, and implement improvements if necessary. Review threat intelligence to understand emerging threats relevant to your industry and autonomous workflow deployments, then update your controls to address these threats.

Stay informed about new vulnerabilities and security best practices. Subscribe to security mailing lists relevant to your technology stack. Participate in security communities and forums where practitioners share experiences and lessons learned. When new vulnerabilities are discovered or new best practices emerge, evaluate their relevance to your autonomous workflows and implement improvements as appropriate.

Maintain a security roadmap that prioritizes security improvements over time. This roadmap should reflect emerging threats, regulatory changes, and lessons learned from incidents. Allocate resources to implement improvements systematically rather than reactively in response to incidents. A proactive security roadmap demonstrates to stakeholders that your organization is committed to continuously improving security.

Compliance Evolution and Regulatory Changes

Regulatory requirements change frequently. New regulations are introduced, existing regulations are modified, and regulatory interpretations evolve. Monitor regulatory changes affecting your industry and autonomous workflow deployments. When regulatory changes occur, assess their impact on your current controls and implement changes as necessary to maintain compliance.

Establish relationships with compliance consultants or legal advisors who can help you understand regulatory requirements and their implications for your organization. These advisors can help you interpret ambiguous regulations, understand how regulations apply to autonomous systems specifically, and plan for upcoming regulatory changes.

Document your compliance strategy and how your controls address regulatory requirements. When regulations change, update this documentation to reflect the new requirements and how your controls address them. This documentation becomes valuable evidence during compliance audits, demonstrating that your organization understands regulatory requirements and takes them seriously.

Conclusion: Building Confidence in Autonomous Workflow Deployment

Deploying autonomous workflows at scale requires more than technical capability - it requires confidence that these systems will operate securely, maintain compliance with regulations, and protect sensitive data. The checklist and framework outlined in this guide provide your organization with a structured approach to establishing that confidence before production deployment.

The security and compliance landscape for autonomous systems continues to evolve. Regulators are developing specific guidance for AI and autonomous systems. Industry standards organizations are publishing frameworks for responsible AI deployment. Organizations across sectors are sharing lessons learned from their autonomous workflow deployments. By establishing a robust security and compliance program now, your organization positions itself to adapt quickly as the landscape evolves.

At A.I. PRIME, we understand that deploying autonomous workflows requires balancing innovation with governance. Our team of automation architects, compliance specialists, and security experts works with enterprise organizations to design and deploy autonomous systems that deliver transformative efficiency while maintaining the security and compliance controls your organization requires. We help you navigate the complexity of autonomous workflow deployment, establish governance frameworks that enable rather than constrain innovation, and build the confidence your stakeholders need to embrace autonomous systems.

If your organization is preparing to deploy autonomous workflows and needs expert guidance on security architecture, compliance validation, or governance integration, we'd welcome the opportunity to discuss how our services can support your initiative. Our approach combines deep technical expertise in autonomous systems with pragmatic understanding of compliance requirements and security best practices. We work with your teams to establish security controls that are effective, auditable, and aligned with your business objectives.

Whether you're deploying sales journey automation, operational process orchestration, data-driven agent networks, or other autonomous workflows, the security and compliance foundations matter. Start with the checklist provided in this guide, engage your InfoSec and compliance teams early in the deployment process, and establish clear validation procedures before moving to production. The investment in security and compliance upfront will pay dividends through reduced risk, faster audit cycles, and stakeholder confidence in your autonomous systems. Contact our team to discuss how we can support your autonomous workflow deployment and help you achieve the operational efficiency and governance alignment your organization requires.

The future of enterprise automation belongs to organizations that can deploy autonomous systems with confidence - systems that are secure, compliant, auditable, and aligned with business objectives. By following this comprehensive checklist and establishing a strong security and compliance program, your organization can join the leaders in autonomous workflow deployment, driving operational efficiency and competitive advantage while maintaining the governance controls that protect your business.