A.I. PRIME - Article

Integrating a Cognitive Orchestration Engine with ERP and CRM

Discover how cognitive orchestration engines integrate ERP and CRM systems to transform enterprises into intelligent, action-driven organizations.

Your enterprise systems hold vast amounts of data and operational logic, yet they remain siloed - isolated islands of information that rarely communicate seamlessly. ERP systems manage supply chains and financials. CRM platforms track customer interactions. WMS solutions optimize warehouse operations. Each excels at its specific function, but collectively, they create fragmentation that slows decision-making, increases costs, and leaves revenue opportunities untapped. The challenge facing most mid to large enterprises today is not a lack of data or systems, but rather the inability to orchestrate these systems into a unified, intelligent, and adaptive operating model. This is where a cognitive orchestration engine becomes transformative - not as another disconnected tool, but as the intelligent nervous system that turns your systems of record into systems of action.

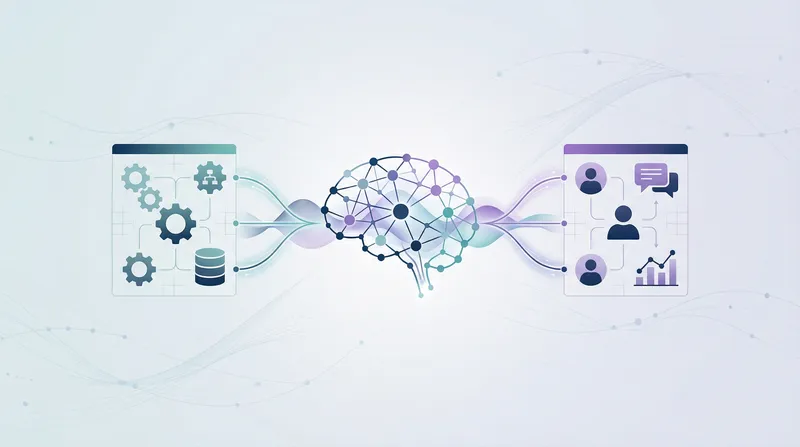

A cognitive orchestration engine bridges this gap by layering AI-driven intelligence across your existing enterprise infrastructure. Rather than replacing your ERP, CRM, or WMS, it connects to them, learns from them, and coordinates actions across them in real time. This technical integration transforms how your organization responds to market changes, customer needs, and operational inefficiencies. The result is faster decision cycles, reduced manual handoffs, lower operational costs, and the ability to scale automation without proportional increases in headcount.

In this comprehensive guide, we explore the technical patterns, architectural decisions, and implementation strategies required to successfully integrate a cognitive orchestration layer with your core enterprise systems. Whether you're a Chief Digital Officer evaluating transformation strategies or an IT Director tasked with implementing advanced automation, this post provides the technical depth and practical insights you need to move forward with confidence.

Understanding the Cognitive Orchestration Engine and Its Role in Enterprise Architecture

A cognitive orchestration engine is fundamentally different from traditional workflow automation tools. While legacy workflow engines execute predefined sequences of steps, a cognitive orchestration engine combines real-time data ingestion, machine learning inference, event-driven decision logic, and autonomous action execution. It doesn't just route work - it understands context, predicts outcomes, adapts to changing conditions, and continuously learns from results. Learn more in our post on Agent Network Deployment: Scaling Multi-Agent Orchestration for the Enterprise.

The core value proposition lies in transforming your enterprise from reactive to proactive. Instead of waiting for a customer service representative to manually check inventory, credit limits, and order history before responding to a customer inquiry, the cognitive orchestration engine monitors these systems continuously, anticipates issues, and pre-stages the necessary information or even initiates actions autonomously. This shift from systems of record to systems of action is what drives measurable business impact.

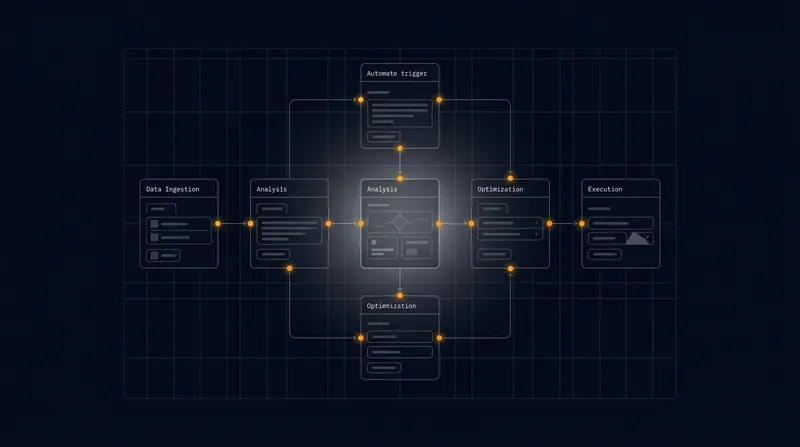

In architectural terms, the cognitive orchestration engine sits in the middle layer of your enterprise stack. It sits above your data infrastructure - which ingests raw data from ERP, CRM, WMS, and other systems - and below your user-facing applications and autonomous agents. This positioning allows it to serve as the intelligent coordinator between systems while remaining agnostic to how data is ultimately consumed or presented.

The cognitive orchestration engine transforms your enterprise from reactive to proactive by continuously monitoring systems, anticipating issues, and initiating intelligent actions without human intervention.

The engine operates on three core principles. First, event-driven architecture - every significant change in your enterprise systems (a new order, a customer payment, an inventory adjustment) generates an event that the orchestration engine can listen to and respond to. Second, contextual intelligence - the engine maintains a unified view of customer, product, and operational context drawn from multiple sources, enabling decisions that account for the full business picture. Third, adaptive execution - rather than following rigid scripts, the engine adjusts its behavior based on outcomes, learning which patterns and actions drive the best results.

Technical Patterns for Connecting to ERP Systems

ERP systems are the backbone of enterprise operations, managing everything from financial transactions to supply chain logistics. Successfully connecting a cognitive orchestration engine to your ERP requires careful consideration of data extraction methods, real-time event propagation, and transactional integrity. Learn more in our post on Integration Patterns: APIs, Event Streaming, and Connectors for Autonomous Agents.

API-First Connectivity and Data Extraction

Most modern ERP systems expose RESTful APIs that allow external systems to query data and trigger actions. The cognitive orchestration engine should leverage these APIs as its primary connection point. This approach offers several advantages: it respects the ERP system's internal logic and validation rules, it scales more efficiently than direct database connections, and it reduces the risk of corrupting data through improper access patterns.

However, API-first connectivity introduces latency considerations. A single decision that needs customer credit history, outstanding orders, and inventory levels might require three separate requests, each adding 100-200 milliseconds of latency. For real-time decision-making, this compounds quickly. The solution is to implement a local data cache within the orchestration engine that synchronizes with the ERP - on a schedule (perhaps every 15 minutes) for non-critical data, or through event-driven updates for high-velocity data like inventory levels.

The caching strategy should be intelligent. Rather than caching all ERP data, focus on the attributes that drive decisions most frequently. For a sales orchestration workflow, this might include customer credit limits, pricing tiers, product availability, and delivery windows. For supply chain orchestration, it might be supplier lead times, warehouse stock levels, and transportation capacity. By caching strategically, you reduce API load on the ERP while maintaining decision accuracy.
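A minimal sketch of such a cache, assuming a per-key time-to-live (TTL) is an acceptable freshness model. `TTLCache`, `fake_erp_fetch`, and the key format are hypothetical names used only for illustration; a real deployment would replace the fetch function with calls to the ERP's API.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Caches selected ERP attributes and refetches them once they exceed their TTL."""

    def __init__(self, fetch: Callable[[str], Any],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch    # hypothetical function that calls the ERP API
        self._clock = clock    # injectable clock so expiry can be tested
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str, ttl_seconds: float) -> Any:
        now = self._clock()
        cached = self._entries.get(key)
        if cached is not None and now - cached[0] < ttl_seconds:
            return cached[1]              # still fresh: no ERP call
        value = self._fetch(key)          # stale or missing: one ERP call
        self._entries[key] = (now, value)
        return value


# Stand-in for an ERP client; it records every call it receives.
calls = []

def fake_erp_fetch(key: str) -> dict:
    calls.append(key)
    return {"key": key, "credit_limit": 50_000}

fake_now = [0.0]
cache = TTLCache(fake_erp_fetch, clock=lambda: fake_now[0])

cache.get("customer:42:credit", ttl_seconds=900)   # miss, calls the ERP
cache.get("customer:42:credit", ttl_seconds=900)   # hit, served locally
fake_now[0] = 901.0                                # 15 minutes have passed
cache.get("customer:42:credit", ttl_seconds=900)   # expired, calls the ERP again
```

Passing the TTL per lookup lets one cache serve both slow-moving attributes (long TTL) and fast-moving ones (short TTL).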

Event Models and Real-Time Data Propagation

Beyond querying data on demand, the orchestration engine must receive notifications when critical data changes. This is where event models become essential. Modern ERP systems support event streaming through mechanisms like change data capture (CDC), webhook notifications, or native event buses. The orchestration engine should subscribe to these events and maintain a real-time event stream.

Consider a manufacturing scenario. When a production order completes in the ERP, an event fires. The orchestration engine captures this event, triggers a quality check workflow, updates customer dashboards, initiates shipment logistics, and notifies the sales team - all within seconds. Without event-driven integration, these actions would require manual intervention or batch processing that runs hours later.

Implementing event models requires establishing clear contracts between the ERP and the orchestration engine. Define which events are critical (order creation, payment received, inventory adjustment) versus informational (status updates, audit logs). Establish retry logic - if the orchestration engine is temporarily unavailable, the ERP should queue events and deliver them when connectivity resumes. Use event versioning to handle ERP upgrades that might alter event schemas without breaking the orchestration engine.

A practical pattern is to implement an event adapter layer - a lightweight service that sits between the ERP and the orchestration engine, translating ERP-native events into a standardized event schema that the orchestration engine understands. This decouples the orchestration engine from ERP-specific details and makes it easier to integrate multiple ERP systems (if your enterprise runs multiple instances or is consolidating systems).
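An adapter of this kind can be sketched as a pure translation function. The ERP field names (`evt_code`, `doc_no`, `ts`), the event codes, and the `CanonicalEvent` shape below are all assumptions made for illustration; a real ERP will use its own names.

```python
from dataclasses import dataclass
from typing import Any, Dict


@dataclass(frozen=True)
class CanonicalEvent:
    """Standardized event shape the orchestration engine consumes (assumed schema)."""
    event_type: str
    entity_id: str
    occurred_at: str
    schema_version: int
    payload: Dict[str, Any]


# Hypothetical mapping from ERP-native event codes to canonical event types.
EVENT_TYPE_MAP = {
    "PRD_ORD_CMPL": "ProductionOrderCompleted",
    "INV_ADJ": "InventoryAdjusted",
}

ENVELOPE_FIELDS = {"evt_code", "doc_no", "ts"}


def adapt_erp_event(raw: Dict[str, Any]) -> CanonicalEvent:
    code = raw["evt_code"]
    if code not in EVENT_TYPE_MAP:
        # Fail loudly so unmapped events are noticed rather than silently dropped.
        raise ValueError(f"unmapped ERP event code: {code}")
    return CanonicalEvent(
        event_type=EVENT_TYPE_MAP[code],
        entity_id=str(raw["doc_no"]),
        occurred_at=raw["ts"],
        schema_version=1,
        payload={k: v for k, v in raw.items() if k not in ENVELOPE_FIELDS},
    )


event = adapt_erp_event(
    {"evt_code": "PRD_ORD_CMPL", "doc_no": 7001, "ts": "2024-01-15T10:00:00Z", "qty": 250}
)
```

Supporting a second ERP then means adding another adapter function that emits the same `CanonicalEvent`, with no change to the engine.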

Transactional Consistency and Compensating Actions

When the orchestration engine triggers actions in the ERP - creating a purchase order, updating inventory, posting a journal entry - it must ensure transactional consistency. In distributed systems, this is challenging. If the orchestration engine sends a command to create a purchase order and the ERP system crashes before confirming the action, the orchestration engine may not know whether the order was created.

The solution is to implement idempotent operations and compensating actions. Make every command the orchestration engine sends idempotent - meaning it can be safely retried without creating duplicates. For example, instead of "create a purchase order," the command becomes "ensure a purchase order exists with ID X, with these line items." If the command is retried, the ERP recognizes the request and returns the existing order rather than creating a duplicate.
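The "ensure exists" semantics can be illustrated with an in-memory stand-in. `FakeErp` and `ensure_purchase_order` are hypothetical; a real ERP would typically provide this through a client-supplied key or an upsert-style endpoint, if it supports it at all.

```python
from typing import Dict, List


class FakeErp:
    """In-memory stand-in for an ERP that supports 'ensure exists' semantics."""

    def __init__(self) -> None:
        self.purchase_orders: Dict[str, dict] = {}

    def ensure_purchase_order(self, order_id: str, line_items: List[dict]) -> dict:
        existing = self.purchase_orders.get(order_id)
        if existing is not None:
            return existing               # retry: return the order already created
        order = {"id": order_id, "line_items": line_items}
        self.purchase_orders[order_id] = order
        return order


erp = FakeErp()
items = [{"sku": "A-100", "qty": 5}]
first = erp.ensure_purchase_order("PO-X", items)
retry = erp.ensure_purchase_order("PO-X", items)   # e.g. sent again after a timeout
```

The key design choice is that the caller, not the ERP, chooses the order ID, so a retry carries the same identity as the original request.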

For more complex workflows that span multiple systems, implement compensating actions. If a workflow involves creating a purchase order, reserving inventory, and then updating the sales order, but the inventory reservation fails, the orchestration engine should have a compensating action that cancels the purchase order. This ensures your systems remain consistent even when individual steps fail.
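One way to sketch this is a runner that pairs every step with its compensation and, on failure, undoes the completed steps in reverse order. The step names mirror the example above; the actions are placeholders, and `run_with_compensation` is an illustrative helper rather than a specific product feature.

```python
from typing import Callable, List, Tuple

# Each step is (name, action, compensation).
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_with_compensation(steps: List[Step]) -> List[str]:
    """Runs steps in order; on failure, compensates completed steps in reverse."""
    log: List[str] = []
    completed: List[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            action()
        except Exception:
            log.append(f"failed:{name}")
            for done_name, _, compensate in reversed(completed):
                compensate()
                log.append(f"compensated:{done_name}")
            return log
        completed.append(step)
        log.append(f"done:{name}")
    return log


def reserve_inventory() -> None:
    # Placeholder that simulates the failing step from the example.
    raise RuntimeError("insufficient stock")


result = run_with_compensation([
    ("create_purchase_order", lambda: None, lambda: None),
    ("reserve_inventory", reserve_inventory, lambda: None),
    ("update_sales_order", lambda: None, lambda: None),
])
```

In practice the compensations themselves should be idempotent, since they may also be retried.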

Integrating with CRM Systems for Customer-Centric Orchestration

While ERP systems manage internal operations, CRM systems are the enterprise's window into customer behavior and preferences. Integrating the orchestration engine with CRM enables customer-centric automation that dramatically improves sales effectiveness, customer retention, and lifetime value. Learn more in our post on Data Orchestration Best Practices to Power Predictive Scoring Loops.

Unified Customer Context and 360-Degree Views

A cognitive orchestration engine connected to CRM can maintain a unified customer context that combines data from multiple sources. This includes direct CRM data (contact information, interaction history, opportunity pipeline) as well as enriched data from ERP (purchase history, payment behavior, contract terms), WMS (delivery addresses, shipping preferences), and external sources (firmographic data, intent signals).

Building this unified view requires careful data mapping. Define a canonical customer entity that serves as the single source of truth. Map CRM customer records to ERP customer accounts, using account numbers or email addresses as the linking key. Handle duplicate records - customers often appear in CRM under slightly different names or email addresses. Implement fuzzy matching logic to identify likely duplicates and flag them for manual review or automated deduplication.
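As a rough illustration of fuzzy matching, the sketch below compares normalized company names with Python's standard-library `difflib.SequenceMatcher`. The normalization rules and the 0.85 threshold are assumptions chosen for the example; production matching usually combines several fields and a tuned threshold.

```python
from difflib import SequenceMatcher
from itertools import combinations
from typing import List, Tuple


def normalize(name: str) -> str:
    """Lowercases and strips punctuation and extra whitespace before comparing."""
    return " ".join(name.lower().replace(",", " ").replace(".", " ").split())


def similarity(a: str, b: str) -> float:
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def likely_duplicates(names: List[str], threshold: float = 0.85) -> List[Tuple[str, str]]:
    """Returns pairs of names similar enough to flag for review."""
    return [(a, b) for a, b in combinations(names, 2) if similarity(a, b) >= threshold]


records = ["Acme Corp.", "ACME Corp", "Globex Inc"]
pairs = likely_duplicates(records)
```

Pairwise comparison grows quadratically with the number of records, so larger datasets typically group candidates first (for example by email domain) before comparing.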

Once unified, this customer context becomes the foundation for intelligent orchestration. When a customer makes an inquiry, the orchestration engine can instantly access their full history, current contracts, pending orders, and payment status. Sales teams receive not just contact information but contextual intelligence - "this customer has a contract renewal coming in 30 days" or "this customer has had three failed deliveries in the past year, so we should offer expedited shipping."

Sales Journey Automation and Predictive Engagement

The orchestration engine transforms CRM from a record-keeping system into an active orchestrator of sales processes. Rather than sales representatives manually managing follow-ups, the engine can autonomously guide customers through defined journey stages based on their behavior and attributes.

A practical example: a prospect downloads a product whitepaper. The orchestration engine captures this event from your website analytics, enriches it with CRM data about the prospect's company and industry, and determines the next best action. If the prospect is in a high-value industry and their company recently received funding, the engine might trigger an immediate sales outreach. If they're in a lower-priority segment, it might add them to a nurture email sequence. If they match the profile of a customer who typically churns, it might flag them for a customer success check-in.

This requires mapping customer journey stages to specific triggers and actions. Define stages like "awareness," "consideration," "evaluation," and "decision." For each stage, specify the conditions that move a customer forward (engagement score above threshold, whitepaper downloaded, demo scheduled) and the actions to take (send targeted content, schedule call, create opportunity). The orchestration engine monitors customer behavior continuously and advances them through stages automatically.
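The stage mapping described above can be expressed as a small rule table. The stage names come from the text; the field names, the threshold of 60, and the action identifiers are illustrative assumptions.

```python
from typing import Callable, Dict, List, Tuple

STAGES = ["awareness", "consideration", "evaluation", "decision"]

# For each stage: (condition to move forward, action to take when advancing).
RULES: Dict[str, Tuple[Callable[[dict], bool], str]] = {
    "awareness": (lambda c: c.get("whitepaper_downloaded", False), "send_targeted_content"),
    "consideration": (lambda c: c.get("engagement_score", 0) > 60, "schedule_call"),
    "evaluation": (lambda c: c.get("demo_scheduled", False), "create_opportunity"),
}


def advance(customer: dict) -> List[str]:
    """Advances the customer as far as their signals allow; returns triggered actions."""
    actions: List[str] = []
    while customer["stage"] in RULES:
        condition, action = RULES[customer["stage"]]
        if not condition(customer):
            break
        customer["stage"] = STAGES[STAGES.index(customer["stage"]) + 1]
        actions.append(action)
    return actions


prospect = {"stage": "awareness", "whitepaper_downloaded": True, "engagement_score": 72}
actions = advance(prospect)
```

Keeping conditions and actions in data rather than in code paths makes it easier to let business users adjust the journey later.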

Predictive engagement layers add another dimension. Machine learning models trained on historical CRM data can predict which customers are most likely to convert, which are at risk of churn, and which are ready for upsell. The orchestration engine uses these predictions to prioritize actions - focusing sales team attention on high-probability opportunities, triggering retention campaigns for at-risk customers, and timing upsell conversations for maximum receptiveness.

Real-Time Synchronization and Data Consistency

CRM systems are highly transactional - sales representatives constantly update records, create new contacts, and modify opportunities. The orchestration engine must stay synchronized with these changes in real time. This requires bidirectional data flow: the orchestration engine reads data from CRM to inform decisions, and it writes data back to CRM to record actions it takes.

Implement webhook subscriptions for critical CRM events: new lead created, opportunity stage changed, contact updated, activity logged. When these events fire, the orchestration engine updates its internal state and may trigger downstream actions. For example, when a customer moves to the "negotiation" stage in CRM, the orchestration engine might automatically pull their contract templates from document management, check inventory availability, and prepare a proposal.

Conversely, when the orchestration engine takes actions - sending an email, scheduling a meeting, updating customer information - it should record these actions back in CRM. This creates an audit trail and ensures that sales representatives always see the most current information. A sales rep shouldn't call a prospect only to discover that the orchestration engine already sent them a proposal two hours earlier.

Handle synchronization conflicts carefully. If a sales representative manually updates a customer record at the same time the orchestration engine is writing data, which version wins? Establish a conflict resolution strategy: perhaps the orchestration engine always defers to human updates, or perhaps it only updates specific fields (like engagement scores) while humans retain control of others (like opportunity amounts). Make these rules explicit and configurable.
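A field-ownership rule is one simple way to make such a strategy explicit. In the sketch below, the set of engine-owned fields is an assumed configuration value, and anything outside it is left as the human entered it.

```python
from typing import Dict, Tuple

# Assumed configuration: fields the engine may overwrite; all others belong to humans.
ENGINE_OWNED_FIELDS = {"engagement_score", "last_automated_touch"}


def merge_engine_update(crm_record: Dict, engine_update: Dict) -> Tuple[Dict, Dict]:
    """Applies only engine-owned fields; returns (merged record, rejected changes)."""
    merged = dict(crm_record)
    rejected = {}
    for field, value in engine_update.items():
        if field in ENGINE_OWNED_FIELDS:
            merged[field] = value
        else:
            rejected[field] = value   # keep for logging or human review
    return merged, rejected


crm_record = {"opportunity_amount": 50_000, "engagement_score": 40}
engine_update = {"opportunity_amount": 45_000, "engagement_score": 85}
merged, rejected = merge_engine_update(crm_record, engine_update)
```

Returning the rejected changes, rather than discarding them, gives you a record of what the engine wanted to change and why it was not allowed to.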

Mapping Strategies and Data Transformation Patterns

Connecting disparate enterprise systems requires careful data mapping. Each system has its own data model, terminology, and business logic. The orchestration engine must translate between these different models seamlessly.

Canonical Data Models and Transformation Layers

The most effective approach is to define a canonical data model - an enterprise-wide standard representation of key entities like customers, orders, products, and inventory. This canonical model serves as the Rosetta Stone between different systems.

For example, the ERP might represent a customer as an "account" with fields like "account number," "billing address," and "payment terms." The CRM might represent the same customer as a "company" with fields like "account name," "headquarters," and "industry." The orchestration engine defines a canonical "customer" entity with normalized fields, then maps both the ERP account and CRM company to this canonical representation.

Implement this mapping through a dedicated transformation layer - a service or component that converts data between system-specific schemas and the canonical model. This layer should be configurable, allowing business users to define mappings without code changes. For example, "ERP.Account.AccountNumber maps to Canonical.Customer.ID" or "CRM.Company.Industry maps to Canonical.Customer.IndustrySegment."
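A configurable mapping of this kind can be as simple as a lookup table held as data. The two mappings quoted above are included; the remaining field names are assumptions added to round out the example.

```python
from typing import Any, Dict

# Source schema -> {source field: canonical customer field}. Held as data so it
# could be loaded from configuration instead of being hard-coded.
MAPPINGS: Dict[str, Dict[str, str]] = {
    "ERP.Account": {
        "AccountNumber": "ID",
        "BillingAddress": "BillingAddress",   # assumed canonical field name
        "PaymentTerms": "PaymentTerms",       # assumed canonical field name
    },
    "CRM.Company": {
        "AccountName": "Name",                # assumed canonical field name
        "Industry": "IndustrySegment",
    },
}


def to_canonical(source: str, record: Dict[str, Any]) -> Dict[str, Any]:
    """Converts a system-specific record into canonical customer fields."""
    mapping = MAPPINGS[source]
    # Unmapped source fields are dropped rather than guessed at.
    return {mapping[field]: value for field, value in record.items() if field in mapping}


erp_view = to_canonical(
    "ERP.Account",
    {"AccountNumber": "10042", "PaymentTerms": "NET30", "InternalFlag": "x"},
)
crm_view = to_canonical("CRM.Company", {"AccountName": "Acme", "Industry": "Manufacturing"})
```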

Handle complex transformations through mapping rules. Some mappings are simple one-to-one conversions. Others require logic. For example, mapping ERP sales to a "customer lifetime value" field might require summing all orders, adjusting for returns, and applying a discount factor for recency. Define these rules explicitly, version them, and maintain audit logs of which rule version was applied to which data.

Handling Multi-System Hierarchies and Relationships

Enterprise systems often have complex hierarchies. A customer might have multiple divisions, each with multiple locations, each with multiple contacts. The CRM might organize this as a company with multiple accounts and contacts. The ERP might represent it as a customer with multiple ship-to addresses. The orchestration engine must understand these hierarchies and maintain consistency across systems.

Implement a relationship registry - a service that maintains mappings between system-specific identifiers. When the CRM creates a new account, the registry captures the account ID and any associated ERP customer numbers. When the orchestration engine needs to find all orders for a customer, it uses the registry to look up all associated ERP accounts, then queries all of them.
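The lookup flow described above might look like the following sketch. `RelationshipRegistry` and the `fetch_orders` callback are hypothetical; a real registry would be a persistent service rather than an in-memory dictionary.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Set


class RelationshipRegistry:
    """Maps a CRM account ID to the ERP customer numbers associated with it."""

    def __init__(self) -> None:
        self._erp_by_crm: Dict[str, Set[str]] = defaultdict(set)

    def link(self, crm_account_id: str, erp_customer_number: str) -> None:
        self._erp_by_crm[crm_account_id].add(erp_customer_number)

    def erp_accounts_for(self, crm_account_id: str) -> List[str]:
        return sorted(self._erp_by_crm.get(crm_account_id, set()))


def orders_for_customer(registry: RelationshipRegistry, crm_account_id: str,
                        fetch_orders: Callable[[str], List[dict]]) -> List[dict]:
    """Queries every linked ERP account and combines the results."""
    orders: List[dict] = []
    for erp_id in registry.erp_accounts_for(crm_account_id):
        orders.extend(fetch_orders(erp_id))
    return orders


registry = RelationshipRegistry()
registry.link("crm-001", "ERP-200")
registry.link("crm-001", "ERP-100")

# Stand-in for an ERP query keyed by customer number.
fake_orders = {"ERP-100": [{"order": "SO-1"}], "ERP-200": [{"order": "SO-2"}, {"order": "SO-3"}]}
all_orders = orders_for_customer(registry, "crm-001", lambda erp_id: fake_orders[erp_id])
```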

Handle hierarchy changes carefully. If a customer reorganizes and moves a division from one parent company to another, this change must propagate through multiple systems. Define change propagation rules: which changes are automatically synchronized, which require human approval, which trigger notifications to system owners.

Data Quality and Enrichment

Raw data from enterprise systems is often incomplete or inconsistent. A customer address might be missing a zip code. A product description might have formatting inconsistencies. Contact phone numbers might be in different formats. The orchestration engine should implement data quality checks and enrichment logic.

Define data quality rules for critical entities. For customers, require a valid address, email, and phone number. For orders, require a valid product ID, quantity, and delivery date. When data fails quality checks, implement remediation workflows - automatically attempt to fill missing data from alternative sources, flag records for manual review, or prevent downstream actions until data is corrected.
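Quality rules like these can be kept as a table of per-field checks. The validators below are deliberately loose assumptions (a basic email pattern, at least seven digits in a phone number) and would need tightening for real data.

```python
import re
from typing import Callable, Dict, List, Optional

EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Field -> validator. Each validator receives the raw value, or None if missing.
CUSTOMER_RULES: Dict[str, Callable[[Optional[str]], bool]] = {
    "address": lambda v: bool(v and v.strip()),
    "email": lambda v: bool(v and EMAIL_RE.match(v)),
    "phone": lambda v: bool(v and len(re.sub(r"\D", "", v)) >= 7),
}


def failed_checks(record: dict, rules: Dict[str, Callable[[Optional[str]], bool]]) -> List[str]:
    """Returns the names of fields that are missing or fail their rule."""
    return [field for field, is_valid in rules.items() if not is_valid(record.get(field))]


customer = {"address": "1 Main St", "email": "bad-email", "phone": "+1 (555) 010-9999"}
problems = failed_checks(customer, CUSTOMER_RULES)
```

The returned list can drive the remediation step: an empty list lets the workflow proceed, while a non-empty one routes the record to enrichment or manual review.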

Enrichment services can augment system data with external sources. Firmographic data services can enrich company records with industry, revenue, and employee count. Email validation services can verify email addresses and identify corporate vs. personal accounts. Intent signal providers can indicate which companies are actively searching for solutions like yours. Integrate these enrichment services into your data pipeline to ensure the orchestration engine always has the richest possible context.

Event Models and Real-Time Decision Architecture

The true power of a cognitive orchestration engine emerges when it operates on real-time events. Rather than batch processing that runs nightly, event-driven architecture enables responses within seconds of a business event, or within milliseconds where the use case demands it.

Event Sourcing and Stream Processing

Implement event sourcing - a pattern where every change to system state is captured as an immutable event and stored in an event log. When an order is created, an "OrderCreated" event is recorded. When it's shipped, an "OrderShipped" event is recorded. This event log becomes the source of truth, and all system state can be reconstructed by replaying events.
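Replaying an event log to rebuild state can be sketched in a few lines. The event names come from the text; the `order_id` and `total` fields are assumptions for the example.

```python
from typing import Dict, List


def apply_event(orders: Dict[str, dict], event: dict) -> Dict[str, dict]:
    """Returns a new state with one event applied; the log itself is never mutated."""
    updated = dict(orders)
    if event["type"] == "OrderCreated":
        updated[event["order_id"]] = {"status": "created", "total": event["total"]}
    elif event["type"] == "OrderShipped":
        updated[event["order_id"]] = {**updated[event["order_id"]], "status": "shipped"}
    return updated


def replay(log: List[dict]) -> Dict[str, dict]:
    """Rebuilds current state from scratch by applying every event in order."""
    state: Dict[str, dict] = {}
    for event in log:
        state = apply_event(state, event)
    return state


event_log = [
    {"type": "OrderCreated", "order_id": "SO-1", "total": 1200},
    {"type": "OrderCreated", "order_id": "SO-2", "total": 300},
    {"type": "OrderShipped", "order_id": "SO-1"},
]
current = replay(event_log)
```

Replaying only a prefix of the log yields the state as it was at that point in time, which is useful for audits and debugging.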

Stream processing engines consume these events and apply business logic in real time. A stream processor might listen for "OrderCreated" events from the ERP. When one arrives, it enriches the order with customer data from CRM, checks inventory from WMS, calculates fulfillment cost, and determines the optimal warehouse for picking. All of this happens within seconds of order creation, before the order even reaches the warehouse staff.

Define your event taxonomy carefully. What constitutes an event worth capturing? Typically, events represent state changes that other systems care about - a new customer, a new order, a payment received, an inventory adjustment. Don't capture every keystroke or database update; focus on business-meaningful events. Define event schemas that include essential context - the entity that changed, what changed, when it changed, and who or what initiated the change.

Implement event versioning to handle schema evolution. As your business processes change, event schemas will evolve. Version each event schema and include the version in every event. This allows the orchestration engine to handle both old and new event formats, enabling gradual migration without breaking existing integrations.
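One common way to handle both formats is to "upcast" older events to the latest schema before processing them. The schema change below (renaming `customer` to `customer_id` and defaulting `currency` to USD) is invented purely to illustrate the mechanism.

```python
from typing import Callable, Dict

LATEST_VERSION = 2


def upcast_v1_to_v2(event: dict) -> dict:
    # Assumed change for illustration: v2 renames "customer" and adds "currency".
    payload = dict(event["payload"])
    payload["customer_id"] = payload.pop("customer")
    payload.setdefault("currency", "USD")   # assumed default for legacy events
    return {**event, "schema_version": 2, "payload": payload}


# Version N -> function that upgrades an event from N to N + 1.
UPCASTERS: Dict[int, Callable[[dict], dict]] = {1: upcast_v1_to_v2}


def normalize_event(event: dict) -> dict:
    """Upgrades an event step by step until it matches the latest schema."""
    while event["schema_version"] < LATEST_VERSION:
        event = UPCASTERS[event["schema_version"]](event)
    return event


old_event = {"type": "OrderCreated", "schema_version": 1,
             "payload": {"customer": "C-9", "total": 120}}
upgraded = normalize_event(old_event)
```

Downstream handlers then only need to understand the latest version, while producers can migrate gradually.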

Complex Event Processing and Pattern Detection

Beyond reacting to individual events, the orchestration engine can detect patterns across events. Complex event processing (CEP) engines can identify sequences, correlations, and anomalies that signal important business situations.

For example, detect a fraud pattern: "customer places three orders in one day, each shipping to a different address, totaling more than $10,000." When this pattern is detected, the orchestration engine might automatically flag the orders for review, trigger additional verification steps, or contact the customer to confirm legitimacy. Because it correlates several signals across events, this kind of detection is more precise than a single-threshold rule (for example, flagging every order over $10,000), which might flag legitimate bulk orders.
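The fraud pattern above can be approximated without a dedicated CEP engine, as in this sketch. It interprets "each shipping to a different address" as at least three distinct addresses inside a 24-hour window; the field names and that interpretation are assumptions.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Dict, List


def detect_fraud_pattern(orders: List[dict], window: timedelta = timedelta(days=1),
                         min_orders: int = 3, min_total: float = 10_000) -> List[str]:
    """Flags customers with >= min_orders orders within `window`, shipped to at
    least min_orders distinct addresses, totaling more than min_total."""
    by_customer: Dict[str, List[dict]] = defaultdict(list)
    for order in orders:
        by_customer[order["customer_id"]].append(order)

    flagged = []
    for customer_id, items in by_customer.items():
        items.sort(key=lambda o: o["placed_at"])
        for i, start in enumerate(items):
            in_window = [o for o in items[i:] if o["placed_at"] - start["placed_at"] <= window]
            addresses = {o["ship_to"] for o in in_window}
            total = sum(o["amount"] for o in in_window)
            if len(in_window) >= min_orders and len(addresses) >= min_orders and total > min_total:
                flagged.append(customer_id)
                break
    return flagged


orders = [
    {"customer_id": "C1", "placed_at": datetime(2024, 3, 1, 9), "ship_to": "addr-1", "amount": 4000},
    {"customer_id": "C1", "placed_at": datetime(2024, 3, 1, 12), "ship_to": "addr-2", "amount": 3500},
    {"customer_id": "C1", "placed_at": datetime(2024, 3, 1, 18), "ship_to": "addr-3", "amount": 3000},
    {"customer_id": "C2", "placed_at": datetime(2024, 3, 1, 10), "ship_to": "addr-9", "amount": 25000},
]
flagged = detect_fraud_pattern(orders)
```

Note that customer C2's single large order is not flagged, which is the legitimate bulk-order case mentioned above.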

Another example: detect customer churn risk. If a customer who typically orders monthly hasn't placed an order in 45 days, and their last interaction with your company was three months ago, the orchestration engine detects this pattern and triggers a retention campaign. These patterns combine data from multiple systems - order history from ERP, interaction history from CRM, support tickets from service systems.

Implement machine learning models that learn patterns from historical data. Train models on past customer behavior to predict which customers are most likely to churn, which are ready for upsell, which might have a support issue. The orchestration engine uses these predictions to trigger proactive actions.

Latency Considerations and Real-Time SLAs

Event-driven architecture introduces latency at every stage. An event is generated in the ERP, transmitted through the event bus, processed by the orchestration engine, and then an action is triggered. Each stage adds milliseconds of latency. For most business processes, sub-second latency is acceptable. But for high-frequency trading, fraud detection, or real-time pricing, even 100 milliseconds of latency can be problematic.

Establish clear latency SLAs for different event types. For example: "inventory adjustment events must be processed within 5 seconds" or "fraud detection patterns must be evaluated within 100 milliseconds." Design your architecture to meet these SLAs. This might mean deploying the orchestration engine in the same data center as your ERP to minimize network latency, or caching frequently accessed data locally to avoid external API calls.

Use message queues to decouple event production from processing. When an event is generated, it's immediately written to a queue. The orchestration engine consumes events from the queue and processes them at its own pace. This prevents a slow orchestration engine from blocking the ERP system. However, queues introduce latency - events sit in the queue waiting to be processed. Balance throughput and latency by tuning queue batch sizes and processing intervals.

Monitor latency continuously. Measure the time from event generation to action execution for each event type. Set up alerts when latency exceeds SLAs. Use this data to identify bottlenecks - perhaps a particular API call is slow, or a machine learning model is computationally expensive. Optimize iteratively.
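A minimal sketch of an SLA check, using the two example SLAs given earlier (5 seconds for inventory adjustments, 100 milliseconds for fraud evaluation). The event type names and the sample format are assumptions; in practice the timestamps would come from your event bus and action logs.

```python
from typing import Dict, List, Tuple

# Event type -> maximum allowed seconds from event generation to action execution.
SLA_SECONDS: Dict[str, float] = {
    "InventoryAdjusted": 5.0,
    "FraudPatternEvaluated": 0.1,
}


def sla_breaches(samples: List[Tuple[str, float, float]]) -> List[Tuple[str, float]]:
    """samples holds (event_type, generated_at, action_executed_at) in seconds.
    Returns (event_type, latency) for every sample that exceeded its SLA."""
    breaches = []
    for event_type, generated_at, executed_at in samples:
        latency = executed_at - generated_at
        limit = SLA_SECONDS.get(event_type)
        if limit is not None and latency > limit:
            breaches.append((event_type, round(latency, 3)))
    return breaches


samples = [
    ("InventoryAdjusted", 100.0, 103.2),       # within the 5-second SLA
    ("InventoryAdjusted", 200.0, 207.5),       # breach
    ("FraudPatternEvaluated", 300.0, 300.05),  # within the 100 ms SLA
]
breaches = sla_breaches(samples)
```

The list of breaches is what an alerting rule would consume; grouping it by event type points to the stage worth optimizing first.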

Effective event-driven architecture requires establishing clear latency SLAs, monitoring performance continuously, and optimizing bottlenecks iteratively to ensure the orchestration engine responds in real time.

Governance, Security, and Compliance in Orchestrated Environments

As the orchestration engine becomes more autonomous, governance becomes critical. You need visibility into what actions the engine is taking, confidence that it's making good decisions, and assurance that it's operating within compliance boundaries.

Audit Trails and Decision Transparency

Implement comprehensive audit logging for every action the orchestration engine takes. When it creates an order, sends an email, updates a customer record, or approves a discount, log it. Include the decision context - what data was available, what rules were applied, what predictions were made. This creates a complete audit trail that satisfies compliance requirements and enables troubleshooting when something goes wrong.

Make decisions transparent and explainable. If a machine learning model recommends declining a customer's credit application, the orchestration engine should explain why - "customer has three unpaid invoices exceeding 60 days" or "customer's industry has elevated default risk." This transparency builds trust with stakeholders and helps identify biases in the model.

Implement decision versioning. If you update business rules or retrain machine learning models, version these changes. Maintain a history of which rule version or model version was used for each decision. This is critical for compliance - regulators may ask "explain the decision made for customer X on date Y," and you need to be able to retrieve the exact rules and data that were in effect at that time.
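An audit entry that captures the decision context together with rule and model versions might look like the sketch below. The field names and version labels are illustrative assumptions, not a prescribed schema.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict


def build_audit_record(action: str, entity_id: str, context: Dict[str, Any],
                       rule_version: str, model_version: str, explanation: str) -> str:
    """Serializes one decision as a JSON line suitable for an append-only log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "entity_id": entity_id,
        "context": context,               # the data the decision was based on
        "rule_version": rule_version,     # which rule set was in effect
        "model_version": model_version,   # which model produced the prediction
        "explanation": explanation,       # human-readable reason
    }
    return json.dumps(record, sort_keys=True)


line = build_audit_record(
    action="decline_credit_application",
    entity_id="customer-X",
    context={"unpaid_invoices_over_60_days": 3},
    rule_version="credit-rules-v7",          # hypothetical version label
    model_version="default-risk-2024-01",    # hypothetical version label
    explanation="customer has three unpaid invoices exceeding 60 days",
)
parsed = json.loads(line)
```

Storing the versions alongside each decision is what makes it possible to answer "which rules applied to customer X on date Y" later.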

Access Control and Data Governance

The orchestration engine accesses data from multiple systems and triggers actions across them. Implement fine-grained access control to ensure the engine only accesses and modifies data it's authorized to. This is particularly important in regulated industries like healthcare and finance, where data access is strictly controlled.

Use role-based access control (RBAC), supplemented by attribute-based access control (ABAC) where a decision depends on attributes of the request, such as transaction value. Define roles for the orchestration engine - perhaps "order processor," "customer service," "financial approver." Each role has specific permissions - which systems it can read from, which it can write to, what value thresholds it can approve. If the orchestration engine is configured with the "order processor" role, it can create orders but cannot modify customer credit limits.
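A permission check combining a role's allowed operations with a value threshold can be sketched as follows. The role names follow the text; the operations, system names, and limits are assumptions for illustration.

```python
from typing import Dict

ROLES: Dict[str, dict] = {
    "order_processor": {
        "permissions": {("erp", "create_order"), ("crm", "read_customer")},
        "max_value": 25_000,     # assumed approval threshold
    },
    "financial_approver": {
        "permissions": {("erp", "create_order"), ("erp", "update_credit_limit")},
        "max_value": 250_000,    # assumed approval threshold
    },
}


def is_allowed(role: str, system: str, operation: str, value: float = 0) -> bool:
    """Role check first (RBAC), then an attribute check on the value (ABAC-style)."""
    spec = ROLES.get(role)
    if spec is None:
        return False                                  # unknown roles get nothing
    if (system, operation) not in spec["permissions"]:
        return False
    return value <= spec["max_value"]


can_create = is_allowed("order_processor", "erp", "create_order", value=5_000)
can_change_credit = is_allowed("order_processor", "erp", "update_credit_limit")
over_limit = is_allowed("order_processor", "erp", "create_order", value=40_000)
```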

Implement data masking for sensitive information. When the orchestration engine retrieves customer data that includes credit card numbers or social security numbers, mask this data in logs and audit trails. Only unmask it when necessary for specific operations, and log those unmasking events.
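As a rough sketch, masking can be applied to text before it is written to logs. The patterns below assume 16 to 19 digit card numbers and US-style social security numbers and keep only the last four digits; they are simplistic and may also match other long digit sequences, so treat them as a starting point rather than a complete detector.

```python
import re

# 16-19 digits, optionally separated by spaces or hyphens; captures the last four.
CARD_RE = re.compile(r"\b(?:\d[ -]?){12,15}(\d{4})\b")
# US-style social security number; captures the last four digits.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-(\d{4})\b")


def mask_sensitive(text: str) -> str:
    """Replaces card and social security numbers with masked forms."""
    text = CARD_RE.sub(lambda m: "**** **** **** " + m.group(1), text)
    return SSN_RE.sub(lambda m: "***-**-" + m.group(1), text)


masked = mask_sensitive("card 4111 1111 1111 1111 ssn 123-45-6789")
```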

Establish data governance policies that define data ownership, retention, and usage. Who owns customer data - is it the CRM team, the customer service team, or the data governance office? What's the retention period for transactional data - keep it forever or purge after seven years? Can the orchestration engine use customer data for purposes beyond the original intent - for example, can it use purchase data for predictive modeling or only for transaction processing?

Compliance and Regulatory Alignment

Depending on your industry and geography, you may be subject to strict compliance requirements, such as GDPR in Europe, HIPAA in healthcare, PCI DSS for payment processing, and SOX for financial reporting. The orchestration engine must operate within these compliance boundaries.

Map your orchestration workflows to compliance requirements. If GDPR requires that customers can request deletion of their data, implement a workflow that handles data deletion requests - identifying all systems that store the customer's data, deleting it from each system, and logging the deletion for audit purposes. If HIPAA requires encryption of patient data, ensure the orchestration engine encrypts data both in transit and at rest.

Implement consent management. If a customer opts out of marketing communications, the orchestration engine must respect that preference and not trigger marketing workflows for that customer. If a customer revokes consent for data processing, the orchestration engine must cease using their data for non-essential purposes.

Conduct regular compliance audits. Review audit logs to ensure the orchestration engine is operating within compliance boundaries. Verify that sensitive data is properly protected, that access controls are effective, and that decisions are being made fairly without bias. Use these audits to identify gaps and remediate them before regulators do.

Deployment Patterns and Scaling Considerations

Once you've designed the orchestration architecture, deployment becomes the next challenge. How do you roll out this complex system across your enterprise without disrupting operations?

Phased Rollout and Pilot Programs

Don't attempt to orchestrate all systems simultaneously. Start with a pilot program focused on a specific use case - perhaps automating the order-to-cash process or the lead-to-customer journey. Choose a use case with clear ROI, executive sponsorship, and a defined success metric. This pilot allows you to validate the architecture, identify integration challenges, and build organizational confidence before broader rollout.

Implement the pilot in a controlled environment - perhaps a single business unit or geographic region. This limits the blast radius if something goes wrong. Run the pilot in parallel with existing processes, so if the orchestration engine fails, manual processes can take over. Measure the pilot's performance against the success metric - reduced cycle time, lower cost, improved quality, higher customer satisfaction.

Once the pilot succeeds, expand to additional use cases and business units. Each expansion should follow the same pattern - clear success metric, controlled environment, parallel operation with fallback. This phased approach reduces risk and builds momentum as each success demonstrates the value of orchestration.

Architecture for Scale and High Availability

As the orchestration engine handles more workflows and processes more events, it must scale horizontally. Design the architecture with stateless components that can be replicated across multiple servers. The orchestration engine itself should be stateless - any instance can process any event. State should be externalized to databases or caches that all instances can access.

Implement load balancing to distribute events across multiple orchestration engine instances. Use message queues to decouple event production from processing - events are queued, and orchestration instances consume them at their own pace. If one instance is slow or unavailable, other instances pick up its work.

Design for high availability and disaster recovery. The orchestration engine is a critical system - if it fails, business processes grind to a halt. Deploy it across multiple data centers or availability zones. Replicate data continuously so that if one instance fails, another can take over without data loss. Establish recovery time objectives (RTO) and recovery point objectives (RPO) - how quickly must the system recover, and how much data loss is acceptable.

Monitor performance continuously. Track metrics like event processing latency, queue depth, error rates, and resource utilization. Set up alerts when these metrics exceed thresholds. Use this data to identify when scaling is needed - perhaps you need to add more orchestration instances, or optimize a slow API call.
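As a rough illustration, threshold-based alerting reduces to comparing a metrics snapshot against configured limits. The metric names and limit values below are invented for the example; real thresholds should come from your own baselines.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Threshold:
    metric: str
    limit: float


# Illustrative limits only; tune these against observed behavior.
THRESHOLDS = [
    Threshold("p95_latency_ms", 500.0),
    Threshold("queue_depth", 1000.0),
    Threshold("error_rate", 0.02),
    Threshold("cpu_utilization", 0.85),
]


def breached(snapshot: Dict[str, float], thresholds: List[Threshold]) -> List[str]:
    """Return the names of metrics whose current value exceeds its limit."""
    return [t.metric for t in thresholds if snapshot.get(t.metric, 0.0) > t.limit]


snapshot = {
    "p95_latency_ms": 620.0,
    "queue_depth": 240.0,
    "error_rate": 0.004,
    "cpu_utilization": 0.91,
}
alerts = breached(snapshot, THRESHOLDS)
```

Here latency and CPU utilization are over their limits while queue depth is low, which would point toward a slow downstream call or undersized instances rather than a backlog of events.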

Connector Architecture and System Abstraction

The orchestration engine should not be tightly coupled to specific ERP, CRM, or WMS systems. Instead, implement a connector architecture where each system integration is encapsulated in a connector. This allows you to swap systems, upgrade versions, or add new systems without changing the core orchestration engine.

Each connector should expose a standardized interface - methods to query data, subscribe to events, and trigger actions. The orchestration engine calls these standard methods, and the connector translates them to system-specific API calls or database queries. This abstraction means that if you upgrade your CRM from version 1.0 to 2.0, you only need to update the CRM connector, not the entire orchestration engine.
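One way to express such a standardized interface is an abstract base class with the three operations named above. The method names, the `contact.updated` event, and the fake CRM below are assumptions made for illustration; a real connector would translate these calls into the vendor's API.

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict, List


class Connector(ABC):
    """Hypothetical standard interface the orchestration engine codes against."""

    @abstractmethod
    def query(self, entity: str, filters: dict) -> List[dict]: ...

    @abstractmethod
    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None: ...

    @abstractmethod
    def trigger(self, action: str, payload: dict) -> dict: ...


class FakeCrmConnector(Connector):
    """In-memory stand-in; a real one would call the CRM's API instead."""

    def __init__(self) -> None:
        self._contacts = [
            {"id": "c1", "status": "lead"},
            {"id": "c2", "status": "customer"},
        ]
        self._handlers: Dict[str, List[Callable[[dict], None]]] = {}

    def query(self, entity: str, filters: dict) -> List[dict]:
        if entity != "contact":
            return []
        return [
            dict(c) for c in self._contacts
            if all(c.get(k) == v for k, v in filters.items())
        ]

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._handlers.setdefault(event_type, []).append(handler)

    def trigger(self, action: str, payload: dict) -> dict:
        if action == "convert_lead":
            for contact in self._contacts:
                if contact["id"] == payload["id"]:
                    contact["status"] = "customer"
                    for handler in self._handlers.get("contact.updated", []):
                        handler(dict(contact))
                    return {"ok": True}
        return {"ok": False}


# The engine only sees the Connector type, never the CRM-specific class.
seen: List[dict] = []
crm: Connector = FakeCrmConnector()
crm.subscribe("contact.updated", seen.append)
leads = crm.query("contact", {"status": "lead"})
outcome = crm.trigger("convert_lead", {"id": leads[0]["id"]})
```

Swapping the CRM then means writing another `Connector` subclass; the calling code at the bottom stays the same.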

Implement connectors as microservices that can be deployed and scaled independently. If the CRM connector is slow, scale it independently without scaling the entire orchestration engine. If you add a new system, deploy a new connector without affecting existing systems.

Version connectors carefully. Each connector version should specify which system versions it supports. If you upgrade your ERP, verify that the connector supports the new version before upgrading. Maintain backward compatibility when possible - if the new ERP version adds a new field, the connector should still work with the old version that doesn't have that field.
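A compatibility check of this kind can be as simple as a declared version range per connector. The sketch below assumes dotted numeric versions with the same number of components, and the manifest fields and the optional `incoterms` field are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '2.4' into (2, 4) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


@dataclass(frozen=True)
class ConnectorManifest:
    name: str
    connector_version: str
    min_system_version: str   # inclusive
    max_system_version: str   # inclusive

    def supports(self, system_version: str) -> bool:
        low = parse_version(self.min_system_version)
        high = parse_version(self.max_system_version)
        return low <= parse_version(system_version) <= high


def map_order(record: dict) -> dict:
    """Tolerate a field that only newer system versions provide."""
    return {
        "order_id": record["order_id"],
        "incoterms": record.get("incoterms"),  # None on older versions
    }


erp_connector = ConnectorManifest("erp-connector", "1.4.0", "2.0", "2.9")
can_upgrade_to_2_4 = erp_connector.supports("2.4")
can_upgrade_to_3_0 = erp_connector.supports("3.0")
```

Running the `supports` check as a gate before an ERP upgrade catches an unsupported target version early, and reading new fields with `.get` keeps the connector working against older versions that lack them.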

Measuring ROI and Continuous Improvement

The ultimate measure of orchestration success is business impact. Does it reduce costs? Does it improve customer satisfaction? Does it accelerate revenue growth? Establish metrics that directly tie orchestration activities to business outcomes.

Key Performance Indicators and Outcome Tracking

Define KPIs that reflect your orchestration objectives. If you're automating order processing, measure order cycle time (time from order creation to shipment), order accuracy (percentage of orders shipped correctly), and cost per order processed. If you're automating customer engagement, measure response time (time from customer inquiry to response), customer satisfaction (CSAT or NPS), and conversion rate.
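To make the order-processing KPIs concrete, the sketch below computes them from a handful of invented order records. The field names and figures are assumptions; in practice the records would come from the ERP or the orchestration engine's event log.

```python
from datetime import datetime
from statistics import mean

# Invented sample data for illustration only.
orders = [
    {"created": datetime(2024, 1, 1, 9), "shipped": datetime(2024, 1, 2, 9),
     "correct": True, "cost": 4.0},
    {"created": datetime(2024, 1, 1, 10), "shipped": datetime(2024, 1, 3, 10),
     "correct": True, "cost": 6.0},
    {"created": datetime(2024, 1, 2, 8), "shipped": datetime(2024, 1, 2, 20),
     "correct": False, "cost": 5.0},
    {"created": datetime(2024, 1, 3, 8), "shipped": datetime(2024, 1, 4, 20),
     "correct": True, "cost": 5.0},
]

# Order cycle time: hours from order creation to shipment, averaged.
cycle_hours = [
    (o["shipped"] - o["created"]).total_seconds() / 3600 for o in orders
]
avg_cycle_time_hours = mean(cycle_hours)

# Order accuracy: share of orders shipped correctly.
order_accuracy = sum(1 for o in orders if o["correct"]) / len(orders)

# Cost per order processed.
cost_per_order = sum(o["cost"] for o in orders) / len(orders)
```

Computing the same three numbers for the period before and after the pilot gives a like-for-like comparison against the success metric.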

Implement attribution tracking to connect orchestration activities to outcomes. When the orchestration engine sends a targeted email to a customer, track whether that customer subsequently makes a purchase. When it automatically approves a credit application, track whether that customer pays on time. Build a feedback loop where outcomes inform the orchestration engine's decision-making - if a particular workflow consistently leads to poor outcomes, adjust the workflow or retrain the models.

Calculate the ROI of orchestration initiatives. Measure the cost of implementing and operating the orchestration engine against the benefits - cost savings from automation, revenue gains from faster sales cycles, risk reduction from better compliance. For a typical enterprise, an orchestration initiative breaks even within 6-12 months and delivers a positive return thereafter, though the actual payback period depends on implementation cost and how much work is automated.
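The arithmetic behind break-even and ROI is simple enough to sketch. All monetary figures below are invented for illustration, and the model assumes a one-time implementation cost plus constant monthly benefits and operating costs.

```python
import math
from typing import Optional


def break_even_month(implementation_cost: float,
                     monthly_benefit: float,
                     monthly_operating_cost: float) -> Optional[int]:
    """First month in which cumulative net benefit covers the upfront cost."""
    monthly_net = monthly_benefit - monthly_operating_cost
    if monthly_net <= 0:
        return None  # the initiative never pays back under these assumptions
    return math.ceil(implementation_cost / monthly_net)


def roi(implementation_cost: float,
        monthly_benefit: float,
        monthly_operating_cost: float,
        months: int) -> float:
    """ROI = (total benefits - total costs) / total costs over the period."""
    total_benefit = monthly_benefit * months
    total_cost = implementation_cost + monthly_operating_cost * months
    return (total_benefit - total_cost) / total_cost


# Illustrative figures, not benchmarks.
payback = break_even_month(240_000, 45_000, 15_000)
two_year_roi = roi(240_000, 45_000, 15_000, months=24)
```

With these inputs the net benefit is 30,000 per month, so the 240,000 upfront cost is recovered in month 8, and the 24-month ROI is 480,000 / 600,000 = 0.8, or 80%.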

Feedback Loops and Model Refinement

The orchestration engine should continuously learn and improve. Implement feedback loops where outcomes inform decision-making. If a machine learning model predicts that a customer will churn and that customer subsequently churns, the prediction was correct - keep it as a labeled example that reinforces the pattern. If the model predicts churn but the customer stays, the prediction was a false positive - feed it back as a corrective example so that retraining reduces similar errors.

Establish model retraining cycles. Retrain machine learning models monthly or quarterly with fresh data. Monitor model performance by tracking prediction accuracy and the false positive and false negative rates. When performance degrades (perhaps because customer behavior has changed), retrain the model ahead of schedule. Deploy a retrained model only when it outperforms the current one on held-out data.
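The monitoring step can be sketched as a confusion-matrix calculation plus a simple retraining trigger. The sample predictions, the baseline accuracy, and the tolerance are assumptions chosen for the example.

```python
from typing import Dict, List


def churn_metrics(predicted: List[int], actual: List[int]) -> Dict[str, float]:
    """Accuracy, false positive rate, and false negative rate (1 = churn)."""
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p == 1 and a == 1)
    fp = sum(1 for p, a in pairs if p == 1 and a == 0)
    tn = sum(1 for p, a in pairs if p == 0 and a == 0)
    fn = sum(1 for p, a in pairs if p == 0 and a == 1)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        "false_negative_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }


def needs_retraining(accuracy: float, baseline: float, tolerance: float) -> bool:
    """Flag the model when accuracy falls more than `tolerance` below baseline."""
    return accuracy < baseline - tolerance


# Invented outcomes for ten customers.
predicted = [1, 1, 0, 0, 1, 0, 1, 0, 0, 0]
actual = [1, 0, 0, 0, 1, 1, 1, 0, 0, 0]

metrics = churn_metrics(predicted, actual)
retrain = needs_retraining(metrics["accuracy"], baseline=0.9, tolerance=0.05)
```

In this sample the model gets 8 of 10 customers right, with one false alarm and one missed churner. Against an assumed baseline of 0.9 and tolerance of 0.05, that is enough of a drop to trigger retraining.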

Implement A/B testing for orchestration workflows. Test two variants of a workflow - perhaps different email subject lines, different timing, different offers - and measure which performs better. Use the results to refine the workflow. This systematic testing drives continuous improvement in orchestration effectiveness.
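Measuring "which performs better" usually means checking that the difference is larger than random noise. One common approach, shown here as a sketch, is a two-proportion z-test on conversion counts; the sample sizes and conversion numbers are invented.

```python
import math
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Variant A: 100 conversions from 2,000 emails; variant B: 140 from 2,000.
z, p_value = two_proportion_z_test(100, 2000, 140, 2000)
variant_b_wins = z > 0 and p_value < 0.05
```

With these illustrative numbers, variant B's 7% conversion rate against A's 5% gives a z-score of roughly 2.66, which clears the conventional 5% significance level. Smaller samples with the same rates might not, which is why the sample size should be decided before the test starts.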

Common Challenges and Solutions

Organizations implementing cognitive orchestration engines commonly encounter several challenges. Understanding these challenges and their solutions accelerates implementation and reduces risk.

Data Quality and Integration Complexity

The most common challenge is poor data quality in source systems. If customer data in the CRM is incomplete or inconsistent, the orchestration engine will make poor decisions based on poor data. Solution: implement data quality assessments before orchestration implementation. Identify data quality issues, remediate them, and establish ongoing data quality governance. Implement data validation in the orchestration engine that catches quality issues and triggers remediation workflows.
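In-engine validation might look like the following sketch, which checks a CRM customer record and routes it either onward or to a remediation workflow. The required fields, the deliberately loose email pattern, and the route names are all assumptions.

```python
import re
from typing import List, Tuple

# Loose pattern: something@something.something, with no spaces.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
REQUIRED_FIELDS = ("customer_id", "email", "country")


def validate_customer(record: dict) -> List[str]:
    """Return a list of data quality issues; empty means the record is usable."""
    issues = [f"missing:{name}" for name in REQUIRED_FIELDS if not record.get(name)]
    email = record.get("email")
    if email and not EMAIL_RE.match(email):
        issues.append("invalid:email")
    return issues


def route(record: dict) -> Tuple[str, List[str]]:
    """Send clean records to orchestration, others to a remediation workflow."""
    issues = validate_customer(record)
    if issues:
        return ("remediation", issues)
    return ("orchestration", [])


clean = route({"customer_id": "c1", "email": "ana@example.com", "country": "DE"})
dirty = route({"customer_id": "c2", "email": "not-an-email", "country": ""})
```

Returning the list of issues alongside the route gives the remediation workflow enough detail to assign the fix to the right data owner.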

Change Management and Organizational Resistance

Orchestration changes how work gets done. Sales representatives may resist automated engagement workflows if they perceive them as threatening their autonomy. Support teams may resist automated case routing if they don't trust the routing logic. Solution: involve stakeholders early in orchestration design. Explain how orchestration will make their jobs easier, not replace them. Provide training and support. Start with low-risk automation and build confidence gradually.

Legacy System Limitations

Older ERP or CRM systems may not have modern APIs or event streaming capabilities. Integrating with these systems requires workarounds. Solution: implement adapters that work around system limitations. For example, if the ERP doesn't support webhooks, implement a polling adapter that periodically queries the ERP for changes. These adapters are less elegant than native event streaming, but they work.
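A polling adapter can be sketched in a few lines. The example assumes the ERP exposes some monotonically increasing change marker (called `seq` here, a hypothetical field) and uses an in-memory list in place of the real ERP query.

```python
from typing import Callable, List


class PollingAdapter:
    """Turns periodic 'what changed since X?' queries into discrete events."""

    def __init__(self,
                 fetch_changes: Callable[[int], List[dict]],
                 on_event: Callable[[dict], None]) -> None:
        self._fetch_changes = fetch_changes
        self._on_event = on_event
        self._cursor = 0  # highest change sequence number already emitted

    def poll_once(self) -> int:
        """Emit every change newer than the cursor; return how many were emitted."""
        changes = sorted(self._fetch_changes(self._cursor), key=lambda c: c["seq"])
        for change in changes:
            self._on_event(change)
            self._cursor = change["seq"]
        return len(changes)


# In-memory stand-in for the legacy ERP's change table.
erp_rows = [{"seq": 1, "order": "A"}, {"seq": 2, "order": "B"}]


def fetch_since(cursor: int) -> List[dict]:
    return [row for row in erp_rows if row["seq"] > cursor]


emitted: List[dict] = []
adapter = PollingAdapter(fetch_since, emitted.append)

first = adapter.poll_once()                 # picks up the two existing rows
erp_rows.append({"seq": 3, "order": "C"})
second = adapter.poll_once()                # picks up only the new row
third = adapter.poll_once()                 # nothing new
```

In a deployment, `poll_once` would run on a timer, and the cursor would be persisted so a restart does not replay or skip changes. The polling interval sets the trade-off between event latency and load on the ERP.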

Balancing Automation with Human Control

Determining which decisions should be fully automated and which should require human approval is challenging. Automate too much and you lose control; automate too little and you don't realize the benefits. Solution: start with low-risk, high-volume decisions that are fully automated. For example, automatically approve orders under $1,000 from customers with perfect payment history. For higher-risk decisions, implement approval workflows where the orchestration engine recommends an action but a human approves it. As confidence builds, expand automation.
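The order-approval example above can be written as an explicit rule, which keeps the automation boundary visible and easy to move. The $1,000 limit comes from the example; the `Order` fields and decision labels are assumptions.

```python
from dataclasses import dataclass
from decimal import Decimal

# Boundary from the example: orders under $1,000 may be auto-approved.
AUTO_APPROVE_LIMIT = Decimal("1000")


@dataclass(frozen=True)
class Order:
    amount: Decimal
    late_payments: int   # 0 means a perfect payment history


def decide(order: Order) -> str:
    """Fully automate the low-risk case; send everything else to a human."""
    if order.amount < AUTO_APPROVE_LIMIT and order.late_payments == 0:
        return "auto_approve"
    return "human_review"


small_clean = decide(Order(Decimal("250.00"), late_payments=0))
small_risky = decide(Order(Decimal("250.00"), late_payments=2))
at_limit = decide(Order(Decimal("1000.00"), late_payments=0))
```

Expanding automation as confidence builds then amounts to raising `AUTO_APPROVE_LIMIT` or relaxing the payment-history condition, with each change reviewed like any other policy decision.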

Conclusion: Transforming Your Enterprise into a System of Action

The integration of a cognitive orchestration engine with your ERP, CRM, and WMS systems represents a fundamental shift in how enterprises operate. Rather than systems of record that passively store information, your enterprise systems become systems of action that actively drive business outcomes. Orders are processed faster. Customers are engaged more effectively. Operational inefficiencies are identified and corrected automatically. Compliance is maintained without manual oversight.

This transformation doesn't happen overnight. It requires careful architectural design, thoughtful data mapping, robust governance, and phased implementation. But the organizations that successfully implement cognitive orchestration gain substantial competitive advantages - lower costs, faster time-to-market, higher customer satisfaction, and the ability to scale operations without proportional increases in headcount.

At A.I. PRIME, we specialize in exactly this transformation. Our cognitive orchestration engine is purpose-built to connect with your existing enterprise systems, learning from your data and autonomously executing workflows that drive measurable business impact. We don't replace your ERP or CRM - we amplify them, turning them into intelligent, adaptive systems that respond to market changes in real time.

Our approach combines technical depth with business acumen. We understand enterprise architecture, data integration, and machine learning. But we also understand business processes, compliance requirements, and organizational change management. We work with your teams to design orchestration workflows aligned to your strategic objectives, implement them with minimal disruption to operations, and measure impact against clear ROI metrics.

Whether you're a Chief Digital Officer evaluating transformation strategies, a CTO tasked with modernizing enterprise systems, or an Automation Manager looking to scale operations, we can help. Our workflow design services ensure your orchestration architecture is optimized for your specific business context. Our automation blueprinting translates business requirements into technical specifications. Our agent network deployment brings orchestration to life across your enterprise. Our governance integration ensures orchestration operates within compliance boundaries. And our continuous enablement support ensures your teams have the skills and knowledge to operate and optimize orchestration over time.

The future belongs to enterprises that can transform data into action faster than their competitors. That's what cognitive orchestration enables. If you're ready to explore how orchestration can transform your enterprise, let's talk. Contact our team today to discuss your specific challenges, evaluate your current systems, and design a transformation roadmap that delivers measurable ROI. Your competitive advantage awaits.
